The artificial intelligence industry has spent months talking about larger models, longer context windows, and more computing power. In practice, though, one of the hardest problems is not only training these systems but keeping them running efficiently once they are in production. Google Research is now targeting that problem with TurboQuant, a compression technique introduced on March 24, 2026, that aims to reduce one of the major bottlenecks of LLMs: the memory consumed by the key-value cache, known as the KV cache.

This proposal arrives at a time when pressure on infrastructure is increasing. The longer the context a model can handle, the more memory it needs to reserve to retain already processed information and reuse it during inference. This “fast memory” enables responses without recalculating everything from scratch but increases costs and limits scalability. Google suggests that TurboQuant can address this problem without sacrificing useful performance, which is especially relevant for conversational assistants, RAG systems, semantic search engines, and vector databases.
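To make the link between context length and memory concrete, here is a rough back-of-the-envelope sketch. The model shape (32 layers, 8 key-value heads, head dimension 128) and the 16-bit storage are hypothetical values chosen for illustration; they do not come from Google's announcement.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len, batch_size, bits_per_value):
    """Bytes needed to cache keys and values for every layer, head, and token."""
    # Factor 2: one key vector and one value vector per head and token.
    values_per_token = 2 * num_layers * num_kv_heads * head_dim
    total_bits = values_per_token * seq_len * batch_size * bits_per_value
    return total_bits / 8


# Hypothetical model shape, chosen only for illustration.
shape = dict(num_layers=32, num_kv_heads=8, head_dim=128)

for seq_len in (8_192, 32_768, 131_072):
    size = kv_cache_bytes(seq_len=seq_len, batch_size=1, bits_per_value=16, **shape)
    print(f"{seq_len:>7} tokens: {size / 2**30:.1f} GiB")
```

Under these assumed dimensions, a single request grows from 1 GiB of cache at 8,192 tokens to 16 GiB at 131,072 tokens, and the total scales linearly with the number of concurrent requests.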

Less memory, lower costs, and more room to scale

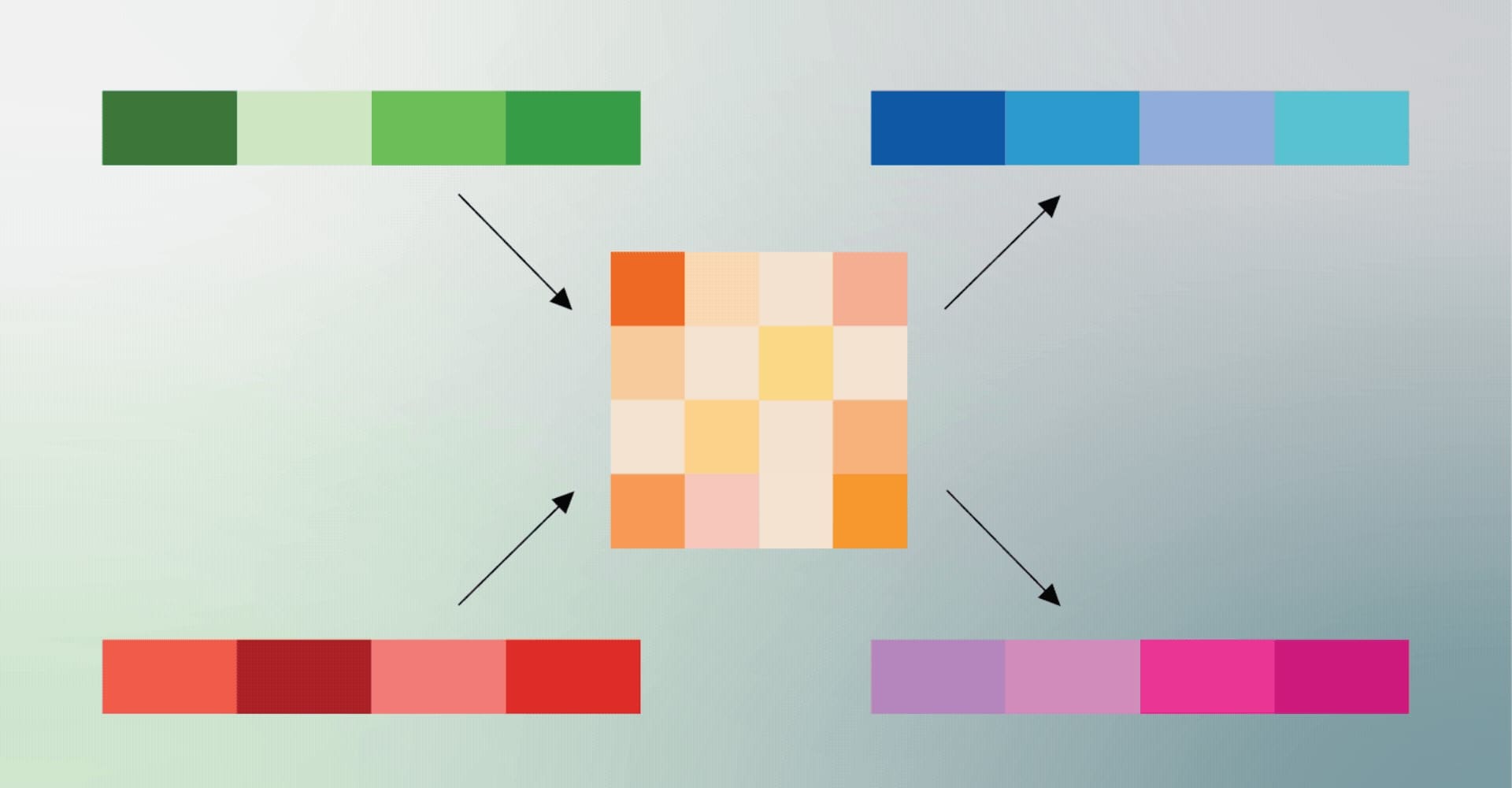

The technical core of the announcement is vector quantization, a classical compression technique that reduces the size of high-dimensional vectors. These vectors are how models represent meaning, semantic relationships, and data features. According to Google, many traditional quantization methods carry extra memory overhead because they must store additional constants for every small block of compressed data. TurboQuant is presented as a way to reduce this hidden cost.
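The per-block overhead described above can be estimated with simple arithmetic. The sketch below assumes a generic block-wise scheme that stores two 16-bit constants (for example a scale and an offset) per block; the block sizes and constant widths are illustrative assumptions, not figures from Google.

```python
def effective_bits(payload_bits, block_size, constants_per_block=2, constant_bits=16):
    """Average bits per value once per-block constants are counted.

    constants_per_block and constant_bits are assumptions: a scale and an
    offset stored in 16 bits each, as in many generic block-wise schemes.
    """
    overhead = constants_per_block * constant_bits / block_size
    return payload_bits + overhead


for block_size in (32, 64, 128):
    print(f"3-bit payload, block of {block_size}: "
          f"{effective_bits(3, block_size):.2f} bits per value")
```

With a block of 32 values, a nominal 3-bit format actually costs 4 bits per value under these assumptions, which is the kind of overhead a method without per-block constants would avoid.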

To achieve this, Google combines two components. The first is PolarQuant, a technique that transforms vectors so they compress more efficiently and need less costly normalization. The second is QJL, short for Quantized Johnson-Lindenstrauss, which applies a 1-bit correction to the small residual error that remains after compression. According to Google, the combination allows aggressive compression without introducing bias that would distort the model’s attention calculations. PolarQuant is also scheduled for presentation at AISTATS 2026 on May 4.
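The idea of a 1-bit, unbiased correction can be illustrated with a generic sign-random-projection estimator. This is a minimal sketch and not Google's implementation: it applies the sketch directly to a key vector rather than to a residual, it omits the PolarQuant stage entirely, and all function names are hypothetical. It relies on the standard result that, for a Gaussian projection matrix, scaling the sign-based sum by sqrt(pi/2) times the key's norm gives an unbiased estimate of the inner product.

```python
import math
import random


def gaussian_matrix(rows, cols, rng):
    """Random projection matrix with i.i.d. standard normal entries."""
    return [[rng.gauss(0.0, 1.0) for _ in range(cols)] for _ in range(rows)]


def project(matrix, vec):
    """Matrix-vector product in plain Python."""
    return [sum(m * v for m, v in zip(row, vec)) for row in matrix]


def sign_sketch(matrix, key):
    """Keep only the sign of each projection (1 bit each) plus the key's norm."""
    bits = [1 if p >= 0 else -1 for p in project(matrix, key)]
    norm = math.sqrt(sum(x * x for x in key))
    return bits, norm


def estimate_dot(matrix, query, bits, key_norm):
    """Estimate <query, key> from the 1-bit sketch; the query stays unquantized."""
    proj_q = project(matrix, query)
    scale = math.sqrt(math.pi / 2) * key_norm / len(matrix)
    return scale * sum(p * b for p, b in zip(proj_q, bits))


rng = random.Random(0)
dim, num_projections = 32, 1024  # illustrative sizes
S = gaussian_matrix(num_projections, dim, rng)
key = [rng.gauss(0.0, 1.0) for _ in range(dim)]
query = [rng.gauss(0.0, 1.0) for _ in range(dim)]

bits, key_norm = sign_sketch(S, key)
exact = sum(q * k for q, k in zip(query, key))
print("exact inner product:   ", round(exact, 3))
print("estimate from 1-bit sketch:", round(estimate_dot(S, query, bits, key_norm), 3))
```

The estimate is noisy for any single pair, but its error averages out to zero, which is the property that matters when many such inner products feed an attention computation.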

For a tech audience, the important aspect isn’t just the elegant mathematical approach, but its architectural implications. If such a technique genuinely reduces memory pressure, the potential impact goes beyond benchmarking: more users per GPU, less dependence on extreme-memory configurations, greater flexibility to extend context windows, and potentially lower inference costs. In a market where hardware efficiency has become nearly as critical as model quality, such optimizations could be as strategic as a new product launch.

What tests show and what needs nuance

Google claims to have evaluated TurboQuant, PolarQuant, and QJL on long-context benchmarks such as LongBench, Needle In A Haystack, ZeroSCROLLS, RULER, and L-Eval, using models like Gemma and Mistral. In published results, the company states that TurboQuant achieves perfect results on “needle in a haystack” type tasks while reducing KV memory size by at least 6 times. It also claims that the technique can quantize that memory down to 3 bits without the need for training or fine-tuning, with negligible impact on runtime.

On NVIDIA H100 accelerators, Google adds another figure that explains the industry's interest: a 4-bit version of TurboQuant achieved up to 8 times higher performance than unquantized 32-bit keys when computing attention logits. If this holds outside the lab, the benefits would be both economic and operational: faster inference and better utilization of expensive, scarce resources.

However, these findings need to be read with some caveats. In its general-audience post, Google describes quantization down to 3 bits with no loss of accuracy in its tests. Yet on the paper’s OpenReview page for ICLR 2026, the abstract describes “absolute neutrality of quality” at 3.5 bits per channel and only marginal degradation at 2.5 bits per channel. This doesn’t invalidate the contribution, but it shows that results may depend on the specific scenario, the measurement criteria, and the evaluation workload.
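Bit widths only translate into a compression ratio relative to a baseline precision, which this article does not specify for the "at least 6 times" figure. The short calculation below assumes 16-bit and 32-bit baselines purely for comparison and ignores any side information a real format might store.

```python
def compression_ratio(baseline_bits, quantized_bits):
    """How many times smaller the quantized representation is."""
    return baseline_bits / quantized_bits


# Baselines are assumptions; the quantized widths are those quoted in the article.
for baseline_bits in (16, 32):
    for quantized_bits in (2.5, 3, 3.5, 4):
        ratio = compression_ratio(baseline_bits, quantized_bits)
        print(f"{baseline_bits}-bit -> {quantized_bits} bits: {ratio:.2f}x smaller")
```

Against a 16-bit baseline, 3 bits gives about 5.3x and 2.5 bits gives 6.4x, so the headline ratio depends on which baseline and which bit width are being compared.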

This nuance matters because AI research often shows impressive results in controlled environments but runs into friction in production: unoptimized kernels, integration issues, inconsistent compatibility with serving engines, or notable differences between models. In this case, Google Research positions TurboQuant as a theoretically solid algorithmic contribution, and its OpenReview page lists it as accepted as a poster at ICLR 2026. That is a sign of academic and technical interest, though it doesn’t yet translate into immediate, universal adoption across all inference stacks.

Beyond Gemini: why it could also impact vector search

One of the most interesting aspects of the announcement is that Google doesn’t limit TurboQuant to the KV cache problem in generative models. The company emphasizes that these techniques are also useful for high-dimensional vector search—systems that find results based on semantic proximity rather than simple keyword matching. This area is key to the evolution of search engines, retrieval-based assistants, and much enterprise software built on embeddings.
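For readers unfamiliar with vector search, the sketch below shows the basic operation that such compression would need to preserve: ranking stored embeddings by similarity to a query. The three-dimensional vectors and document names are made up for illustration, and the search is uncompressed brute force, not TurboQuant.

```python
import math


def cosine(a, b):
    """Cosine similarity between two vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def top_k(query, index, k=1):
    """Return the names of the k stored vectors most similar to the query."""
    ranked = sorted(index, key=lambda name: cosine(query, index[name]), reverse=True)
    return ranked[:k]


# Toy embeddings, invented for this example.
index = {
    "gpu pricing report": [0.9, 0.1, 0.0],
    "pasta recipe": [0.0, 0.2, 0.9],
    "memory bandwidth guide": [0.8, 0.3, 0.1],
}
query = [1.0, 0.2, 0.0]

print(top_k(query, index, k=2))
```

In a production index the stored vectors number in the millions and have hundreds or thousands of dimensions, which is why the bits spent per coordinate have a direct effect on memory footprint.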

In this context, TurboQuant aligns with a growing trend: the next major competitive advantage in AI won’t belong only to whoever has the biggest model but to whoever can run models faster, more efficiently, and with less memory. Google aims to position this research within that debate, not merely as an internal boost for Gemini. If extreme vector compression can move from a research paper into inference engines and search infrastructure, the impact could be felt in costs, latency, and deployment density. In an industry constrained by high-bandwidth memory shortages and GPU prices, this isn’t just a technical detail but a strategic variable.

FAQs

What is Google’s TurboQuant?

It’s a vector compression method introduced by Google Research to reduce memory consumption in AI models and vector search systems, specifically targeting the KV cache bottleneck during inference.

Why is the KV cache so important in LLMs?

Because it stores previously processed information that the model reuses when generating responses. This accelerates inference but also significantly increases memory usage when contexts are long or multiple requests are processed concurrently.

Is TurboQuant already deployed in Google’s commercial products?

Official sources describe TurboQuant as a research contribution with potential applications in systems like Gemini. No public timeline for widespread commercial deployment has been announced.

How does TurboQuant benefit semantic search and vector databases?

Google states that it enables building and querying vector indexes with much less memory, minimal preprocessing, and high accuracy—relevant for search engines, RAG systems, and enterprise applications based on embeddings.