Quantum computing has promised for years to accelerate problems that classical supercomputers handle only with great difficulty. So far, many demonstrations have been scientifically important but hard to translate into immediate industrial utility. Q-CTRL has now entered this delicate area with an ambitious announcement: it claims to have found evidence of a “practical quantum advantage” in a materials simulation relevant to the energy sector, run on the IBM Quantum platform.

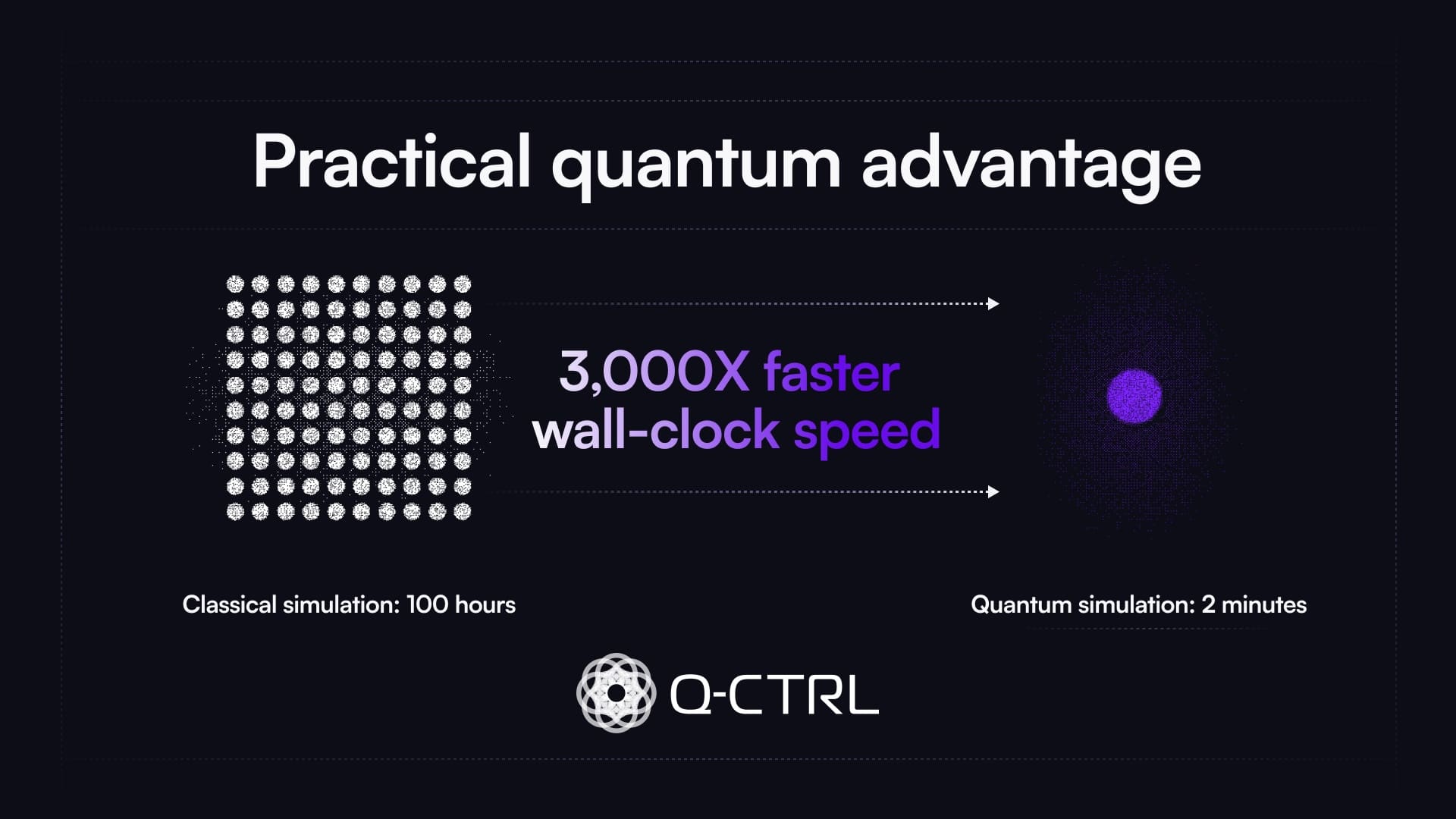

The company states that its performance management software allowed it to complete in about two minutes a simulation that would have taken over 100 hours with optimized classical tools. The result concerns a fermionic simulation of the one-dimensional Fermi-Hubbard model, a family of problems widely used in condensed matter physics to study how electrons interact in materials. The comparison suggests a runtime speedup of up to 3,000 times, although there are important nuances regarding what is being compared and how far the conclusion extends.
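The headline ratio follows directly from the two reported runtimes. As a quick check, the sketch below redoes the arithmetic. The function name `runtime_speedup` is illustrative only, and because both inputs are approximate (“about two minutes”, “over 100 hours”), the output should be read as an order of magnitude rather than a measured value.

```python
def runtime_speedup(classical_hours, quantum_minutes):
    """Ratio of classical to quantum runtime, with both expressed in minutes."""
    classical_minutes = classical_hours * 60
    return classical_minutes / quantum_minutes


# Reported figures: over 100 hours classically, about 2 minutes on the quantum processor.
print(runtime_speedup(classical_hours=100, quantum_minutes=2))  # 3000.0
```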

What has Q-CTRL demonstrated?

The technical work, published on arXiv under the title “Fast, accurate, high-resolution simulation of large-scale Fermi-Hubbard models on a digital quantum processor,” describes a digital simulation of the one-dimensional Fermi-Hubbard model on a superconducting quantum processor. According to Q-CTRL’s report, the team used up to 120 qubits, 30 Trotter steps in its larger experiments, and over 10,000 two-qubit operations.
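For readers unfamiliar with the term, a Trotter step approximates time evolution under a Hamiltonian with non-commuting parts, H = A + B, by alternating short evolutions under A and B separately. More steps give a better approximation but a deeper circuit. The sketch below illustrates this on a toy two-level system with H = X + Z (Pauli matrices), chosen because its exact evolution has a closed form. It is not the Fermi-Hubbard circuit from the paper, and all function names are hypothetical.

```python
import cmath
import math


def matmul(a, b):
    """Multiply two 2x2 complex matrices given as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]


def exp_x(theta):
    """exp(-i * theta * X) for the Pauli X matrix."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, -1j * s], [-1j * s, c]]


def exp_z(theta):
    """exp(-i * theta * Z) for the Pauli Z matrix."""
    return [[cmath.exp(-1j * theta), 0], [0, cmath.exp(1j * theta)]]


def exact_evolution(t):
    """exp(-i * t * (X + Z)), using the identity (X + Z)^2 = 2 * I."""
    w = math.sqrt(2.0)
    c, s = math.cos(w * t), math.sin(w * t)
    k = -1j * s / w
    return [[c + k, k], [k, c - k]]


def trotter_evolution(t, steps):
    """First-order Trotter approximation: (exp(-i dt X) exp(-i dt Z))^steps."""
    dt = t / steps
    one_step = matmul(exp_x(dt), exp_z(dt))
    u = [[1, 0], [0, 1]]
    for _ in range(steps):
        u = matmul(one_step, u)
    return u


def trotter_error(t, steps):
    """Largest entrywise difference between the Trotter and exact unitaries."""
    approx, exact = trotter_evolution(t, steps), exact_evolution(t)
    return max(abs(approx[i][j] - exact[i][j])
               for i in range(2) for j in range(2))


for n in (3, 30, 300):
    print(n, trotter_error(1.0, n))
```

Running it shows the error shrinking roughly in proportion to 1/steps, the expected behavior of a first-order product formula. On real hardware each extra step also adds noisy gates, so the step count is a trade-off rather than a free parameter.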

The chosen problem is no coincidence. Material and chemical simulations consume a significant portion of global supercomputing time, and many energy-related challenges depend on better understanding the electronic properties of materials: superconductors, batteries, photovoltaics, catalysis, storage, and generation. Quantum computers are attractive in this field because the systems they aim to simulate also follow quantum laws.

Q-CTRL compared its results with classical reference methods based on tensor networks, specifically TDVP (Time-Dependent Variational Principle), which is widely used by the scientific community. The company claims that raising the resolution of the classical simulations to match the quantum results cost more than 3,000 times the runtime of the IBM quantum processor.

| Element | Q-CTRL & IBM Demonstration |
|---|---|
| Problem studied | 1D fermionic simulation of the Fermi-Hubbard model |
| Maximum scale | Up to 120 qubits |
| Quantum operations | Over 10,000 two-qubit operations, according to Q-CTRL |
| Classical comparison | TDVP simulation using tensor networks |
| Reported quantum time | Approximately two minutes |
| Reported classical time | Over 100 hours |
| Claimed acceleration | Up to 3,000 times in runtime |
| Target sector | Materials science and energy |

The key element isn’t just the hardware. Q-CTRL focuses on infrastructure software for quantum technology, arguing that the advantage emerges from combining current quantum processors with advanced error suppression techniques during runtime. In noisy quantum machines, errors are the biggest enemy: the more gates executed, the higher the likelihood that the result degrades. Reducing this degradation without adding unmanageable overhead is one of the major challenges for practical quantum computation.
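To see why gate count matters, consider a deliberately crude model in which each two-qubit gate fails independently with probability p, so a circuit of N gates runs without any error with probability (1 - p)^N. The error rates below are hypothetical round numbers, not figures reported by Q-CTRL or IBM, and the model ignores correlated noise as well as the error suppression the article describes.

```python
def error_free_probability(gate_error, gate_count):
    """Probability that no gate fails, assuming independent, identical gate errors."""
    return (1.0 - gate_error) ** gate_count


GATE_COUNT = 10_000  # order of magnitude of the two-qubit operations cited above

# Hypothetical per-gate error rates, for illustration only.
for p in (1e-2, 1e-3, 1e-4):
    print(f"p = {p:g}: error-free probability = {error_free_probability(p, GATE_COUNT):.3g}")
```

Under these assumptions, even a 0.1% error per gate leaves a 10,000-gate circuit almost certain to contain at least one fault, which is why suppression and mitigation techniques, rather than raw hardware alone, are central to the claim.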

Why is this termed “practical quantum advantage”?

The phrase “quantum advantage” has often been used loosely. It doesn’t mean that a quantum computer surpasses classical supercomputers at every task, nor does it imply the existence of a universal, fault-tolerant quantum machine ready to replace classical computing. In this case, Q-CTRL speaks of “practical quantum advantage” because, by its account, the quantum processor outperformed the best available classical approach on a specific, known problem of scientific or commercial interest.

This nuance is crucial. The demonstration doesn’t suddenly lead to discovering new battery materials or room-temperature superconductors. It shows that, for a relevant family of simulations, a current quantum processor with appropriate software can compete in total runtime with widely used classical tools. It’s a constrained but meaningful result if independently confirmed and reproduced.

Q-CTRL itself acknowledges a relevant caution: specialized classical algorithms or GPU acceleration improvements might reduce the observed advantage. They clarify that their claim pertains to what is achievable today with existing classical tools, not a comparison with a future, hypothetical solution.

This honesty is important because the history of quantum computing features many announcements later tempered by classical algorithmic advances. Demonstrations of supremacy or advantage have often been scaled back after new algorithms, supercomputer optimizations, or hybrid approaches are developed. Therefore, the key question isn’t only how impressive the current result is, but whether it indicates a class of problems that will retain a quantum advantage as hardware scales.

Implications for energy and materials

The potential industrial utility lies in simulating strongly correlated electrons. In complex materials, properties critical for energy transmission, storage, or generation depend on quantum interactions that are hard to model. Classical methods leverage powerful approximations but can become costly or inaccurate as the system size, evolution time, or correlation complexity increases.

If quantum computing can accelerate part of this work, the impact on R&D could be significant. It wouldn’t replace labs, experiments, or classical simulations, but it could serve as an additional tool. For example: use classical methods where they’re efficient, turn to quantum processors where classical costs escalate, and combine both sets of results to guide experiments.

The announcement also carries a market perspective. For years, much of the quantum narrative has been caught between long-term promises and hard-to-monetize applications. Q-CTRL’s result shifts the focus toward practical returns achievable with current machines, rather than a distant era of fault-tolerant quantum computers. The company also says that the software configuration used will be integrated as a Qiskit Function into the IBM Quantum platform, enabling other researchers to build on these results.

This step is critical. If the advantage depends on a closed configuration, community evaluation will be delayed. If other teams can reproduce, adapt, and challenge the method, the credibility of the result will grow. In computational science, usefulness isn’t only about one milestone but about turning it into a repeatable tool.

What still needs to be demonstrated

Q-CTRL’s announcement shouldn’t be read as the end of the wait for broadly useful quantum computing. Current quantum computers remain limited by noise, connectivity, calibration, gate fidelity, hardware availability, and the circuit sizes that still yield useful results. Software can greatly expand capabilities but can’t eliminate all physical constraints.

It also remains to be seen how this advantage translates to full-scale industrial problems. The Fermi-Hubbard model is valuable and well-established, but real materials often require higher dimensions, more physical terms, complex conditions, and experimental validation. Moving from a reference simulation to a discovery pipeline for materials in energy or chemistry will require further work.

Nevertheless, the result is valuable because it shifts the conversation. It’s no longer only about when fault-tolerant quantum computers will arrive, but about what current machines can contribute when combined with advanced software, error mitigation, and benchmarking. Practical quantum computing might initially thrive in niche applications before becoming a general platform.

For IBM, this achievement also reinforces its strategy of opening up quantum hardware through a broad platform and software ecosystem. Jay Gambetta, IBM Research Director, emphasizes in Q-CTRL’s announcement that the question isn’t whether quantum computers are useful, but how to use them effectively. This framing aligns with the hybrid approach many quantum companies have adopted: combining advanced hardware, specialized software, and targeted problems.

The quantum industry has needed fewer grand promises and more measurable demonstrations. Q-CTRL’s figure of 3,000 times faster is striking, but its true importance lies in the nature of the comparison: a scientifically relevant problem, a well-known classical tool, quantum hardware accessible via IBM, and software designed to extract useful results from noisy devices. If others reproduce and expand this work, it could be a significant step toward integrating quantum computing into industrial R&D pipelines.

Frequently Asked Questions

What has Q-CTRL announced?

Q-CTRL claims to have demonstrated practical quantum advantage in a materials simulation on IBM Quantum, reporting a runtime speedup of up to 3,000x over current classical tools.

What problem was simulated?

They worked with the 1D Fermi-Hubbard model, used in condensed matter physics to study the dynamics of interacting electrons.

Does this mean quantum computers now outperform classical ones in all tasks?

No. The result applies to a specific problem and a comparison with current classical methods. It doesn’t imply universal quantum superiority.

Why is this important for the energy sector?

Because many energy advances depend on discovering and understanding new materials. Quantum simulation could accelerate some of that research when integrated into R&D workflows alongside classical methods.

via: q-ctrl