OpenAI may be preparing one of the most ambitious infrastructure moves so far in the race for artificial intelligence. According to a report by The Information, subsequently picked up by other financial outlets, the company has agreed to spend more than $20 billion over three years on servers based on Cerebras chips, in a deal that would also include warrants or equity participation and additional support for data centers tailored to this hardware. Neither OpenAI nor Cerebras has publicly confirmed these details.

What is confirmed is that the two companies announced in January a multi-year alliance to deploy 750 MW of low-latency inference computing on Cerebras wafer-scale systems, to be rolled out in phases starting in 2026. OpenAI explained at the time that this capacity would be aimed at speeding up response times for its services in scenarios where latency is especially critical, such as programming, complex searches, image generation, and agents.

This nuance matters because it shows that OpenAI is not trying to replace its entire hardware fleet with Cerebras, but rather to diversify its infrastructure according to workload. The AI market is no longer solely about training massive models but increasingly about inference: delivering responses to millions of users quickly, interactively, and cost-effectively. That’s where Cerebras aims to gain strength.

A bet that goes beyond just buying chips

If the deal closes on the terms reported, it would not be a one-time purchase of accelerators but a much deeper infrastructure partnership. English-language media reports point to a combination of computing capacity, funding for data centers, and possibly OpenAI gaining equity in Cerebras via warrants, with the option to increase its stake if overall spending continues to grow. For now, however, none of these elements has been officially confirmed.

What is confirmed is OpenAI’s strategic interest in expanding its computing base. The company announced in March the closing of a $122 billion equity funding round, with a post-money valuation of $852 billion. OpenAI explicitly stated that this funding would be used to accelerate “the next phase” of its AI infrastructure.
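As a quick check on how those two figures relate, the sketch below derives the implied pre-money valuation and the share of the company that the new capital would represent. It assumes the full $122 billion is counted inside the $852 billion post-money figure; the function name is illustrative and not taken from any source.

```python
def round_summary(raised_bn: float, post_money_bn: float) -> tuple[float, float]:
    """Return (pre-money valuation in $bn, fraction of the company held by new money).

    Assumption: the entire amount raised is included in the post-money valuation.
    """
    pre_money_bn = post_money_bn - raised_bn
    new_money_share = raised_bn / post_money_bn
    return pre_money_bn, new_money_share


pre_money, share = round_summary(raised_bn=122, post_money_bn=852)
print(f"Implied pre-money valuation: ${pre_money:.0f} billion")
print(f"Share represented by new capital: {share:.1%}")
```

Under that assumption, the round would imply a pre-money valuation of about $730 billion, with the new capital representing roughly 14% of the company.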

In that context, Cerebras fits as a specialized piece within an increasingly heterogeneous hardware portfolio. The move does not appear intended to displace other manufacturers across the board, but rather to strengthen a specific layer: fast, low-latency inference.

Why Cerebras has become an interesting piece

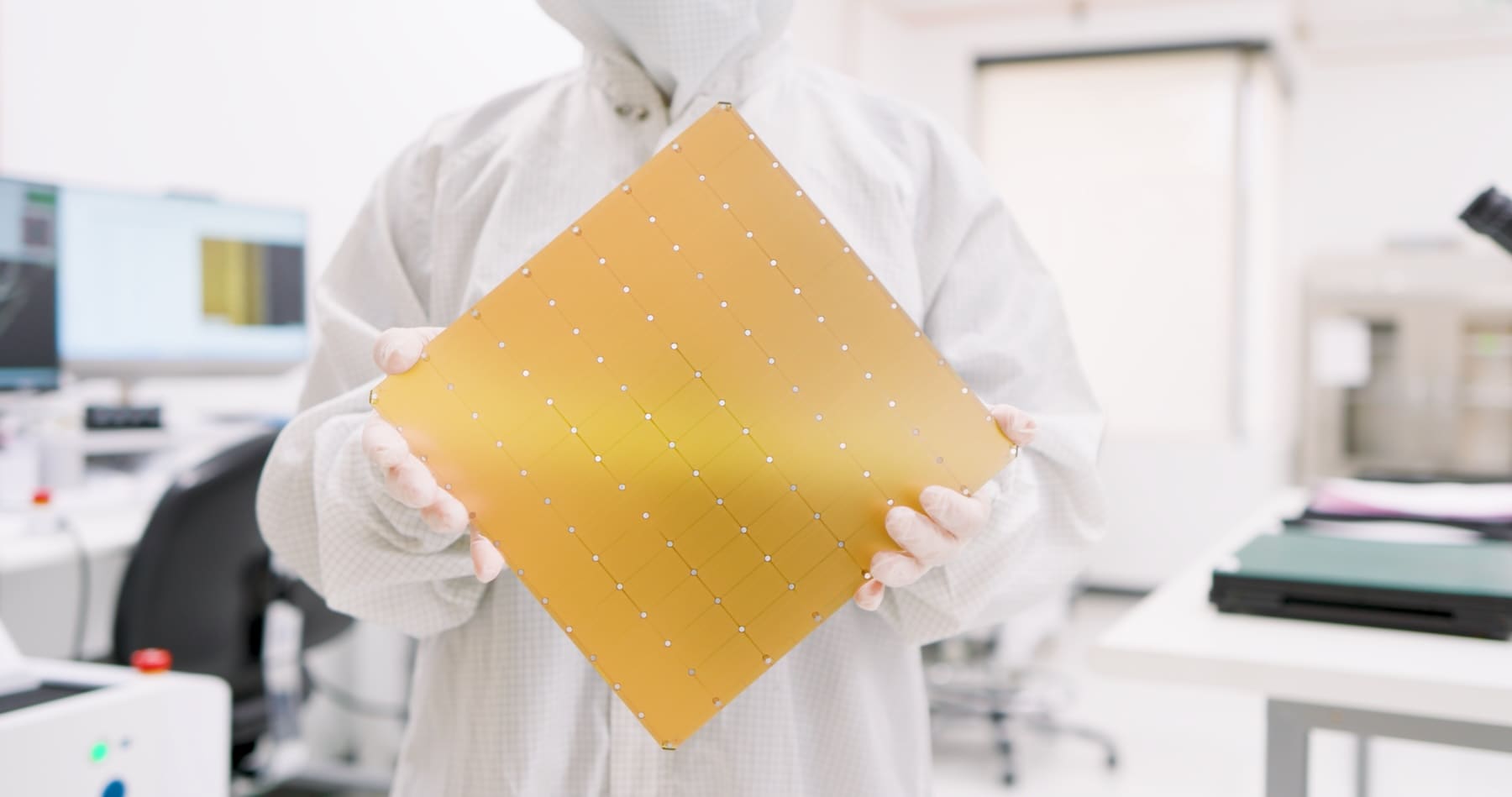

Cerebras gained prominence by challenging one of the most established conventions in the industry: instead of building small chips and scaling out with large clusters, it bet on wafer-scale processors. Its WSE-3, according to the company, measures 46,225 mm², contains 4 trillion transistors, and delivers 125 petaflops of AI compute across 900,000 AI-optimized cores. Cerebras presents it as the largest AI chip ever manufactured.
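To put those company-reported figures in perspective, the following back-of-the-envelope sketch derives a few ratios from them. It rests on two simplifying assumptions that are not claims from Cerebras: that the die is square, and that transistors and peak compute are divided evenly across cores (in practice, on-chip memory and interconnect also consume transistors).

```python
import math

# Figures as reported by Cerebras for the WSE-3 (see the paragraph above).
AREA_MM2 = 46_225
TRANSISTORS = 4e12
PEAK_FLOPS = 125e15
CORES = 900_000

# Assumption: a square die, so the side is the square root of the area.
side_mm = math.isqrt(AREA_MM2)

# Assumption: an even split across cores, which ignores memory and fabric.
transistors_per_core = TRANSISTORS / CORES
flops_per_core = PEAK_FLOPS / CORES
density_per_mm2 = TRANSISTORS / AREA_MM2

print(f"Die side (if square): {side_mm} mm")
print(f"Transistors per core: {transistors_per_core / 1e6:.2f} million")
print(f"Peak compute per core: {flops_per_core / 1e9:.1f} gigaflops")
print(f"Transistor density: {density_per_mm2 / 1e6:.1f} million per mm²")
```

Under these assumptions the area corresponds to a square of 215 mm per side, far larger than a conventional GPU die, which is the point of the wafer-scale approach.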

Beyond the impressive numbers, the key is its focus on inference. The company insists that its architecture reduces common bottlenecks in GPU-based systems and supports very fast real-time responses. This promise has made it an attractive option for providers seeking to accelerate conversational experiences, code generation, and other workloads where every millisecond counts.

It’s no coincidence that AWS has also moved to adopt this approach. In March, Amazon and Cerebras announced a collaboration to bring CS-3 systems to AWS data centers and make them available through Amazon Bedrock. The combined architecture will split the workload between AWS Trainium for the prefill (prompt-processing) phase and Cerebras’ CS-3 for the decoding phase, which is precisely the functional separation emerging in modern inference systems.
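The two-phase split described above, often called prefill and decode, can be illustrated with a toy sketch. Everything here is hypothetical: the `KVCache` class, the function names, and the arithmetic "model" are stand-ins for illustration and do not reflect any AWS or Cerebras API.

```python
from dataclasses import dataclass, field


@dataclass
class KVCache:
    """Hypothetical stand-in for the state that the prompt phase hands to decoding."""
    tokens: list[int] = field(default_factory=list)


def prefill(prompt_tokens: list[int]) -> KVCache:
    """Phase 1: ingest the whole prompt in one pass (typically compute-bound)."""
    return KVCache(tokens=list(prompt_tokens))


def toy_next_token(cache: KVCache) -> int:
    """Toy 'model': the next token is the sum of the context modulo 100."""
    return sum(cache.tokens) % 100


def decode(cache: KVCache, max_new_tokens: int) -> list[int]:
    """Phase 2: generate one token at a time (typically latency-bound)."""
    generated = []
    for _ in range(max_new_tokens):
        token = toy_next_token(cache)
        cache.tokens.append(token)  # each new token extends the context
        generated.append(token)
    return generated


def generate(prompt_tokens: list[int], max_new_tokens: int) -> list[int]:
    cache = prefill(prompt_tokens)         # could run on one pool of accelerators
    return decode(cache, max_new_tokens)   # could run on a different, latency-tuned pool


print(generate([1, 2, 3], max_new_tokens=3))
```

Because the two phases have different performance profiles, a serving system can place each one on the hardware that suits it and pass only the intermediate state between them.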

The AI race shifts its focus

Over the past two years, much of the industry’s narrative has been dominated by foundation model training and the massive accumulation of GPUs. However, the next battle seems to be shifting to another front: who can serve these models faster, with lower latency, better cost-efficiency, and at larger scale.

This shift benefits players like Cerebras, which are not competing to set the universal standard for training but are offering very specific advantages in inference. OpenAI hinted at this in January when explaining its deal with Cerebras: it wasn’t just about more power, but about response times and interactive experiences for end-users.

If OpenAI does expand its relationship with Cerebras as reported, the message would be clear: future AI infrastructure will not rely on a single chip family or architecture. It will instead be a mix of specialized pieces tailored to different tasks: training, massive inference, low-latency inference, agents, multimodal processing, or enterprise services.

A move that also impacts Cerebras

For Cerebras, this deal would be hugely significant. The company has revived its plans for a U.S. stock market listing, and having a client like OpenAI, with multibillion-dollar commitments tied to real deployments, clearly improves its market positioning. The financial press is already linking this alliance to the company’s renewed attempt to go public.

It also reinforces an idea that seemed remote until recently: being “the alternative to NVIDIA” is no longer enough. What matters now is becoming the right alternative for a very specific part of the AI stack. Cerebras is aiming to fill precisely that niche.

Frequently Asked Questions

Has OpenAI officially confirmed an investment exceeding $20 billion in Cerebras?

No. The only official confirmation is the January alliance to deploy 750 MW of inference computing. Details about a deal exceeding $20 billion, equity participation, and additional data center support come from reports by The Information and other outlets, but they have not been confirmed by OpenAI or Cerebras.

What makes Cerebras different from other AI hardware manufacturers?

Its most distinctive feature is wafer-scale architecture. The WSE-3 covers a full wafer, offers 900,000 AI-optimized cores, 4 trillion transistors, and 125 petaflops, according to the company.

Why would OpenAI be interested in this hardware?

Because Cerebras is targeting fast, low-latency inference, a key requirement for conversational products, agents, real-time image generation, and code execution. This complements other infrastructure layers focused on training.

How does all this relate to OpenAI’s recent funding?

OpenAI announced in March a $122 billion committed capital round to accelerate the next phase of its AI infrastructure. That financial capacity provides the context for large-scale infrastructure deals like the one reported with Cerebras.