NVIDIA used GTC 2026 to state clearly that its next major push is no longer centered on a single super-powerful GPU but on a complete platform designed to cover every phase of modern AI. Under the name Vera Rubin, the company has introduced a lineup of seven chips and five types of racks that, according to its official announcement, are already in production and can be combined into a single “supercomputer” focused on pretraining, post-training, test-time scaling, and real-time agent inference.

The innovation isn’t just in raw power but also in the shift of approach. For years, NVIDIA promoted the idea that one GPU family could meet nearly any relevant AI need. Vera Rubin suggests a much more ambitious and pragmatic evolution: specialized CPUs for agent environments and reinforcement learning, GPUs for training and context, Groq’s LPUs for low-latency inference, storage racks designed for contextual memory, and an integrated Ethernet and InfiniBand network layer from the start. Practically, it’s an admission that the era of agentic AI can no longer be resolved solely with more FLOPS on a GPU.

From Single Chip to Rack and POD Platform

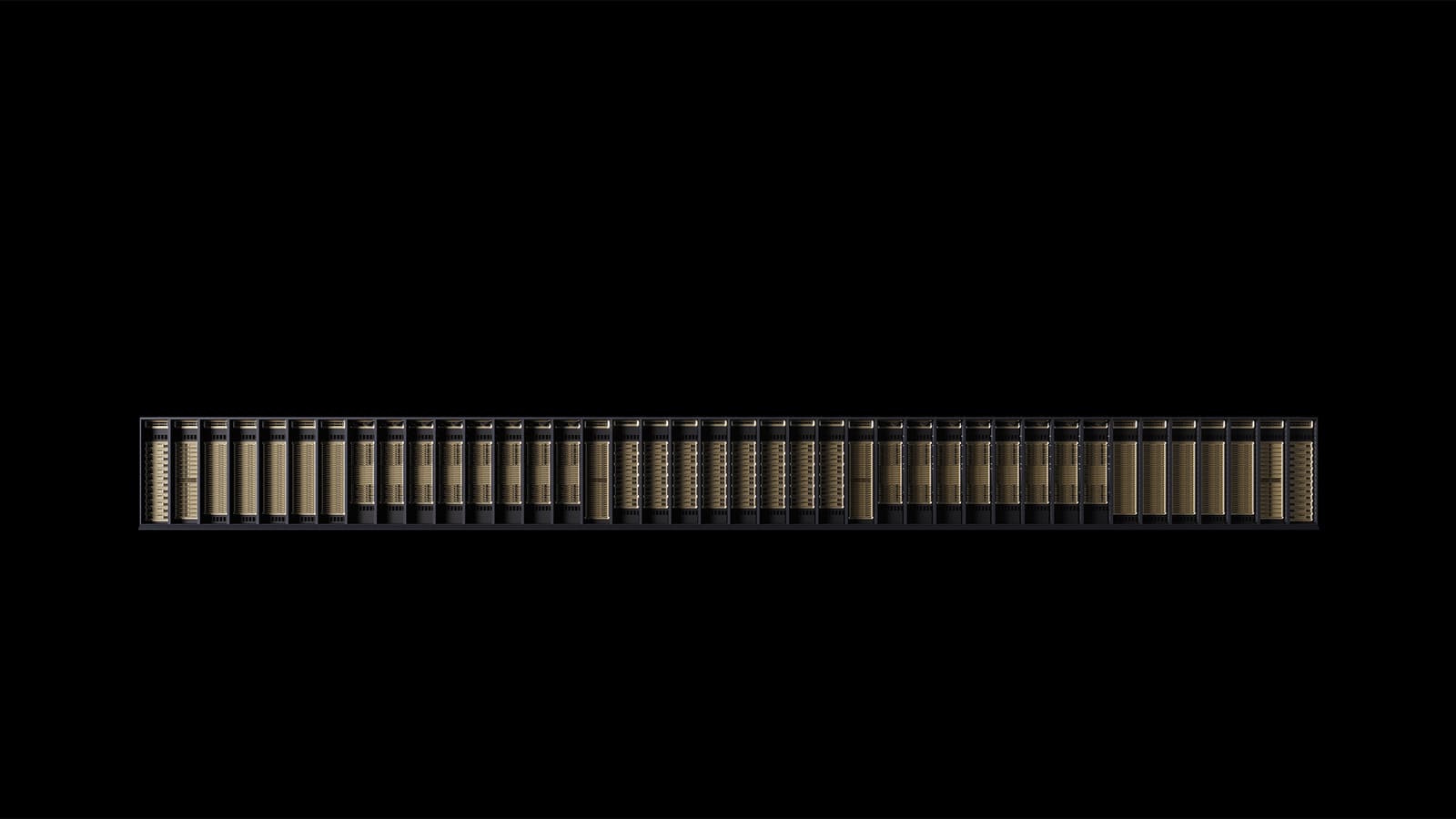

NVIDIA describes Vera Rubin as a leap from isolated servers to rack systems, and from there to a full factory-scale AI POD. This vision materializes through several components. The most prominent is the Vera Rubin NVL72, a rack housing 72 Rubin GPUs and 36 Vera CPUs connected via NVLink 6, ConnectX-9 SuperNICs, and BlueField-4 DPUs. The company claims this setup can train large mixture-of-experts models with only a quarter of the GPUs required by Blackwell, and that it delivers up to 10 times more inference throughput per watt at a tenth of the cost per token. These are NVIDIA’s official figures, not independent measurements, but they offer insight into the scale of the promise.
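To make those ratios concrete, here is a minimal sketch that applies NVIDIA's stated best-case figures to a made-up Blackwell baseline. The baseline numbers (1,024 GPUs, normalized throughput and cost of 1.0) and the function name are assumptions chosen for illustration; only the rack composition and the ratios come from the announcement, and none of this is a measurement.

```python
import math

# Rack composition as stated in the announcement.
NVL72_GPUS = 72
NVL72_CPUS = 36


def project_vendor_claims(baseline_gpus, baseline_tokens_per_watt, baseline_cost_per_token):
    """Apply NVIDIA's claimed best-case ratios to a hypothetical Blackwell baseline."""
    return {
        # "a quarter of the GPUs" for large mixture-of-experts training
        "gpus_for_moe_training": baseline_gpus / 4,
        # "up to 10 times more inference throughput per watt"
        "tokens_per_watt": baseline_tokens_per_watt * 10,
        # "a tenth of the cost per token"
        "cost_per_token": baseline_cost_per_token / 10,
    }


# Hypothetical baseline: a 1,024-GPU Blackwell job with normalized metrics.
projection = project_vendor_claims(
    baseline_gpus=1024,
    baseline_tokens_per_watt=1.0,
    baseline_cost_per_token=1.0,
)

# Whole NVL72 racks needed to host the projected GPU count.
racks_needed = math.ceil(projection["gpus_for_moe_training"] / NVL72_GPUS)

# GPU-to-CPU ratio inside one NVL72 rack.
gpus_per_cpu = NVL72_GPUS // NVL72_CPUS

print(projection, racks_needed, gpus_per_cpu)
```

Under these assumptions, a job that needed 1,024 Blackwell GPUs would need 256 Rubin GPUs, which rounds up to four NVL72 racks. Because every figure is an "up to" claim, the result is an upper bound on the improvement rather than an expected value.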

The second key element is the Vera CPU Rack. NVIDIA presents it as a dense, liquid-cooled infrastructure with 256 Vera CPUs, designed to host large-scale execution environments used by AI agents and reinforcement learning systems for testing, validation, and orchestration. This clearly indicates the target market: not just prompt-response models, but systems that iterate, explore paths, execute tools, and require a much more visible CPU backbone than in previous cycles.

Groq Deeply Integrates into Rubin’s Core

Perhaps the most symbolic announcement is the integration of NVIDIA Groq 3 LPX, a new inference rack with 256 LPUs, 128 GB of on-chip SRAM, and 640 TB/s of scale-up bandwidth. NVIDIA asserts that, when deployed alongside Vera Rubin NVL72, this rack accelerates decoding by letting GPUs and LPUs jointly compute each model layer for every output token. The company even promises up to 35 times more inference throughput per megawatt and up to 10 times more revenue opportunity for billion-parameter models. While this should be read as product positioning, it marks a clear shift: NVIDIA no longer just wants to sell GPUs; it aims to dominate specialized low-latency inference.

This nuance is particularly relevant in 2026. Agent inference demands long contexts, rapid responses, and far greater energy efficiency than traditional training. The addition of Groq’s hardware and the notion that Rubin isn’t a single uniform platform but configurable by workload phase reflect this. In other words, NVIDIA is designing a stack where different components carry out distinct tasks and cooperate within the same AI factory. This is probably the strongest indication so far that the company has moved beyond the “one GPU for everything” vision.

Storage and Networking Now Part of the Design, Not Add-ons

The platform also incorporates BlueField-4 STX, an “AI-native” storage rack designed to extend context memory at POD scale. NVIDIA ties this to the new DOCA Memos framework and claims that this layer can increase inference throughput up to fivefold by accelerating management of the KV cache for large models. Additionally, Spectrum-6 SPX Ethernet is included as the backbone for east-west traffic between racks, with configurations available for Spectrum-X Ethernet or Quantum-X800 InfiniBand. NVIDIA emphasizes the use of co-packaged optics to improve energy efficiency and resilience compared with traditional pluggable transceivers.
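Why KV cache storage deserves a rack of its own becomes clearer with a rough size estimate. The sketch below uses the standard formula for a transformer's KV cache (one key and one value vector per layer, per KV head, per token). The model dimensions are hypothetical and are not taken from any NVIDIA or Groq specification, and the code says nothing about how BlueField-4 STX or DOCA Memos actually manage the cache.

```python
def kv_cache_bytes(layers, kv_heads, head_dim, context_tokens, batch=1, bytes_per_value=2):
    """Estimate KV cache size for a decoder-only transformer.

    The leading factor of 2 accounts for storing both keys and values.
    bytes_per_value=2 assumes 16-bit (FP16/BF16) cache entries.
    """
    return 2 * layers * kv_heads * head_dim * context_tokens * batch * bytes_per_value


# Hypothetical model: 80 layers, 8 KV heads (grouped-query attention),
# head dimension 128, and a single one-million-token context.
size_bytes = kv_cache_bytes(layers=80, kv_heads=8, head_dim=128, context_tokens=1_000_000)
size_gb = size_bytes / 1e9

print(f"{size_gb:.2f} GB of KV cache for one sequence")
```

With these assumed dimensions, a single one-million-token sequence needs about 327.68 GB of KV cache, more than a single accelerator typically holds in local memory. Long-running agents that keep many such contexts alive multiply that figure, which is the pressure a dedicated context-memory tier is meant to relieve.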

All of this sends a clear message to the tech audience: the battle is no longer solely about the main accelerator. Contextual memory, KV cache storage, internal POD networking, and power management are now on the same strategic level as the GPU. NVIDIA is aiming to integrate all these value streams into a single reference design, even introducing DSX Max-Q and DSX Flex layers to better optimize power budgets and make AI factories more flexible toward the power grid. According to NVIDIA, DSX Max-Q could enable deploying 30% more AI infrastructure within a fixed-power data center, while DSX Flex helps turn these facilities into “grid-flexible” assets.
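The 30% figure is easier to judge as a power budget calculation. The sketch below assumes a hypothetical 10 MW facility and a hypothetical 100 kW provisioned per rack; neither number comes from NVIDIA. It simply shows what "30% more infrastructure at fixed power" would imply for the power provisioned per rack, without modeling how DSX Max-Q achieves it.

```python
# Hypothetical facility and rack figures, chosen only for illustration.
FACILITY_POWER_KW = 10_000   # fixed data center power budget (10 MW)
RACK_POWER_KW = 100          # power provisioned per rack without Max-Q

# NVIDIA's claim: up to 30% more AI infrastructure in the same power envelope.
CLAIMED_GAIN_PERCENT = 30

# Racks that fit when each one is provisioned at its full rated power.
baseline_racks = FACILITY_POWER_KW // RACK_POWER_KW

# Racks that would fit if the claim holds (integer math avoids float rounding).
maxq_racks = baseline_racks * (100 + CLAIMED_GAIN_PERCENT) // 100

# Average power each rack may be provisioned for the claim to hold.
implied_kw_per_rack = FACILITY_POWER_KW / maxq_racks

print(baseline_racks, maxq_racks, round(implied_kw_per_rack, 1))
```

Under these assumptions the facility goes from 100 to 130 racks, which only works if the average provisioned power per rack drops from 100 kW to roughly 76.9 kW, about 23% less. The claim is therefore as much about tighter power provisioning as it is about hardware efficiency.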

Availability, Ecosystem, and What Remains to Be Seen

NVIDIA states that Vera Rubin-based products will be available through partners starting in the second half of 2026. Cloud providers like AWS, Google Cloud, Microsoft Azure, and Oracle Cloud Infrastructure are mentioned, along with cloud partners such as CoreWeave, Crusoe, Lambda, Nebius, Nscale, and Together AI. Hardware manufacturers including Cisco, Dell, HPE, Lenovo, Supermicro, ASUS, Foxconn, Gigabyte, Inventec, Pegatron, QCT, Wistron, and Wiwynn are also listed. Meanwhile, frontier labs and AI developers like Anthropic, Meta, Mistral AI, and OpenAI are named as future platform users.

That doesn’t mean everything will roll out simultaneously or at the same maturity level. The most cautious reading is that what has been announced is an official platform plan, with availability set for the second half of 2026 and a broad ecosystem already aligned. The key uncertainties are actual performance outside demos, the real adoption of LPU racks, operational costs compared to Blackwell, and most importantly, whether the market accepts this shift from standalone GPU products to an entire AI factory as an economic unit. Still, GTC 2026 concludes with a compelling message: Vera Rubin is not merely NVIDIA’s next GPU; it’s the first platform where the company openly envisions the infrastructure of industrial-scale agentic AI.

Frequently Asked Questions

What exactly is NVIDIA Vera Rubin?

It’s NVIDIA’s new AI hardware platform announced at GTC 2026. It integrates seven chips (Vera CPU, Rubin GPU, NVLink 6, ConnectX-9, BlueField-4, Spectrum-6, and Groq 3 LPU) and five rack types designed for training, post-training, test-time scaling, and agent inference.

How does it differ from previous generations like Blackwell?

The main difference is that Vera Rubin is envisioned as a much more heterogeneous AI factory architecture. It no longer revolves solely around one GPU but instead comprises specialized racks for GPUs, CPUs, LPU-based inference, contextual storage, and high-speed networking, all working together as a single system.

What role does Groq play within Vera Rubin?

NVIDIA has integrated Groq 3 LPX as a low-latency inference rack. According to the company, its LPUs will work alongside Rubin GPUs to accelerate inference, particularly the decode phase of large, long-context models.

When will Vera Rubin systems be available?

NVIDIA indicates that products based on Vera Rubin will reach the market through partner channels in the second half of 2026. Among the announced partners are major cloud providers, server manufacturers, and leading AI labs.