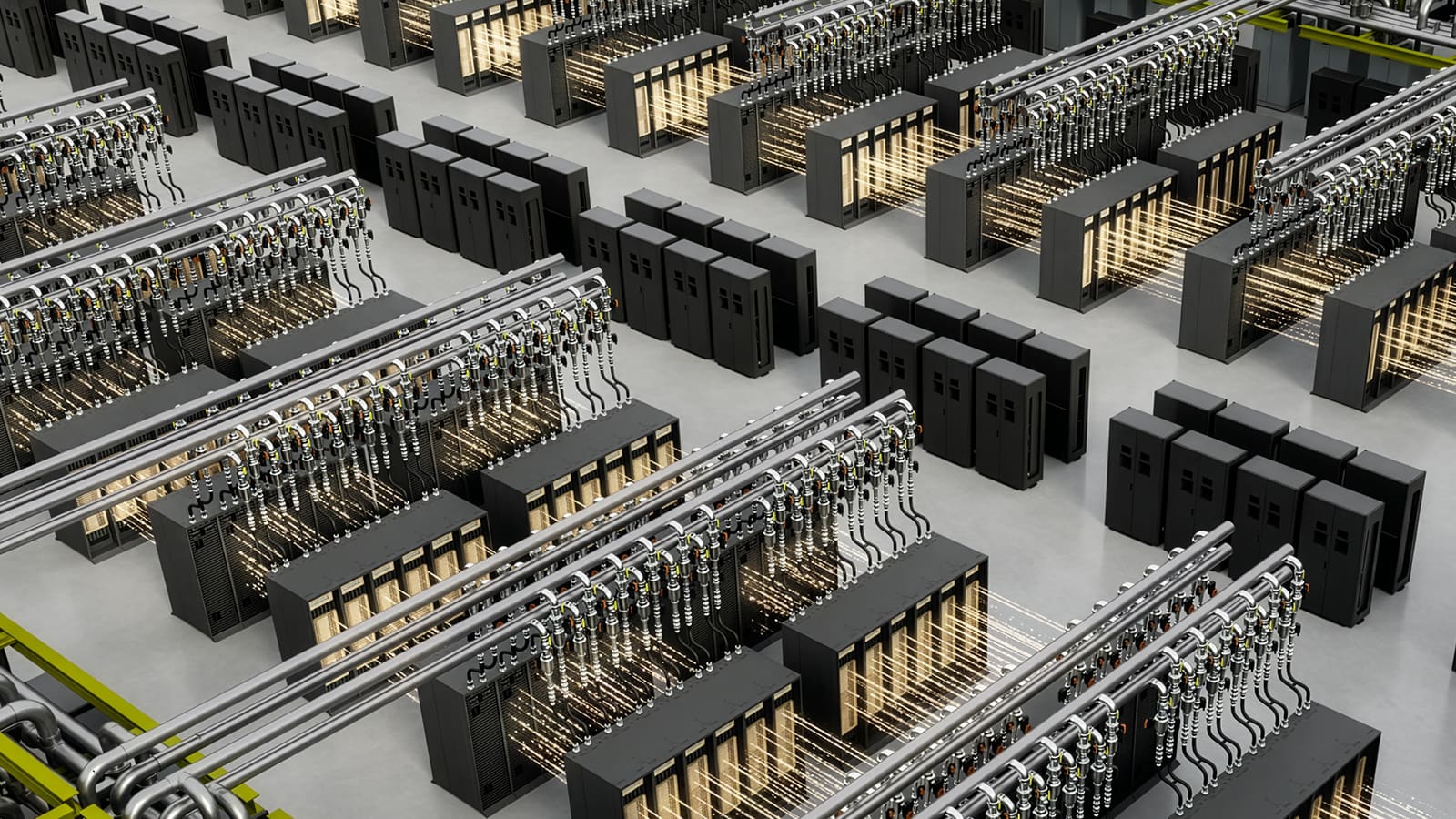

At GTC 2026, NVIDIA unveiled its new Vera Rubin DSX AI Factory reference design and announced the general availability of Omniverse DSX Blueprint. Together, the two are meant to give cloud operators, hyperscalers, colocation providers, and integrators a comprehensive recipe for designing, building, and operating large AI data centers in a far more industrialized way. The proposal is not limited to compute: it encompasses networking, storage, power, cooling, control, and pre-simulation using physically accurate digital twins.

The centerpiece is the Vera Rubin DSX reference design, which NVIDIA describes as a guide to deploying “co-designed” AI infrastructure optimized to maximize tokens per watt and accelerate the transition from planning to first production. According to the company, this reference design covers the entire stack of an AI factory, from compute and Spectrum-X Ethernet networking to storage, and includes best practices for power, cooling, and control systems, enabling partners to repeat large-scale deployments with less friction and lower integration risk.

In parallel, NVIDIA positions Omniverse DSX Blueprint as the layer for simulation and pre-validation. Now generally available at build.nvidia.com, the Blueprint allows operators to build digital twins of these facilities and test designs, operational policies, and hardware or load changes before implementing them physically. In practice, the environment lets users compare GPU configurations, visualize metrics such as energy consumption, operational efficiency, and total cost of ownership, and run thermal and electrical simulations within a single workflow.

This Blueprint is not just a conceptual demo. NVIDIA’s public documentation states that the repository includes digital geometry of a 50-acre site, a front-end application for interacting with digital twins, SimReady assets to speed up environment creation, and simulations of electrical load and thermal behavior in hot aisle configurations. It also supports running electrical failure scenarios and thermal ramp simulations linked to load distribution, which is particularly relevant in data centers where every megawatt and degree matters.

On the software side, NVIDIA structures DSX around four blocks. DSX Max-Q aims to maximize computational performance and tokens per watt within a fixed power budget; DSX Flex connects AI factories to grid services for load modulation and local generation coordination; DSX Exchange integrates signals between IT and OT across compute, network, power, and cooling; and DSX Sim validates the installation as a high-fidelity digital twin via the DSX Air platform and SimReady assets. NVIDIA’s own page claims that the Max-Q approach could deliver up to 30% more GPU performance at data center scale, though this figure should be viewed as an optimistic commercial estimate from the company.
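To make the "tokens per watt within a fixed power budget" idea concrete, here is a minimal Python sketch. It is not NVIDIA's Max-Q method or any DSX API: the `OperatingPoint` type, the `plan` and `best_point` helpers, and all power and throughput figures are hypothetical, chosen only to illustrate the arithmetic. It assumes each GPU can run at one of a few power caps and that the site can host as many GPUs as the budget allows.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OperatingPoint:
    """A hypothetical per-GPU power cap and the throughput it yields."""
    name: str
    watts_per_gpu: float
    tokens_per_sec_per_gpu: float


def plan(point: OperatingPoint, budget_watts: float):
    """Return (gpu_count, total tokens/s, tokens/s per watt) under the budget."""
    gpus = int(budget_watts // point.watts_per_gpu)
    throughput = gpus * point.tokens_per_sec_per_gpu
    power = gpus * point.watts_per_gpu
    # Tokens per second per watt is equivalent to tokens per joule.
    tokens_per_watt = throughput / power if power else 0.0
    return gpus, throughput, tokens_per_watt


def best_point(points, budget_watts: float) -> OperatingPoint:
    """Pick the operating point with the highest total throughput."""
    return max(points, key=lambda p: plan(p, budget_watts)[1])


# Illustrative numbers only, not measured values.
POINTS = [
    OperatingPoint("full", watts_per_gpu=1000.0, tokens_per_sec_per_gpu=100.0),
    OperatingPoint("capped", watts_per_gpu=800.0, tokens_per_sec_per_gpu=90.0),
]
BUDGET_WATTS = 1_000_000  # a fixed 1 MW IT power budget (assumption)

if __name__ == "__main__":
    for p in POINTS:
        gpus, tps, tpw = plan(p, BUDGET_WATTS)
        print(f"{p.name}: {gpus} GPUs, {tps:.0f} tokens/s, {tpw:.4f} tokens/J")
    print("best:", best_point(POINTS, BUDGET_WATTS).name)
```

With these made-up figures, capping each GPU at 800 W fits 1,250 GPUs into the budget instead of 1,000, so total throughput is higher even though each GPU is slower. The sketch ignores cooling overhead, networking, and the capital cost of the extra GPUs, all of which a real planning exercise would need to account for.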

The scope of the initiative is also reflected in the ecosystem that has joined it. NVIDIA cites Cadence, Dassault Systèmes, Eaton, Jacobs, Nscale, Phaidra, Procore, PTC, Schneider Electric, Siemens, Switch, Trane Technologies, and Vertiv as contributors to the reference design and blueprint — whether through platform integration, providing SimReady assets, or connecting design, construction, and operation software. In essence, NVIDIA seeks to turn its vision of the AI factory into a sort of common language spanning physical infrastructure, industrial software, and data center operation.

Some partners have already outlined how they will fit into this scheme. Schneider Electric has announced that its validation includes new power and cooling models for NVIDIA’s rack-scale architectures, supporting 480 VAC, higher supply temperatures in the cooling loop, and room designs that better separate GPU racks, networking, storage, and CPUs. Additionally, Schneider and AVEVA will integrate their digital twin and multi-domain simulation capabilities (covering electrical distribution, thermal dynamics, airflow, and controls) across the Omniverse DSX ecosystem.

Vertiv, for its part, will contribute simulation-ready digital assets for power and cooling, along with repeatable and validated infrastructure blocks aimed at accelerating deployments and reducing execution risk. The company frames this collaboration as an evolution of its converged infrastructure approach, where power, cooling, control, and services are designed as an interdependent system rather than separate components.

Another key element of NVIDIA’s move is energy. The company states that the primary bottleneck for new AI deployments is no longer just chips or civil works but access to power, citing over $300 billion in equipment delays and more than 200 GW of projects awaiting interconnection in the U.S. To address this, NVIDIA is working with Emerald AI, GE Vernova, Hitachi, and Siemens Energy to accelerate grid access and improve electrical system stability through dynamic demand control, joint modeling of grid and compute, and digital twin platforms for monitoring and failure prevention.

Overall, the announcement conveys a clear message: NVIDIA no longer intends to simply sell GPUs, networks, or DPUs separately. It aims to codify how to build a complete AI factory, from facility layout and cooling policies to thermal simulation, power grid interaction, and IT-OT orchestration. This approach aligns with how the company describes Vera Rubin DSX and builds on the earlier iteration of Omniverse DSX, introduced in October 2025 as an open blueprint for designing and operating gigawatt-scale AI factories validated at the Virginia AI Factory Research Center.

For the data center sector, this could mean a significant shift: the value proposition is no longer just about acquiring more GPUs before competitors but about getting them into production faster, at lower cost, with less overprovisioning and a reduced risk of integration issues across energy, cooling, network, and compute. NVIDIA summarizes this goal with terms like time to first production or time to revenue. In practical terms, the company is striving to make the AI data center look less like a handcrafted project and more like an industrial product: simulable, repeatable, and optimizable before the first rack is even installed.