Despite promises of unprecedented performance, the new GB300 AI servers based on Blackwell Ultra chips are attracting less interest than expected. Microsoft and other giants have reportedly reduced orders because of issues inherited from the GB200 generation.

Nvidia, a global leader in accelerated computing and artificial intelligence, is facing an unexpected setback: its new and ambitious GB300 NVL72 system, based on the revolutionary Nvidia Blackwell Ultra chips, is not convincing some of its major clients in the cloud and data center industry.

What are the new Blackwell Ultra chips?

Unveiled at GTC 2025, the Blackwell Ultra chips are presented by Nvidia as its largest generational leap since Hopper. They are designed specifically for the era of AI reasoning, in which language models and other deep learning systems not only generate text or images but also reason, plan, and respond in increasingly complex contexts.

These GPUs incorporate:

- Double the attention-layer acceleration of the original Blackwell GPUs (crucial for LLMs).

- 1.5 times more AI compute, measured in floating-point operations per second (FLOPS), than the original Blackwell GPUs.

- Up to 288 GB of HBM3e memory with higher bandwidth.

- ConnectX-8 SuperNIC with 800 Gb/s of network connectivity per GPU.

- 5th Generation NVIDIA NVLink for high-bandwidth GPU-to-GPU communication across the rack.

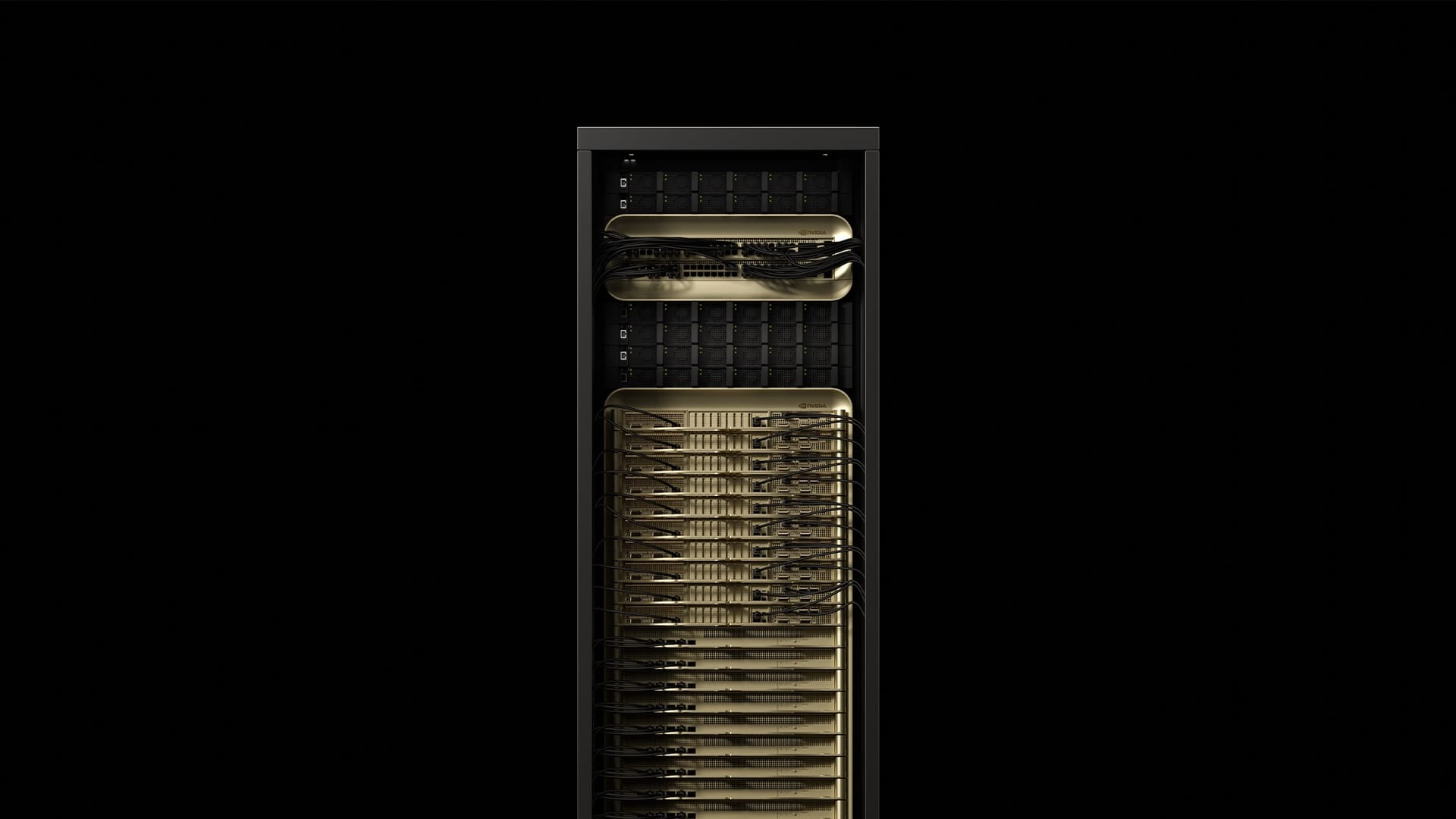

Mounted in configurations like the GB300 NVL72, these chips are meant to power what Nvidia calls AI factories, pairing the GPUs with Arm-based Grace CPUs in a liquid-cooled, rack-scale architecture. Nvidia has promised up to 50x better inference performance than its previous Hopper platform, with a 10x increase in tokens per second (TPS) per user and a 5x gain in energy efficiency (TPS per megawatt).
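The headline numbers above can be checked with some quick arithmetic. The following is a minimal sketch in Python, not an official Nvidia calculation. It rests on two assumptions: that the 50x figure is the product of the per-user and per-megawatt gains, and that "NVL72" means 72 GPUs per rack, each carrying the maximum 288 GB of HBM3e. The function names are hypothetical and used only for illustration.

```python
# Figures quoted in the article (Nvidia's claims versus Hopper).
TPS_PER_USER_GAIN = 10  # 10x tokens per second per user
TPS_PER_MW_GAIN = 5     # 5x tokens per second per megawatt

# Assumption: 72 GPUs per rack (from the "NVL72" name), 288 GB each.
GPUS_PER_RACK = 72
HBM_PER_GPU_GB = 288


def combined_gain(per_user: float, per_mw: float) -> float:
    """Assumed composition: the headline gain is the product of both factors."""
    return per_user * per_mw


def rack_hbm_tb(gpus: int, gb_per_gpu: int) -> float:
    """Total HBM per rack in decimal terabytes (1 TB = 1000 GB)."""
    return gpus * gb_per_gpu / 1000


if __name__ == "__main__":
    print(combined_gain(TPS_PER_USER_GAIN, TPS_PER_MW_GAIN))  # 50
    print(rack_hbm_tb(GPUS_PER_RACK, HBM_PER_GPU_GB))         # 20.736
```

Under these assumptions, the 50x claim is a composite of responsiveness and efficiency rather than a single benchmark result, and a fully populated rack would hold roughly 20.7 TB of GPU memory.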

Why aren’t they selling as Nvidia expected?

According to the Taiwanese outlet Ctee, several large cloud service providers, including Microsoft, are reportedly “discarding” or delaying orders for these new systems. The reason is not only the high cost but also the poor experience with the GB200 servers, which are also based on Blackwell and faced adoption challenges due to:

- Initial performance and integration issues, attributed to TSMC's advanced packaging.

- Long and complex installations that slow down deployments.

- Exclusive technical dependency on Nvidia for troubleshooting, which creates bottlenecks in maintenance and support.

- A still-immature ecosystem of software and optimization tools for fully exploiting the new architecture.

As a result, Nvidia reportedly plans to ship only 15,000 GB200 servers during 2025, a figure significantly lower than what Hopper achieved. This has undermined confidence in the GB300, whose mass production could be delayed until 2026 if demand does not improve.

Companies prioritize stability over innovation

Although Blackwell Ultra offers impressive specifications, customers seem to be opting for more stable and mature platforms, such as the HGX H100 and H200 servers, which still dominate inference and large-scale model training. These solutions, backed by years of optimization and support, carry lower operational risk in sensitive projects or those with strict availability requirements.

In contrast, the GB300 NVL72 is still in its early stages. Its installation complexity, high energy consumption, and reliance on proprietary ecosystems lead many companies to think twice before committing at large scale.

Conclusion: Is Nvidia being slowed by its own pace?

Nvidia has been the driving force behind the rise of generative AI. However, its ambitious bet on Blackwell Ultra and the GB300 seems to have moved faster than its partners are willing to follow for now. While no one doubts the technical potential of the new architecture, the market appears to be asking for maturity, stability, and support more than performance promises.

The company led by Jensen Huang will need to adjust its deployment strategy, improve post-sales support, and lower entry barriers if it wants Blackwell to succeed at the scale that Hopper once achieved. The future of accelerated computing depends as much on hardware as on the trust of the ecosystem that accompanies it.