NVIDIA has introduced Nemotron 3 Nano Omni, an open multimodal model designed to enable AI agents to reason over video, audio, images, documents, and text within a single system. The company’s main promise is clear: replace architectures built from multiple separate models with a single perception and reasoning layer that reduces latency, cost, and context loss.

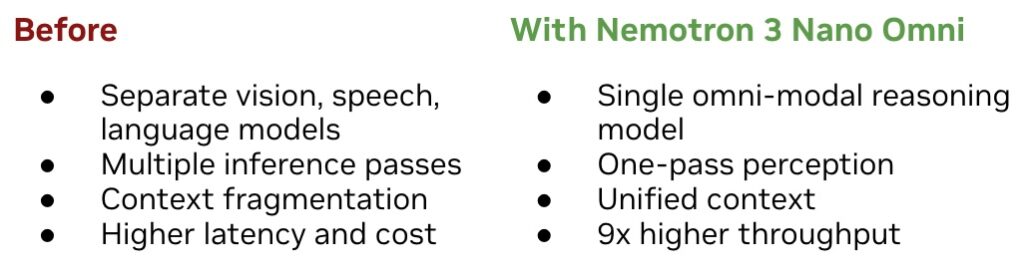

The launch addresses a practical challenge in enterprise AI. Many current systems use one model for vision, another for speech, yet another for language, and in some cases, additional components for documents, interfaces, or data extraction. This fragmentation requires multiple inference passes, increases costs, and can break the context between what is seen, heard, and read. NVIDIA claims that Nemotron 3 Nano Omni can deliver up to 9 times higher throughput than other open omni models with comparable interactivity.

An Omni Model for Seeing, Hearing, and Reasoning in a Single Pass

Nemotron 3 Nano Omni is based on a hybrid Mixture-of-Experts architecture with a 30B-3B parameter configuration (about 30 billion parameters in total, of which roughly 3 billion are active for any given token), featuring integrated vision and audio encoders. In practice, this allows an agent to process different input types without relying on a chain of specialized models passing information among themselves.

This can be critical in real enterprise applications. Support agents might need to analyze a screen recording, review call audio, read logs, and respond with a coherent explanation. A financial agent may need to interpret PDFs, spreadsheets, charts, screenshots, and voice notes. If each step is handled by a different model, latency increases and context can degrade.
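
The latency argument above can be sketched with simple arithmetic. The following is a minimal illustration, assuming a purely sequential pipeline in which each specialized model hands its output to the next. The stage names, the latency figures, and the handoff overhead are all invented for illustration and are not measurements of any real model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    name: str
    latency_ms: float  # hypothetical per-call inference latency


def chained_latency_ms(stages, handoff_ms=0.0):
    """Total latency when stages run one after another.

    Each handoff between two consecutive stages adds a fixed overhead
    (serialization, network hop, re-encoding of intermediate results).
    """
    if not stages:
        return 0.0
    handoffs = len(stages) - 1
    return sum(s.latency_ms for s in stages) + handoffs * handoff_ms


# Hypothetical four-model pipeline (numbers are illustrative only).
pipeline = [
    Stage("speech-to-text", 400.0),
    Stage("vision", 350.0),
    Stage("document parser", 300.0),
    Stage("language model", 600.0),
]
chained = chained_latency_ms(pipeline, handoff_ms=50.0)

# A single unified model has no handoffs, whatever its own latency is.
unified = chained_latency_ms([Stage("unified model", 900.0)], handoff_ms=50.0)

print(f"chained: {chained:.0f} ms, unified: {unified:.0f} ms")
```

The point of the sketch is structural rather than numerical: every extra stage adds both its own latency and a handoff, and each handoff is also a place where context can be lost.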

With Nemotron 3 Nano Omni, NVIDIA aims to unify this perception phase into a common model. The company describes it as a kind of “eyes and ears” for broader agent systems, capable of working alongside other Nemotron models like Nemotron 3 Super for frequent execution or Nemotron 3 Ultra for complex planning, as well as proprietary models from other providers.

The goal is not to replace every model in a workflow but to specialize one critical piece: fast multimodal understanding. For agents that interact with graphical interfaces, dense documents, or video, this capability can mean the difference between a useful demo and a system that is slow or costly to operate.

From Complex Documents to Computer Use

NVIDIA highlights three major application areas. The first is computer use, where the model can assist agents navigating graphical interfaces, interpreting screen content, and reasoning about application states over time. H Company, one of the early users, reports that its agents can interpret Full HD recordings faster, which is significant for desktop automation and computer workflows.

The second area is document intelligence. Nemotron 3 Nano Omni can interpret documents, tables, graphics, screenshots, and mixed inputs, maintaining a more coherent relationship between visual structure and text. This suits compliance tasks, financial analysis, contract review, internal processes, and corporate reporting, where data rarely appears as clean, ordered text.

The third is audio and video understanding. In customer support, research, monitoring, or training workflows, many scenarios combine what was said, what appeared on screen, and what was later documented. A unified multimodal model can keep these components in the same reasoning flow rather than producing disconnected summaries from each source.

Business interest is reflected in the companies NVIDIA mentions. Aible, Applied Scientific Intelligence, Eka Care, Foxconn, H Company, Palantir, and Pyler are already adopting the model, while Dell Technologies, DocuSign, Infosys, K-Dense, Lila, Oracle, and Zefr are evaluating it. Although the list is diverse, it points to specific use cases: corporate agents, document analysis, automation, healthcare, manufacturing, and knowledge workflows.

Open, Deployable, and Designed for Business Control

A key aspect of the announcement is the open nature of the model. NVIDIA states that Nemotron 3 Nano Omni is released with open weights, datasets, and training techniques, enabling organizations to customize, evaluate, and deploy it with greater control. For regulated companies or those with data sovereignty needs, this is as important as performance.

The model is available on Hugging Face, OpenRouter, and build.nvidia.com as an NVIDIA NIM microservice, and can also be deployed via cloud partners, inference platforms, or local systems. It can be customized for specific domains using NVIDIA NeMo. The company emphasizes that the architecture supports deployments ranging from on-premises systems such as DGX Spark or DGX Station to data centers and public clouds.
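
As a rough idea of what calling such a hosted model could look like, the sketch below builds a mixed text-and-image request in the OpenAI-style chat completions format, which OpenRouter and many hosted inference endpoints accept. This is an assumption about the interface, not something the announcement specifies: the model identifier is a hypothetical placeholder, `build_request` is an illustrative helper, and the exact fields supported for this model (especially for audio and video) should be checked against the provider's documentation. The code only constructs the payload and sends nothing.

```python
import base64

# Hypothetical placeholder; look up the real identifier in the provider's catalog.
MODEL_ID = "nvidia/nemotron-3-nano-omni"


def build_request(question: str, image_bytes: bytes, mime: str = "image/png") -> dict:
    """Build an OpenAI-style chat payload carrying text and one inline image."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "model": MODEL_ID,
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "text", "text": question},
                    {
                        "type": "image_url",
                        # The image travels inline as a base64 data URL.
                        "image_url": {"url": f"data:{mime};base64,{encoded}"},
                    },
                ],
            }
        ],
    }


# Placeholder bytes stand in for a real screenshot file.
payload = build_request("What error does this screenshot show?", b"not-a-real-image")
print(payload["messages"][0]["content"][0]["text"])
```

Sending the question and the screenshot in one message is what lets a single model relate the two, instead of passing an intermediate caption from a vision model to a language model.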

This approach addresses a growing concern. Many organizations want AI agents but cannot always send documents, videos, calls, or internal data to closed services outside their control. An open, deployable model within their own environment offers more flexibility to meet internal policies, regulatory requirements, or data localization strategies.

NVIDIA’s strategic intent is clear: beyond selling GPUs, investing in offerings like Nemotron, NIM, and NeMo strengthens a software layer that makes its accelerators more useful and easier to adopt in production. When enterprise agents are built on optimized models and microservices tied to NVIDIA’s platform, it becomes harder to separate hardware from software in purchasing decisions.

The claimed 9x throughput increase should be read as a vendor’s claim tied to specific scenarios and comparisons. Nonetheless, the problem it addresses is real. Multimodal agents need to see, hear, read, and act faster. If each interaction with a screen or document demands multiple chained models, operational costs can escalate rapidly.
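
To see why throughput matters for cost, consider the back-of-the-envelope relation between the two. The sketch below assumes a fixed hourly hardware cost and treats cost per task as that cost divided by tasks completed per hour. The dollar figure and the task rates are invented for illustration, and the factor of 9 is simply NVIDIA's "up to" figure taken at face value, not a verified result.

```python
def cost_per_task(hourly_cost: float, tasks_per_hour: float) -> float:
    """Cost of one task on hardware billed at a fixed hourly rate."""
    if tasks_per_hour <= 0:
        raise ValueError("tasks_per_hour must be positive")
    return hourly_cost / tasks_per_hour


HOURLY_COST = 4.0        # hypothetical cost of one GPU-hour
BASELINE_RATE = 1000.0   # hypothetical tasks per hour for a baseline setup
CLAIMED_SPEEDUP = 9.0    # vendor's "up to 9x" figure, taken at face value

baseline = cost_per_task(HOURLY_COST, BASELINE_RATE)
improved = cost_per_task(HOURLY_COST, BASELINE_RATE * CLAIMED_SPEEDUP)

print(f"baseline: ${baseline:.4f} per task, improved: ${improved:.6f} per task")
```

On the same hardware budget, a k-fold throughput gain divides cost per task by k, which is why the headline number matters more for continuous workloads than for occasional queries.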

Nemotron 3 Nano Omni hits this critical point: it’s not enough for a model to understand multiple formats; it must do so with sufficient speed, cost-efficiency, and control to support continuous enterprise use. AI agents become much less appealing if each step adds seconds or if inference costs soar with each document, video, or desktop session.

Frequently Asked Questions

What is NVIDIA Nemotron 3 Nano Omni?

It’s an open multimodal model from NVIDIA that combines understanding of text, images, video, and audio to serve as a perception and reasoning layer for AI agents.

What does it mean that it offers up to 9 times more throughput?

NVIDIA asserts that its unified architecture can process more tasks per unit time than other open omni models with similar interactivity, by avoiding multiple passes through separate models.

What are its intended use cases?

It is designed for agents that work with graphical interfaces, as well as for complex document analysis, audio and video comprehension, customer support, compliance, research, and multimodal enterprise workflows.

Where can it be used or deployed?

It is available on Hugging Face, OpenRouter, and build.nvidia.com as an NVIDIA NIM microservice, and can be deployed through cloud partners, inference platforms, and local systems compatible with NVIDIA infrastructure.

via: wccftech and NVIDIA blogs