NVIDIA showcased an idea at the San Francisco Game Developers Conference that has been gaining traction among large, distributed studios: moving part of game development from traditional physical hardware to centralized data center infrastructure. Its proposal revolves around NVIDIA RTX PRO Server, a platform based on the RTX PRO 6000 Blackwell Server Edition GPU and NVIDIA vGPU software, which aims to virtualize workstations, testing, AI workflows, and engineering tasks on a shared foundation.

This is not a minor change. Modern game development involves larger worlds, more complex production pipelines, and teams increasingly spread across offices, remote setups, external partners, and studios in different regions. In this context, relying on fixed GPU hardware tied to a single desk begins to show inefficiencies: workstations that sit underutilized while other teams wait for resources, work environments that differ between departments, and difficulty reproducing errors when hardware, drivers, and tools don’t exactly match. NVIDIA presents RTX PRO Server as a way to address these issues.

The company claims this approach would enable centralizing and virtualizing art, development, AI research, and QA tasks on the same GPU infrastructure within the data center, providing a workstation-class experience with greater scalability, resource utilization, and operational consistency across locations. This aligns with a broader market trend: it’s no longer just about buying powerful GPUs, but organizing them as shared, reassignable resources based on the studio’s real workloads.

From isolated workstations to shared GPU infrastructure

According to NVIDIA, the main shift is moving from scaling “workstation by workstation” to scaling via a centralized infrastructure. This would allow, for example, using the same GPU capacity overnight for model training, simulations, or automation, and reallocating it during the day for interactive development and content creation. From an efficiency standpoint, the message is clear: reduce idle capacity and increase internal elasticity without multiplying the number of physical machines in each department.
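The day and night reallocation described above can be illustrated with a minimal sketch. It is not NVIDIA's scheduler or any vGPU API; the working hours, the function names, and the two workload labels are assumptions chosen purely for illustration.

```python
from datetime import time

# Hypothetical working window; the article does not specify any hours.
WORKDAY_START = time(8, 0)
WORKDAY_END = time(20, 0)


def pool_for(now: time) -> str:
    """Return which workload class gets the shared GPU pool at a given time."""
    if WORKDAY_START <= now < WORKDAY_END:
        # Daytime: interactive development and content creation.
        return "interactive"
    # Overnight: model training, simulations, or automation.
    return "batch"


def reusable_hours(workday_hours: int) -> int:
    """Hours per day a desk-bound GPU would sit idle but a shared pool could reuse."""
    if not 0 <= workday_hours <= 24:
        raise ValueError("workday_hours must be between 0 and 24")
    return 24 - workday_hours
```

Under these assumed hours, `pool_for(time(2, 30))` returns `"batch"` and `reusable_hours(12)` returns `12`, which is the idle capacity the centralized model is meant to recover.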

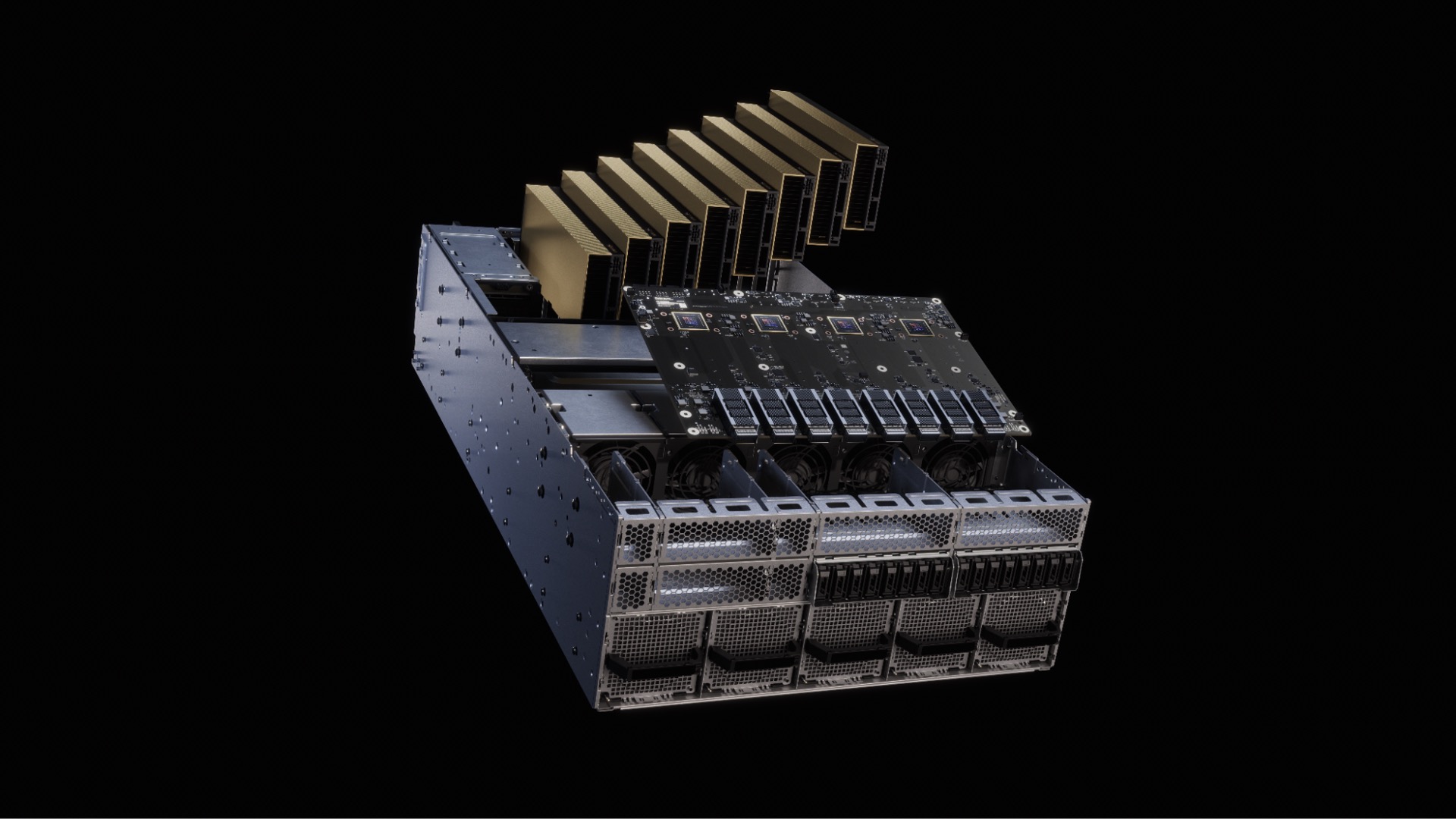

The hardware supporting this vision is the RTX PRO 6000 Blackwell Server Edition, a professional data center GPU with 96 GB of ECC GDDR7 memory, 24,064 CUDA cores, PCIe 5.0 support, up to 4 PFLOPS FP4 for AI, and configurable power consumption up to 600 W, according to NVIDIA’s specifications. It’s presented as a GPU capable of accelerating RTX graphics, AI workloads, rendering, simulation, and advanced analytics within a single environment.

For game studios, this opens interesting possibilities. NVIDIA’s own blog suggests that artists could work with virtual RTX stations for 3D modeling and generative workflows; developers would have consistent environments for programming and 3D development; AI researchers could reserve profiles with more memory for fine-tuning, inference, and agents; and QA teams could scale validation and performance testing using the same Blackwell architecture that powers the GeForce RTX 50 Series.

AI, QA, and development sharing the same backend

A key point of the announcement is that NVIDIA is selling RTX PRO Server not just as a graphics virtualization platform but as shared infrastructure for graphics and AI. The company emphasizes that AI is now part of daily workflows in studios, supporting programming, content creation, testing, and live operations. In practice, studios often end up with separate stacks: high-end workstations for graphics and dedicated servers or clusters for AI. NVIDIA’s proposal seeks to bridge that divide.

This is made possible through the combination of MIG (Multi-Instance GPU) and vGPU. NVIDIA explains that MIG allows partitioning a single GPU into isolated instances with dedicated memory, cache, and compute resources. The RTX PRO 6000 Blackwell Server Edition can support up to 4 MIG partitions of 24 GB each. In a game development context, NVIDIA adds that, with combined MIG + vGPU configurations, a single RTX PRO 6000 Blackwell can support up to 48 concurrent users, maximizing utilization while maintaining performance isolation.

This figure is notable but should be interpreted carefully. It doesn’t mean all 48 users will experience the same performance under any load; rather, it indicates that the platform can segment resources and assign capacity based on different profiles and needs. A light review session, for example, needs far less than intensive rendering, compilation, or simulation workloads. The strategic message is clear: NVIDIA wants studios to think of GPUs as shared enterprise resources rather than fixed boxes under individual desks.
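To put the 48-user figure in perspective, the arithmetic can be sketched as follows. The totals (96 GB, 4 MIG partitions, 48 users) come from NVIDIA's stated figures; the even split of users and memory across partitions is an assumption made here for illustration, since the announcement does not say how the 48 users are distributed.

```python
TOTAL_MEMORY_GB = 96  # stated GPU memory
MIG_PARTITIONS = 4    # stated maximum number of MIG partitions
MAX_USERS = 48        # stated MIG + vGPU ceiling


def equal_split(total_gb: int, partitions: int, users: int) -> tuple[float, int, float]:
    """Return (GB per partition, users per partition, GB per user).

    Assumes users and memory are divided evenly, which is a simplification.
    """
    if users % partitions != 0:
        raise ValueError("users must divide evenly across partitions")
    gb_per_partition = total_gb / partitions
    users_per_partition = users // partitions
    gb_per_user = gb_per_partition / users_per_partition
    return gb_per_partition, users_per_partition, gb_per_user


print(equal_split(TOTAL_MEMORY_GB, MIG_PARTITIONS, MAX_USERS))  # (24.0, 12, 2.0)
```

Under this even-split assumption, each of the 48 users would get about 2 GB of GPU memory, which suits light sessions far better than heavy rendering or fine-tuning. Fewer, larger profiles would be the trade-off for demanding work.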

An enterprise approach for increasingly distributed studios

NVIDIA highlights that the RTX PRO Server is designed for enterprise-grade data center operations. Studios can deploy virtual workstations on hypervisors and remote workstation platforms compatible with NVIDIA vGPU, fitting into existing IT infrastructure without requiring isolated setups or parallel environments. Large publishers already use vGPU for scaling centralized development infrastructure, making this a natural evolution.

This is important because the value of this approach extends beyond raw performance. It addresses concrete modern development challenges: external contractors, multi-site studios, hybrid teams, faster QA validation, and higher reproducibility of bugs under homogeneous configurations. In a landscape where game production is distributed, budget-conscious, and increasingly reliant on AI, standardizing environments can be as critical as hardware power.

Of course, the proposal raises questions. NVIDIA has presented its vision and technological foundation, but the real value for each studio will depend on factors such as total deployment cost, remote access latency, profile and permission management, integration with existing tools, and the capacity of IT teams to operate this infrastructure without adding excessive complexity. The idea is promising; execution will determine whether this becomes a new industry standard or remains a solution for large studios.

What is clear is that NVIDIA is advocating a broader shift: the future of game development isn’t just about faster local GPUs but involves GPU virtualization, shared AI resources, and workstations transformed into internal services. The RTX PRO Server aims to be the backbone that unites artists, programmers, researchers, and QA under a shared Blackwell infrastructure.

Frequently Asked Questions

What is NVIDIA RTX PRO Server?

A data center infrastructure platform based on RTX PRO 6000 Blackwell Server Edition GPUs and NVIDIA vGPU software, designed to virtualize workstations and run graphics and AI workloads in enterprise environments.

Why does NVIDIA want to virtualize game development?

To centralize GPU resources for artists, developers, QA teams, and AI researchers, improving hardware utilization, consistency across teams, and scalability in distributed studios.

What GPU does RTX PRO Server use?

It’s built around the RTX PRO 6000 Blackwell Server Edition, which features 96 GB of ECC GDDR7 memory and 24,064 CUDA cores, and supports RTX graphics, AI, rendering, and simulation workloads.

How many users can a single RTX PRO 6000 Blackwell support?

In combined MIG and vGPU configurations, NVIDIA states it can support up to 48 concurrent users, though actual performance varies with workload.

via: blogs.nvidia