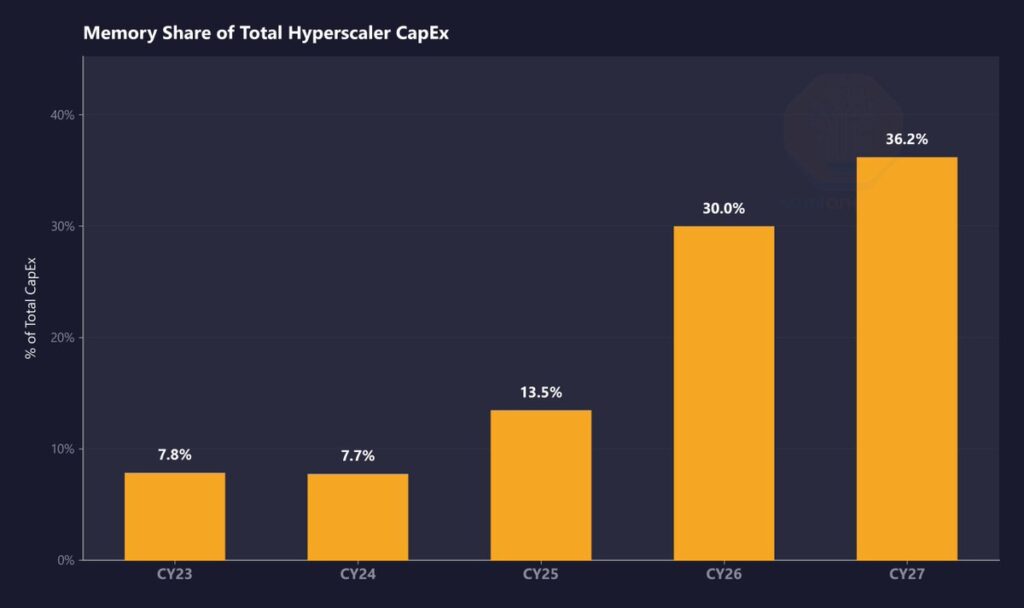

The next major pressure point for artificial intelligence is unlikely to be GPUs alone. Analysts and manufacturers are increasingly focused on memory, to the point that a new phase of the cycle appears to be starting: one in which DRAM, LPDDR5, and HBM account for a growing share of total system cost and of major cloud providers’ capex. SemiAnalysis has put forward the most aggressive thesis, estimating that memory will go from around 8% of total hyperscaler spending in 2023 and 2024 to nearly 30% in 2026, with even more pressure in 2027. This is that firm’s estimate, not official hyperscaler guidance, but it conveys the scale of the shift the market is anticipating.

This shift isn’t driven by a single variable. On one side, AI continues to accelerate data center capex: BloombergNEF projects that investment by major operators will approach $750 billion in 2026, while Dell’Oro forecasts that total sector capex could reach $1.7 trillion by 2030. On the other, a growing portion of that spend no longer goes to logic silicon alone, but also to the memory that accelerators, servers, and entire racks need to handle increasingly demanding training and inference workloads.

The clearest sign of this pressure comes from the memory market itself. TrendForce predicts that conventional DRAM contract prices will rise between 58% and 63% quarter-over-quarter in Q2 2026, following an already very tight first quarter. It attributes the escalation to capacity being reallocated toward AI-related and server applications while supply remains constrained. The firm also anticipates that shortages will persist throughout 2026 and likely beyond, as new production capacity will not reach sufficient volume until late 2027 or 2028.
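To put that range in concrete terms, the following minimal Python sketch applies a single quarter’s increase to a price index. The base value of 100 and the function name are illustrative assumptions, not TrendForce data; only the 58% and 63% bounds come from the forecast cited above.

```python
def price_after_increase(base_price: float, pct_increase: float) -> float:
    """Return the price after one quarter-over-quarter percentage increase."""
    return base_price * (1 + pct_increase / 100)


# Hypothetical index: the previous quarter's contract price is set to 100.
base_index = 100.0

# Lower and upper bounds of the forecast range cited in the text.
low = price_after_increase(base_index, 58)
high = price_after_increase(base_index, 63)

print(f"Index after one quarter: {low:.0f} to {high:.0f}")
print(f"Price multiple: {low / base_index:.2f}x to {high / base_index:.2f}x")
```

On this index, the forecast range corresponds to roughly 158 to 163, a multiple of about 1.6x in a single quarter rather than a doubling.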

HBM, the most visible bottleneck

If there’s a segment where memory inflation is most evident, it’s HBM. Micron confirmed in its Q1 fiscal 2026 results that it had already locked in prices and volumes for its entire calendar-2026 HBM supply, including HBM4. The company stated that its increased capex, at around $20 billion, would go largely toward expanding HBM capacity and advanced DRAM nodes, yet it still acknowledged that it could not satisfy all customer demand.

SK hynix is heading in the same direction. In its official outlook for 2026, the company describes the current moment as an “AI memory supercycle” driven by HBM3E and, in the next phase, by HBM4. While it does not provide figures as detailed as SemiAnalysis’s on memory’s exact weight in capex, it reinforces the idea of a market in which memory is no longer just another line in the bill of materials (BOM) but central to the business.

This directly impacts the cost of AI servers. As HBM’s weight within the system increases, so does the overall sensitivity to price hikes or supply restrictions. SemiAnalysis argues that this pressure is already beginning to influence the capex guidance for 2026 and that the repricing expected in 2027 has yet to be fully reflected in market estimates. Again, this is an interpretation from that firm, not an official industry consensus, but it is supported indirectly by the fact that manufacturers like Micron have already sold a substantial part of their HBM capacity in advance.
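The sensitivity argument can be sketched with first-order arithmetic: if memory accounts for a share s of a system’s cost and memory prices rise by a fraction p while everything else stays fixed, total system cost rises by roughly s × p. The shares and the price increase below are hypothetical values chosen for illustration, not figures from SemiAnalysis, Micron, or any vendor.

```python
def system_cost_increase(memory_share: float, memory_price_increase: float) -> float:
    """First-order rise in total system cost when only memory prices change.

    memory_share: memory's fraction of total system cost before the increase.
    memory_price_increase: fractional memory price rise (0.50 means +50%).
    Assumes all other costs and all quantities stay fixed.
    """
    return memory_share * memory_price_increase


# Hypothetical scenarios: same memory price rise, different memory weight.
hypothetical_price_increase = 0.50
results = {}
for hypothetical_share in (0.10, 0.30):
    rise = system_cost_increase(hypothetical_share, hypothetical_price_increase)
    results[hypothetical_share] = rise
    print(f"memory share {hypothetical_share:.0%} -> system cost +{rise:.1%}")
```

With the same price rise, tripling memory’s share of the system triples the effect on total cost, which is the mechanism behind the growing sensitivity described above.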

DRAM and LPDDR5: less prominent but equally critical

The public narrative has mainly focused on HBM because it’s the memory most closely associated with high-end AI accelerators. However, pressure is also mounting on general-purpose DRAM and variants like LPDDR5. TrendForce explains that manufacturers continue to prioritize higher-margin products linked to servers and enterprise applications, which tightens the conventional market. This helps explain why some analysts are starting to speak of memory “consuming” a growing part of cloud budgets, not just in cutting-edge systems but across the broader infrastructure that supports AI expansion.

SemiAnalysis’s estimate for LPDDR5 (contract prices more than tripling since Q1 2025 and open-market references above $10 per GB in 2026) should be read as that firm’s projection, not a figure verified by manufacturers. Still, it aligns with a broader trend: higher-performance, lower-power memory is gaining importance in architectures where efficiency and density are increasingly critical.

NVIDIA offsets part of the impact; the rest of the market, less so

One of the more interesting nuances in SemiAnalysis’s thesis is that not all buyers are equally exposed. The firm suggests that NVIDIA benefits from preferential DRAM supply terms that let it better absorb memory inflation within its total server costs, whereas other players are more exposed. This claim isn’t backed by public contracts or official statements from NVIDIA or its suppliers, so it should be treated as a market interpretation. However, it points to a plausible reality: in a supply-constrained environment, buying power and commercial priority matter as much as technical demand.

This may also explain why pressure varies among market participants. A vendor with massive scale, multi-year contracts, and supply priority can absorb an inflationary cycle better than a smaller company or one less able to secure preferential deals. In this sense, memory inflation not only raises server costs but could also shift competitive advantage within the market for AI accelerators and systems. This is a reasonable inference from market behavior and from how early HBM capacity has been sold out, even if no single official source states it explicitly.

The true message of the cycle

The core takeaway is clear: memory is no longer a secondary component in the BOM and is becoming one of the central levers of cost and infrastructure planning. AI demands not only more compute but also more bandwidth, higher capacity, and closer integration between processor and memory. When that demand meets rigid supply, with advanced nodes that are difficult to expand and HBM that is already pre-sold, the result is a market that puts pressure on capex, margins, and purchasing strategies all at once.

If this trend continues, 2026 and 2027 could be remembered not only as the years of massive AI data center deployment but also as the years when memory shifted from a supporting role to a key driver shaping the rules of the game.

Frequently Asked Questions

Why is HBM being discussed so much in AI infrastructure?

Because HBM offers significantly higher bandwidth than conventional DRAM and is crucial for feeding high-performance AI accelerators. Micron has already confirmed that its entire HBM supply for calendar 2026 is committed, which illustrates how tight demand has become.

Is DRAM really increasing that much in 2026?

TrendForce forecasts that conventional DRAM contract prices will rise by 58% to 63% quarter-over-quarter in Q2 2026, after an already very tight first quarter. That does not mean prices will double across all segments, but it does point to very strong market pressure.

Is it official that memory will represent 30% of hyperscaler capex?

No, this is not an official figure from hyperscalers. It is a SemiAnalysis estimate, publicly shared as part of its analysis of memory and AI cycles.

Does memory shortage only impact HBM?

No. While HBM is the most visible case, TrendForce also sees significant pressure in conventional DRAM and NAND due to capacity reallocation toward higher-margin AI and server applications.