Intel and SoftBank, through SAIMEMORY, have revealed new details about HB3DM, a 3D memory based on Z-Angle Memory (ZAM) that aims to become an alternative to traditional HBM for AI workloads and high-performance computing. While it isn’t a commercial memory ready to replace HBM4 in NVIDIA, AMD, or Intel accelerators tomorrow, it presents an interesting technical proposal at a time when memory bandwidth has become a major bottleneck in AI infrastructure.

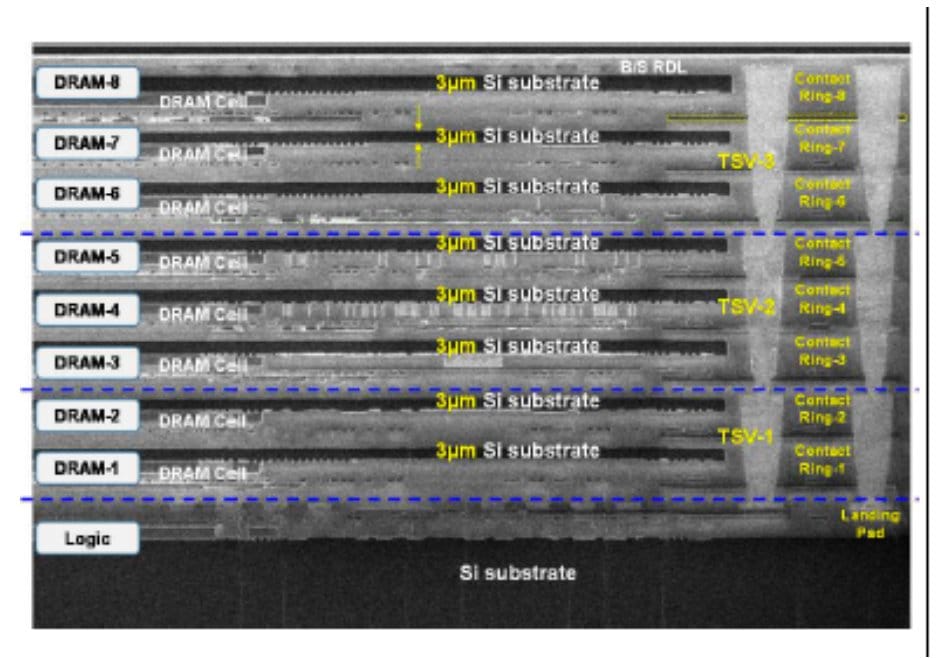

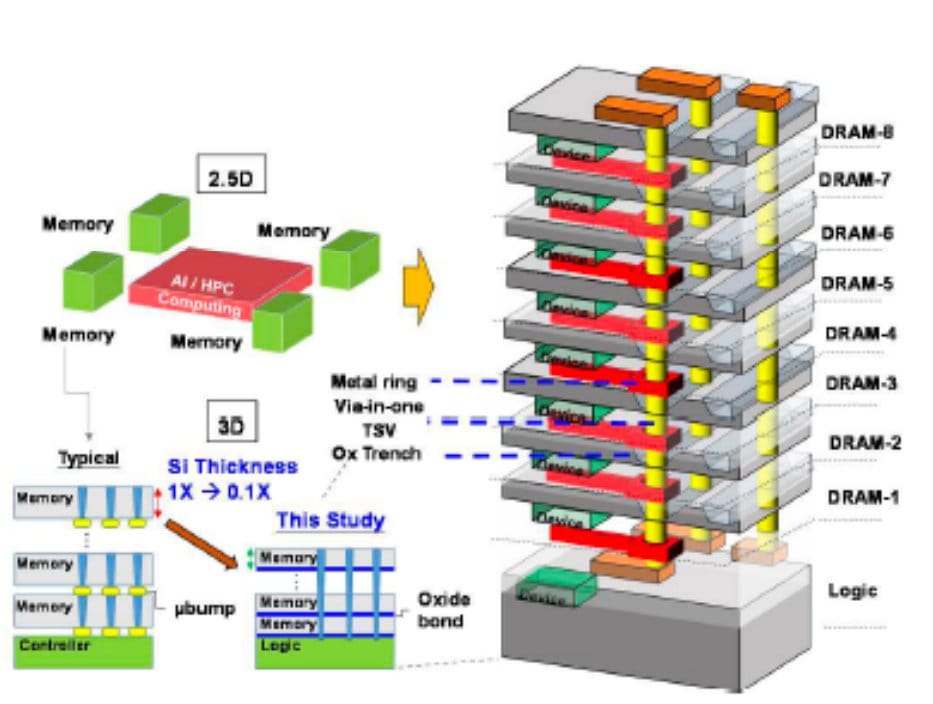

The information comes from material associated with a paper scheduled for the VLSI Symposium 2026, which will be held in June in Honolulu. According to the disclosed details, the first HB3DM demonstration is a nine-layer stack: a logic layer at the base and eight DRAM layers on top, connected via hybrid bonding and built on a very thin silicon substrate of just 3 µm per DRAM layer.

What does HB3DM offer compared to HBM

Current HBM already stacks DRAM dies vertically using through-silicon vias (TSVs), but HB3DM seeks to push this integration toward an even denser design. The logic layer at the base manages data movement, while the DRAM layers above it store data. The promise is clear: more bandwidth per unit area, lower power consumption, and an architecture better suited to AI accelerators.

In February, SoftBank announced that its subsidiary SAIMEMORY had signed an agreement with Intel to advance the commercialization of Z-Angle Memory, a next-generation memory technology designed for high capacity, high bandwidth, and low power consumption. The company aims to create prototypes in the 2027 fiscal year and reach commercialization by 2029.

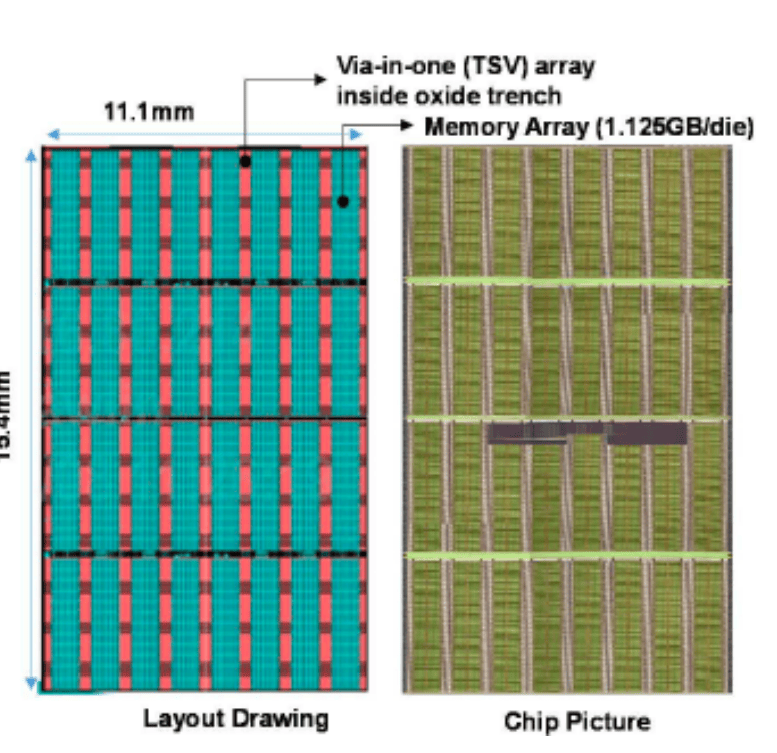

The known technical details of HB3DM reveal impressive figures. Each layer would incorporate around 13,700 TSVs, the die would have an area of 171 mm², and the bandwidth density would be approximately 0.25 Tb/s per mm². Multiplying that density by the die area gives roughly 42.75 Tb/s, or about 5.3 TB/s per stack. In terms of capacity, the proposal comes in at around 9-10 GB per stack, significantly below the 36 GB or 48 GB currently available with HBM4.
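The per-stack figures can be reproduced with simple arithmetic. The sketch below is a back-of-the-envelope check, not a model of the device: it multiplies the disclosed bandwidth density by the die area and converts bits to bytes. For capacity it assumes that only the eight DRAM layers hold data, at 1.125 GB each, a per-layer figure taken from the VLSI summary quoted at the end of this article and treated here as an assumption. The function and constant names are illustrative.

```python
# Back-of-the-envelope check of the disclosed HB3DM per-stack figures.
# Assumption: only the 8 DRAM layers store data, at 1.125 GB each.

BANDWIDTH_DENSITY_TBIT_PER_MM2 = 0.25  # disclosed: Tb/s per mm² (terabits, not terabytes)
DIE_AREA_MM2 = 171.0                   # disclosed die area
DRAM_LAYERS = 8                        # disclosed: 1 logic + 8 DRAM layers
GB_PER_LAYER = 1.125                   # from the VLSI summary; treated as an assumption


def stack_bandwidth_tbytes(density_tbit_per_mm2: float, area_mm2: float) -> float:
    """Stack bandwidth in TB/s: (Tb/s per mm²) x mm², divided by 8 bits per byte."""
    return density_tbit_per_mm2 * area_mm2 / 8


def stack_capacity_gb(layers: int, gb_per_layer: float) -> float:
    """Stack capacity in GB, assuming it scales linearly with DRAM layer count."""
    return layers * gb_per_layer


if __name__ == "__main__":
    bw = stack_bandwidth_tbytes(BANDWIDTH_DENSITY_TBIT_PER_MM2, DIE_AREA_MM2)
    cap = stack_capacity_gb(DRAM_LAYERS, GB_PER_LAYER)
    print(f"Estimated bandwidth: {bw:.2f} TB/s per stack")
    print(f"Estimated capacity:  {cap:.1f} GB per stack")
```

With these inputs the bandwidth works out to about 5.34 TB/s and the capacity to 9 GB, the lower end of the quoted 9-10 GB range.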

| Characteristic | HB3DM/ZAM (based on disclosed data) |
|---|---|
| Structure | 1 logic layer + 8 DRAM layers |
| Total layers | 9 |
| DRAM substrate thickness | 3 µm per layer |
| Vertical connection | Hybrid bonding |
| TSVs per layer | Approximately 13,700 |
| Estimated capacity | Around 9-10 GB per stack |
| Die area | 171 mm² |
| Bandwidth density | 0.25 Tb/s per mm² |
| Estimated bandwidth | Approximately 5.3 TB/s per stack |
| Prototype target | Fiscal year 2027 |
| Commercial target | Fiscal year 2029 |

Any comparison with HBM4 requires nuance. Micron, for example, describes its current-generation HBM4 as having a 2,048-bit interface, pin speeds exceeding 11 Gbps, and more than 2.8 TB/s per stack, and it has sampled 48 GB HBM4 in a 16-high configuration. Based on the published data, HB3DM targets higher bandwidth per stack (about 5.3 TB/s) but offers considerably less capacity. For AI workloads, both variables matter: moving data quickly isn't enough if there isn't sufficient memory close to the processor.
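The HBM4 per-stack figure follows from interface width times per-pin data rate. The sketch below computes that peak and then puts both memories on a bandwidth-per-gigabyte basis. It uses decimal units, treats 11 Gb/s as a floor since Micron quotes speeds exceeding it, pairs the 2.8 TB/s-class figure with the 48 GB sample capacity as the paragraph does, and takes the lower 9 GB end of the HB3DM range. All of these pairings are assumptions for illustration, and the function names are hypothetical.

```python
# Peak HBM4 interface bandwidth from bus width and per-pin rate, compared with
# the HB3DM per-stack estimate. Decimal units throughout (1 TB = 1000 GB).


def interface_bandwidth_tbytes(width_bits: int, gbps_per_pin: float) -> float:
    """Peak bandwidth in TB/s = width (bits) x per-pin rate (Gb/s) / 8 / 1000."""
    return width_bits * gbps_per_pin / 8 / 1000


def bandwidth_per_gb(bandwidth_tbytes: float, capacity_gb: float) -> float:
    """TB/s of bandwidth available per GB of capacity in one stack."""
    return bandwidth_tbytes / capacity_gb


if __name__ == "__main__":
    # Micron HBM4 figures; 11 Gb/s is a floor ("exceeding 11 Gbps").
    hbm4_bw = interface_bandwidth_tbytes(2048, 11.0)
    hbm4_cap = 48.0  # 16-high sample capacity (assumed pairing)

    # HB3DM: 0.25 Tb/s/mm² x 171 mm² / 8 bits per byte, ~9 GB per stack (assumed).
    hb3dm_bw = 0.25 * 171 / 8
    hb3dm_cap = 9.0

    print(f"HBM4:  {hbm4_bw:.2f} TB/s, {bandwidth_per_gb(hbm4_bw, hbm4_cap):.3f} TB/s per GB")
    print(f"HB3DM: {hb3dm_bw:.2f} TB/s, {bandwidth_per_gb(hb3dm_bw, hb3dm_cap):.3f} TB/s per GB")
    print(f"Per-stack bandwidth ratio (HB3DM / HBM4): {hb3dm_bw / hbm4_bw:.2f}x")
```

Under these assumptions HB3DM delivers roughly 1.9 times the per-stack bandwidth of the HBM4 baseline but about ten times more bandwidth per gigabyte, which is another way of saying it holds far less data for each unit of bandwidth it provides.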

A promising architecture but still early-stage

Intel and SAIMEMORY are not presenting a memory product ready for mass production. The official roadmap calls for prototypes first and commercialization later. That places HB3DM in a technical-validation phase rather than making it an immediate alternative for the accelerators arriving in 2026 and 2027.

This timeline matters because HBM is also advancing. SK hynix, Samsung, and Micron are already working on HBM4 and HBM4E, with higher capacity, higher bandwidth, and more sophisticated logic base dies. By the time ZAM reaches commercial maturity, the market may be looking toward HBM5 or custom variants for large-scale customers.

Nevertheless, the concept makes sense. Demand for high-bandwidth memory has skyrocketed because of AI. Language models, multimodal systems, agents, and inference workloads all need to move large volumes of data with low latency and low power consumption. The scarcity and cost of HBM have opened the door to alternative approaches, even if none of them will be easy to execute.

Intel’s role is especially interesting. The company exited the conventional memory business years ago but retains expertise in advanced packaging, interconnects, and die-stacking technologies. SoftBank explains that SAIMEMORY will leverage validated technologies and experience from Intel’s Next Generation DRAM Bonding initiative, developed within U.S. advanced memory programs.

That doesn’t necessarily mean Intel will manufacture conventional DRAM in its own fabs. For now, it’s unclear who will manufacture the underlying memory layers or how the supply chain will be organized. TrendForce notes that the timeline for commercializing these chips remains unknown, as does the identity of the base DRAM supplier. Still, Intel’s involvement suggests a potential indirect return to the domain of advanced memory.

More bandwidth, less capacity

The main appeal of HB3DM is its bandwidth. A stack capable of around 5.3 TB/s would be highly competitive for workloads in which the processor needs continuous data access. Its high bandwidth per unit of die area could also enable more compact accelerators, or systems with more memory channels in the same package area.

The main limitation is capacity. A stack of about 9-10 GB falls far short of current and future HBM4 figures. For training large models, capacity and bandwidth must grow together. If an accelerator needs many HB3DM stacks to match HBM4’s capacity, the bandwidth advantage might be offset by increased packaging complexity, cost, or overall power consumption.
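The capacity trade-off can be made concrete with a hypothetical sizing exercise. The sketch below assumes roughly 9 GB and 5.34 TB/s per HB3DM stack, treats stacks as whole units, and scales bandwidth and die area linearly with stack count. It deliberately ignores interposer routing, power delivery, thermals, and cost, which are exactly the overheads the paragraph warns about. The function names are illustrative, not from any published tool.

```python
import math

# Hypothetical sizing: how many HB3DM stacks match a capacity target, and what
# aggregate bandwidth and memory die area that implies. Linear scaling assumed.

HB3DM_GB = 9.0                 # assumed capacity per stack (lower end of 9-10 GB)
HB3DM_TBPS = 0.25 * 171 / 8    # disclosed density x area, bits -> bytes (~5.34 TB/s)
HB3DM_AREA_MM2 = 171.0         # disclosed die area per stack


def stacks_for_capacity(target_gb: float, gb_per_stack: float = HB3DM_GB) -> int:
    """Smallest whole number of stacks whose combined capacity meets target_gb."""
    return math.ceil(target_gb / gb_per_stack)


def sizing(target_gb: float) -> tuple[int, float, float]:
    """Return (stacks, aggregate TB/s, total die area in mm²) for target_gb."""
    n = stacks_for_capacity(target_gb)
    return n, n * HB3DM_TBPS, n * HB3DM_AREA_MM2


if __name__ == "__main__":
    for target in (36.0, 48.0):  # HBM4 per-stack capacities cited in the article
        n, bw, area = sizing(target)
        print(f"{target:.0f} GB -> {n} stacks, {bw:.1f} TB/s, {area:.0f} mm² of die area")
```

At 9 GB per stack, matching a single 48 GB HBM4 stack would take six HB3DM stacks and a little over 1,000 mm² of memory die area. The aggregate bandwidth looks enormous on paper, but every extra stack adds package area and interconnect, which is why bandwidth alone does not settle the comparison.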

Therefore, HB3DM should not be seen simply as “a replacement for HBM.” It could fit specific niches, such as inference accelerators, designs prioritizing extreme bandwidth, specialized HPC systems, or even proprietary products from Intel if the company chooses to differentiate from NVIDIA and AMD.

Thermal behavior will also need to be assessed. Densely stacked memory faces physical challenges in dissipating heat through its layers. While ZAM promises lower power consumption and structures designed to improve cooling, real-world prototypes will be needed to assess the system-level benefits accurately.

The potential to reduce power consumption by up to 40% compared with traditional HBM, as suggested by ZAM data, would be highly significant if proven in real products. In AI-focused data centers, every watt counts: it affects power bills, cooling, rack density, system stability, and deployment capacity.

A sign that memory will become the new battleground

HB3DM arrives at a moment when the industry is rediscovering a lesson system architects have emphasized for years: computation is futile if memory cannot keep pace. AI has turned this into a critical business challenge.

So far, public discourse has centered on GPUs, accelerators, and manufacturing nodes. But memory bandwidth bottlenecks are gaining increasing prominence. HBM is expensive, complex to produce, supply-limited, and demanding in packaging. An alternative that offers higher bandwidth, lower power, or better scalability will find a market.

The question is whether ZAM can move from a technical demonstration to a manufacturable, profitable product that meets the needs of leading accelerator designers. Many promising hardware architectures have failed to make the industrial leap. In memory, this leap demands performance, reliability, capacity, cost-efficiency, volume production, and years of validation with customers.

Intel and SoftBank have proposed an idea that deserves attention but still needs validation. HB3DM won’t displace HBM4 on bandwidth figures alone. To become more than a technical curiosity, it must demonstrate scalability, improved capacity, manufacturing feasibility, and acceptance by system integrators.

The interesting part is that the race is no longer just about making existing HBM faster. AI-driven demand is opening alternative paths in 3D memory, packaging, chiplets, interconnects, and power management. HB3DM is one of these paths. If successful, Intel could regain relevance in advanced memory. If not, it still confirms where the industry is heading: the next AI battlefield will also be fought in the micrometers separating each memory layer.

Frequently Asked Questions

What is HB3DM?

HB3DM is a 3D memory proposal based on Z-Angle Memory, developed within the collaboration between Intel and SAIMEMORY, a SoftBank subsidiary. It aims to provide high bandwidth and low power consumption for AI and HPC.

How does it differ from HBM?

HB3DM also uses vertical stacking but relies on ultra-fine 3D integration with 3 µm DRAM layers, hybrid bonding, and an underlying logic layer to handle data movement.

Is it already a commercial alternative to HBM4?

No. The technology is still in the technical demonstration phase. SoftBank talks about prototypes in FY2027 and commercialization in FY2029.

What are its main advantage and main limitation?

Its main advantage is the estimated bandwidth of about 5.3 TB/s per stack. Its main limitation is its initial capacity, around 9-10 GB per stack, which is below current HBM4 figures.

News from @Intel and @SoftBank SAIMEMORY from @VLSI_2026

Paper T17.5: First demo of HB3DM

➡️ 9 layer, 3 micron per stack

➡️ 1 logic + 8 DRAM layers,

➡️ 13.7k TSVs/layer with hybrid bonding

➡️ 1.125 GB/layer, so 10 GB per stack

➡️ 0.25 Tb/sec/mm2 bandwidth

➡️ 171 mm2 die, so 10… pic.twitter.com/q79qV4sRdT

Posted by 𝐷𝑟. 𝐶𝑙𝑎𝑖𝑟𝑒 𝐶𝑟𝑒𝑤𝑦 (@IanCutress), April 29, 2026