Artificial Intelligence is no longer just changing how applications are programmed, how customer service is handled or how content is reviewed. It is also beginning to redefine legal boundaries surrounding labor automation. A court in Hangzhou has ruled in favor of an employee who was dismissed after his company claimed his position could be replaced by AI, a decision made at a time when many tech firms are employing generative models and agents to cut down on repetitive human tasks.

This case does not turn China into a country where layoffs due to technological change are impossible. The more accurate reading is narrower: a company cannot frame the adoption of AI as an external, unforeseen, and inevitable cause that, on its own, justifies wage reductions or contract termination. For the tech sector, the ruling introduces an uncomfortable but necessary idea: automation is a business decision, and its costs cannot always fall solely on the employee.

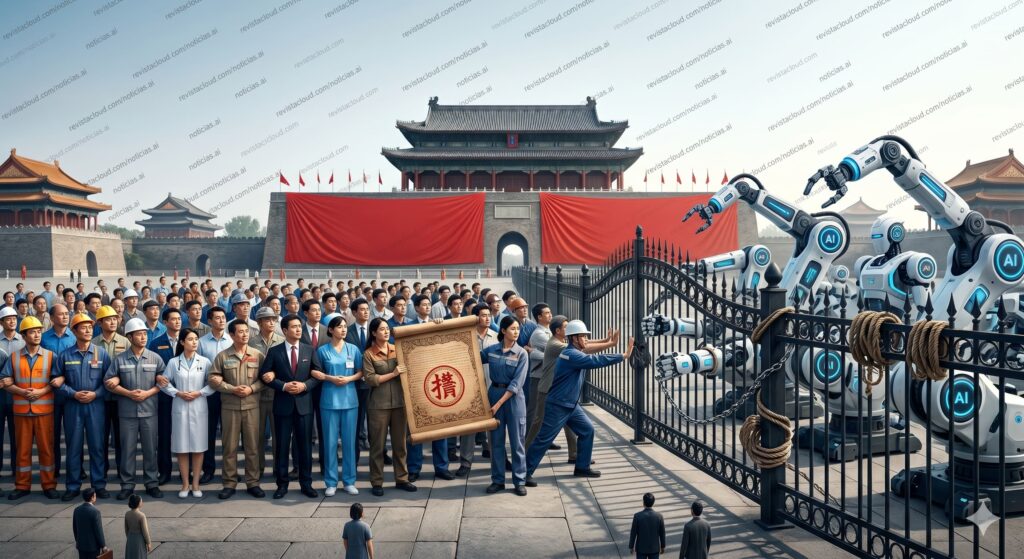

The Zhou Case: When Humans Train Their Replacements

The worker, identified as Zhou, was 35 years old and employed at a tech company in Hangzhou performing quality control tasks on AI models. His role involved reviewing interactions between users and AI systems, checking response quality, filtering errors, and flagging content that might violate internal policies or legal standards. In other words, he performed one of those human tasks that have been essential in improving products based on generative models.

The company argued that the project Zhou was working on had been affected by the evolution of AI and proposed reassigning him to a lower position, with his salary cut from 25,000 to 15,000 yuan per month, a 40% reduction. Zhou rejected the change. The company ultimately terminated his contract, which led to labor arbitration, a first-instance court ruling, and an appeal. Zhou prevailed at every stage.

The court found that the dismissal was unlawful and upheld compensation of more than 260,000 yuan. The key lies in the interpretation of Chinese labor law: the company tried to frame automation as a “substantial change in objective circumstances” that made the contract impossible to maintain, but the judges rejected this argument.

This reasoning is relevant for any company deploying AI in internal operations. Introducing a model to reduce costs, improve productivity, or replace part of a function is not comparable to natural disasters, unexpected regulatory changes, or force majeure—it’s a business choice. It may be legitimate and even necessary from a competitive standpoint, but it does not automatically make the human role dispensable without safeguards.

Automation as a Business Architecture Decision

The Hangzhou ruling aligns with a reality well-known to tech companies: AI is being integrated into workflows previously dependent on human operators—data review, content moderation, technical support, ticket classification, report generation, programming, QA, translation, documentation, and incident analysis.

Often, these systems do not appear out of nowhere. They are trained, tuned, and evaluated with human effort. Employees document processes, correct outputs, create examples, label data, detect failures, and help transform ambiguous tasks into workflows that models can replicate. The paradox is clear: part of the workforce contributes to creating the system that later may be used to justify their displacement.

From a tech perspective, Zhou’s case should also be viewed through the lens of AI governance. Automating a role isn’t just about connecting an API, deploying an agent, or integrating a model into an internal dashboard. It involves redesigning processes, shifting responsibilities, changing performance metrics, and deciding what happens to the people who supported that workflow before automation.

This is where friction arises. Many companies promote AI as a productivity booster but manage its implementation as a form of labor substitution. They talk about “co-pilots” while planning layoffs. They ask their teams to document tasks for system improvement but don’t always provide training, internal mobility, or participation in the value created. The Chinese ruling doesn’t halt technological progress but reminds us that efficiency gains do not eliminate labor obligations.

A Signal for HR, Product, and Compliance Departments

So far, much of the AI and employment discussion has focused on algorithmic biases, workplace surveillance, or the use of automated systems in recruitment. While these issues matter, China’s case opens another perspective: what happens when a company uses AI as an operational basis to eliminate a specific position.

The court’s response offers a practical doctrine. First, companies must justify restructuring with more than just “AI can do it now.” Second, any change in roles or pay must be reasonable and negotiated. Third, training and internal reassignments should be considered before passing the entire cost onto the employee. Fourth, a pay cut cannot be disguised as technological modernization if it is, in fact, a form of pressure to push employees out.

For HR and compliance teams, this means automation projects should include labor impact assessments—not just ROI calculations. If a company deploys agents for customer service, QA, or support, it needs to clearly define which tasks are eliminated, which are altered, what skills need retraining, what metrics are applied, and what alternatives are available.
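The questions in the previous paragraph can be kept as a structured record alongside the ROI case. The following is only a minimal sketch of what such a record might look like; `TaskImpact`, `LaborImpactAssessment`, and `open_questions` are hypothetical names invented for illustration, not part of the ruling or of any standard framework.

```python
from dataclasses import dataclass, field


@dataclass
class TaskImpact:
    """One task in a workflow and what automation does to it (hypothetical schema)."""
    name: str
    outcome: str  # "eliminated", "altered", or "unchanged"
    retraining_needed: list[str] = field(default_factory=list)


@dataclass
class LaborImpactAssessment:
    """Hypothetical record reviewed together with the ROI case of an automation project."""
    role: str
    tasks: list[TaskImpact]
    metrics: list[str] = field(default_factory=list)
    alternatives_offered: list[str] = field(default_factory=list)

    def open_questions(self) -> list[str]:
        """Return the gaps that should be closed before the rollout is approved."""
        gaps = []
        if not self.tasks:
            gaps.append("no task-level breakdown")
        for task in self.tasks:
            # An altered task changes what the person does, so skills must be named.
            if task.outcome == "altered" and not task.retraining_needed:
                gaps.append(f"no retraining defined for altered task: {task.name}")
        if not self.metrics:
            gaps.append("no performance metrics defined")
        eliminated = any(t.outcome == "eliminated" for t in self.tasks)
        if eliminated and not self.alternatives_offered:
            gaps.append("tasks eliminated but no alternatives offered")
        return gaps
```

An assessment with an empty `open_questions()` list does not make a restructuring lawful on its own; it only shows that the questions were asked and answered before the deployment.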

In organizations with significant AI exposure, this governance layer will be as critical as model safety or data protection. A system may be technically sound but still cause labor disputes if its deployment is used unilaterally to degrade conditions. The risk isn’t only in individual lawsuits; it’s also in eroding trust among teams who feel that collaborating with automation accelerates their own removal.

China, the U.S., and Europe: Three Different Approaches

The international picture varies widely. In the U.S., much of the labor market operates under “at-will” employment, which allows dismissal without cause as long as protections against discrimination and illegal retaliation are respected. No federal law outright prohibits replacing workers with AI tools.

Europe takes a different route but has not fully addressed this issue either. The AI Act classifies certain employment-related AI systems, such as those used in hiring, worker management, or access to self-employment, as high-risk, requiring controls, documentation, risk management, and human oversight. However, concrete legal protection against automation-driven dismissal still depends largely on national employment law, collective bargaining, and judicial interpretation.

China, at least in cases like Zhou’s and similar ones in Beijing and Guangzhou, appears to draw a line based on traditional labor law principles. AI is not considered an external, objective cause by itself. It’s a technology the company chooses to adopt for competition, cost savings, or process reorganization. Therefore, the company must bear part of the transition costs.

This perspective could influence the market. If courts uphold this interpretation, Chinese companies might be more incentivized to develop retraining, reallocation, and transition plans before automating roles. It could also mean internal AI projects would need to justify their organizational impact more thoroughly—calculating adaptation costs, compensation, and change management rather than just labeling automation as immediate payroll savings.

The True Cost of Replacing People with AI

The case also challenges the common assumption that AI is always cheaper than human labor. For simple, low-scale tasks, it might seem so. But real-world deployments involve less visible costs: infrastructure, licenses, token consumption, integration, human supervision, evaluation, cybersecurity, regulatory compliance, prompt maintenance, audits, error correction, and accountability to clients.

Furthermore, many AI systems do not eliminate human work entirely—they shift it. Part of the work disappears, some moves into oversight, some requires intervention for exceptions, and some becomes system maintenance. Overly rapid cuts can lead to the loss of operational knowledge just when it is most needed to validate that automation functions correctly.
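The comparison can be made explicit with simple arithmetic. The sketch below is an illustration under stated assumptions, not a costing method from the ruling: `net_monthly_saving` and its parameters are hypothetical, and the caller has to supply real figures for each cost line.

```python
def net_monthly_saving(
    payroll_removed: float,
    running_costs: dict[str, float],
    residual_human_hours: float,
    hourly_rate: float,
) -> float:
    """Payroll removed minus recurring AI costs and the human work that remains.

    running_costs maps a cost line (for example "infrastructure", "licenses",
    "token_consumption") to its monthly amount. All names are hypothetical.
    """
    ai_cost = sum(running_costs.values())
    # Oversight, exception handling, and maintenance do not disappear with the role.
    residual_cost = residual_human_hours * hourly_rate
    return payroll_removed - ai_cost - residual_cost
```

A negative result means the automation costs more each month than the salary it replaced, before counting one-off items such as integration work or severance.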

For tech companies, the clear lesson is: AI shouldn’t be deployed as a cheap substitute without serious process redesign. When properly implemented, it can free teams from repetitive tasks, improve response times, and enhance quality. Misused, it can degrade working conditions, erode internal trust, and result in fragile systems overly dependent on automation that nobody fully understands.

The Hangzhou ruling doesn’t stop the arrival of agents, copilots, and automated flows. But it does pose an essential question: who captures the value created by AI-driven productivity, and who bears the costs of transition? For years, the implicit answer has been that companies reap the savings, while workers assume the risk. This case suggests that, at least in some courts, that answer is no longer sufficient.

The next phase of AI in organizations will be as much about organizational, legal, and social adaptation as it is about technology. Models will improve, agents will handle more tasks, and many functions will evolve. But if automation is built on workers who train systems only to be discarded later without alternatives, the issue will not be solely efficiency; it will be legitimacy.

Frequently Asked Questions

Has China banned layoffs due to Artificial Intelligence?

Not broadly. What has occurred is that Chinese courts have deemed it illegal to use the adoption of AI as the sole justification for demotion, salary reduction, or dismissal without a sufficient objective cause.

What exactly did Zhou's employer do?

The company proposed moving Zhou, who performed quality control on AI models, to a lower position with a salary cut from 25,000 to 15,000 yuan per month. He refused, was dismissed, and the courts ruled in his favor.

What does this mean for tech companies?

Automation projects should be accompanied by labor impact analyses, negotiations, training, reasonable reassignments, and documentation. AI should not be treated as an automatic justification for cutting staff.

Could a similar doctrine apply in Europe?

It depends on each country. The EU regulates certain high-risk AI uses in employment, but protection against automation-driven dismissal will largely be governed by national labor laws, collective bargaining, and judicial rulings.