Amazon is ready to take the race for AI infrastructure to a new level. According to recent reports, the company plans to raise its capital expenditure (capex) to $200 billion in 2026, primarily to expand AWS data centers, strengthen its networks, and continue investing in proprietary AI chips. The move reflects an increasingly uncomfortable reality for the sector: in the cloud, the main bottleneck is no longer software alone, but the physical capacity available.

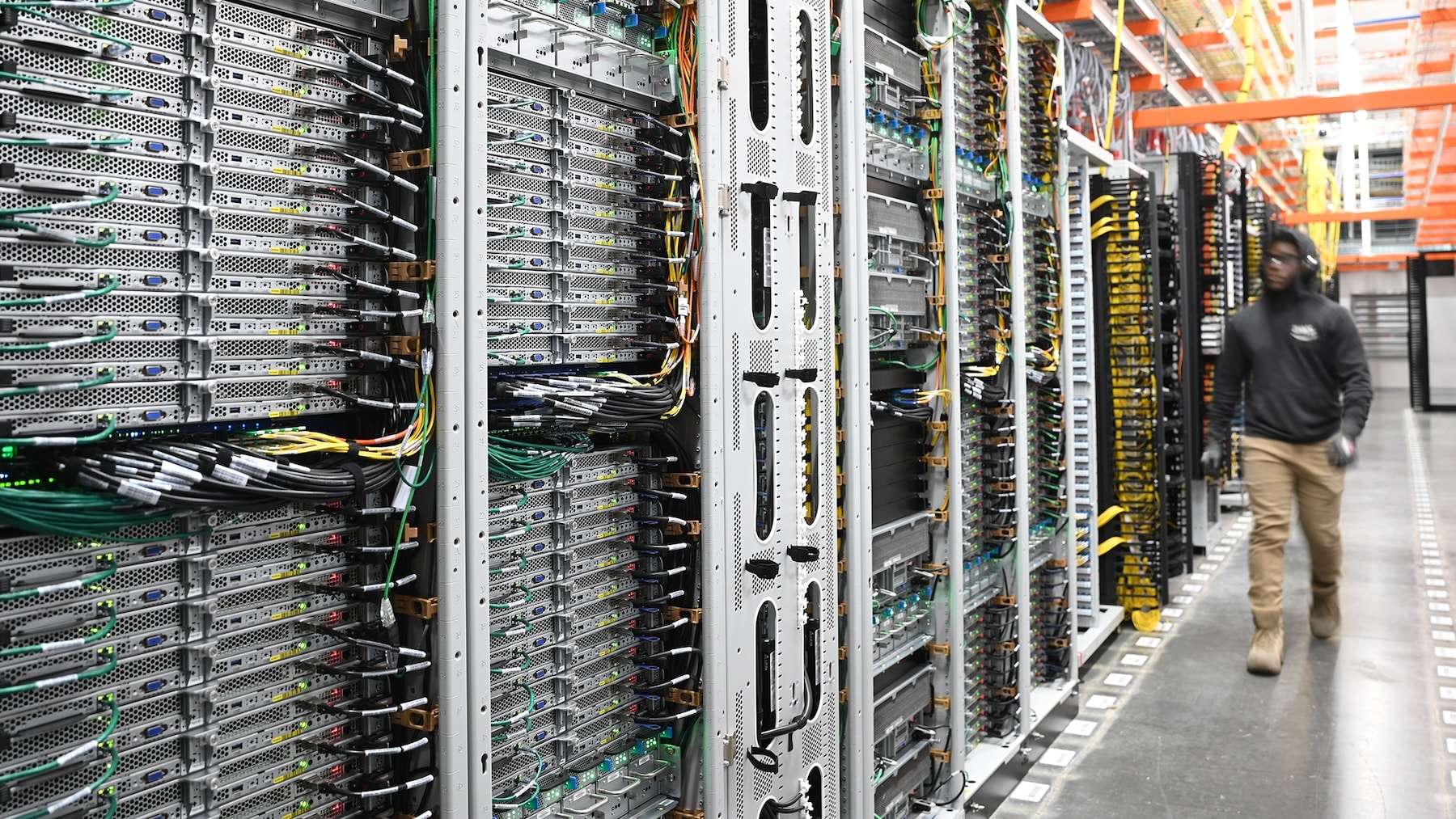

The message from Amazon is clear. Companies are moving from pilot projects to running AI in production, and that leap multiplies demand for compute, storage, networking and, above all, energy. It’s not just about “having GPUs”: it takes entire racks, cooling, fiber, switching equipment, electricity contracts, and long-term planning measured in years. In this scenario, Amazon is betting on building ahead of demand so that capacity does not become the limiting factor.

From $131 billion to $200 billion: capex as a thermometer of AI fever

Reuters puts the starting point at $131 billion in capex in 2025, rising to $200 billion in 2026, an increase of more than 50%. This acceleration explains why Wall Street reacts to every investment announcement with a mix of fascination and nervousness: the market demands growth but also expects spending to translate into sustainable revenue.
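As a quick check of the arithmetic, the short Python sketch below computes the percentage change from the two capex figures reported above. The helper name `pct_change` is our own; the inputs are the reported estimates in billions of dollars.

```python
def pct_change(old: float, new: float) -> float:
    """Percentage change from an old value to a new one."""
    return (new - old) / old * 100

capex_2025 = 131.0  # $ billion, 2025 figure cited by Reuters
capex_2026 = 200.0  # $ billion, projected for 2026

change = pct_change(capex_2025, capex_2026)
print(f"Capex change 2025 -> 2026: +{change:.1f}%")  # prints: Capex change 2025 -> 2026: +52.7%
```

The result, roughly +52.7%, is consistent with the “more than 50%” increase described above.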

In the investor call cited by Reuters, Amazon CEO Andy Jassy argued that AWS continues to grow strongly and stressed an important detail: comparing growth percentages without considering the size of the base can distort the picture. In the last reported quarter, AWS revenue rose 24% year over year to $35.6 billion, while Google Cloud grew faster in percentage terms from a smaller base, and Azure maintained a high pace. In other words, AWS is not the fastest-growing provider in percentage terms, but it is growing from a much larger base.
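To make the base effect concrete, the sketch below converts a year-over-year growth rate into the dollar increase it implies. The AWS inputs are the reported figures above; the second provider is a purely hypothetical example with made-up numbers, not Google Cloud’s or Azure’s actual results. The helper name `absolute_gain` is our own.

```python
def absolute_gain(current_revenue: float, yoy_growth_pct: float) -> float:
    """Dollar increase implied by current-period revenue and its YoY growth rate."""
    prior_revenue = current_revenue / (1 + yoy_growth_pct / 100)
    return current_revenue - prior_revenue

# AWS figures cited in the article ($ billion, one quarter).
aws_gain = absolute_gain(35.6, 24.0)

# HYPOTHETICAL smaller provider, for illustration only (not reported figures).
small_gain = absolute_gain(15.0, 35.0)

print(f"AWS adds ~${aws_gain:.1f}B at 24% growth")
print(f"Hypothetical provider adds ~${small_gain:.1f}B at 35% growth")
```

With these inputs, AWS’s 24% corresponds to roughly $6.9 billion of additional quarterly revenue, while the hypothetical provider’s faster 35% adds only about $3.9 billion: a higher percentage on a smaller base can still mean fewer added dollars.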

Table — Amazon capex and AWS growth (recent public figures)

| Indicator | Value |
|---|---|
| Amazon capex 2025 | $131 billion |
| Amazon capex 2026 (projected) | $200 billion |
| Approximate change, 2025 to 2026 | +52.7% |
| AWS revenue (cited quarter) | $35.6 billion |
| AWS YoY growth (cited quarter) | +24% |

The “AI cloud” changes the rules: more energy, more network, more chips

For years, cloud expansion was explained as a migration: moving on-premises servers to a provider. AI has changed that script. Training and deploying modern models drastically increases compute and network consumption and forces a reevaluation of all surrounding infrastructure: from data center topology to thermal design.

Amazon has also avoided relying solely on external silicon. Its Trainium and Inferentia chips, designed for training and inference respectively, are part of a strategy to optimize costs and availability in a market where specialized hardware has become scarce. At this point, capex no longer just means “more buildings”: it also involves more platform engineering, lower-latency network capacity, and investment in components that shorten the path from data to decision.

A hyperscale race measured in hundreds of billions

Amazon is not alone. Bridgewater estimates that Alphabet, Amazon, Meta, and Microsoft could collectively invest around $650 billion in AI infrastructure in 2026, up from $410 billion in 2025. This analysis depicts a riskier phase: demand for compute continues to outpace supply, prompting the sector to accelerate spending to catch up.
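A rough sketch of what the Bridgewater figures imply, again in Python. It computes the year-over-year growth of the combined estimate and, as a loose comparison, Amazon’s reported 2026 plan as a share of that total. This assumes Amazon’s full $200 billion capex falls within the scope of Bridgewater’s “AI infrastructure” estimate, which may not be the case, since the two figures come from different sources.

```python
combined_2025 = 410.0  # $ billion, Bridgewater estimate (Alphabet, Amazon, Meta, Microsoft)
combined_2026 = 650.0  # $ billion, Bridgewater estimate for 2026
amazon_2026 = 200.0    # $ billion, Amazon's reported 2026 capex plan

# Year-over-year growth of the combined estimate.
growth = (combined_2026 - combined_2025) / combined_2025 * 100

# Loose comparison only: assumes Amazon's total capex is counted in the combined figure.
amazon_share = amazon_2026 / combined_2026 * 100

print(f"Combined growth: +{growth:.1f}%")                  # prints: Combined growth: +58.5%
print(f"Amazon share of 2026 total: {amazon_share:.1f}%")  # prints: Amazon share of 2026 total: 30.8%
```

Under that assumption, the four companies’ combined spending would grow by roughly 58%, with Amazon accounting for close to a third of it.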

This dynamic creates a domino effect. More investment puts greater pressure on supply chains, industrial land, permits, power availability, and energy prices. It also deepens dependence: if a company builds its operations around cloud AI, capacity availability and resilience stop being “technical details” and become strategic business requirements.

Implications for companies: capacity, timelines, and provider strategy

For enterprises, the practical takeaway is straightforward: Amazon believes demand will keep growing, and it is trying to keep AWS from becoming a bottleneck. If the investment translates into usable capacity, organizations should see:

- Less friction to scale AI projects (from pilot to production) without hitting infrastructure limits.

- More architecture options, especially if AWS expands the availability and maturity of its own AI hardware.

- A cloud increasingly serving as an “automation platform”, not just hosting: infrastructure becomes part of the product.

However, there’s also an implicit warning: if the industry misjudges adoption pace or future profitability, maintaining that infrastructure could strain margins and prices. The debate is no longer just technological but also financial and operational.

Ultimately, the major transformation by 2026 isn’t that “the cloud makes AI”; it’s that AI is forcing cloud providers to operate like heavy industry, with investment on a scale approaching that of electric utilities, long-term planning, and a quiet battle for the physical resources that make the software possible.

Frequently Asked Questions

What does “capex” mean for AWS, and why does it matter for generative AI projects?

Capex refers to spending on infrastructure (data centers, servers, network, chips, etc.). In generative AI, it’s critical because it determines how much real capacity exists for training and running large-scale models.

How do AWS’s Trainium and Inferentia chips affect cloud AI costs?

They are chips designed by Amazon for training (Trainium) and inference (Inferentia). Wider adoption could improve availability and lower costs compared with third-party alternatives, depending on the workload and the software stack used.

Can this expansion address the “GPU capacity shortage” problem in the cloud?

That’s the goal: expanding data centers and platforms should ease some pressure. Still, demand is growing rapidly, and supply-demand balance may remain tight during peaks.

What should CIOs and platform teams consider when planning AI for 2026–2027?

Design with resilience: multi-region strategies, cost observability, capacity contingency plans, and realistic dependency assessments (model, data, network, latency, and provider commitments).