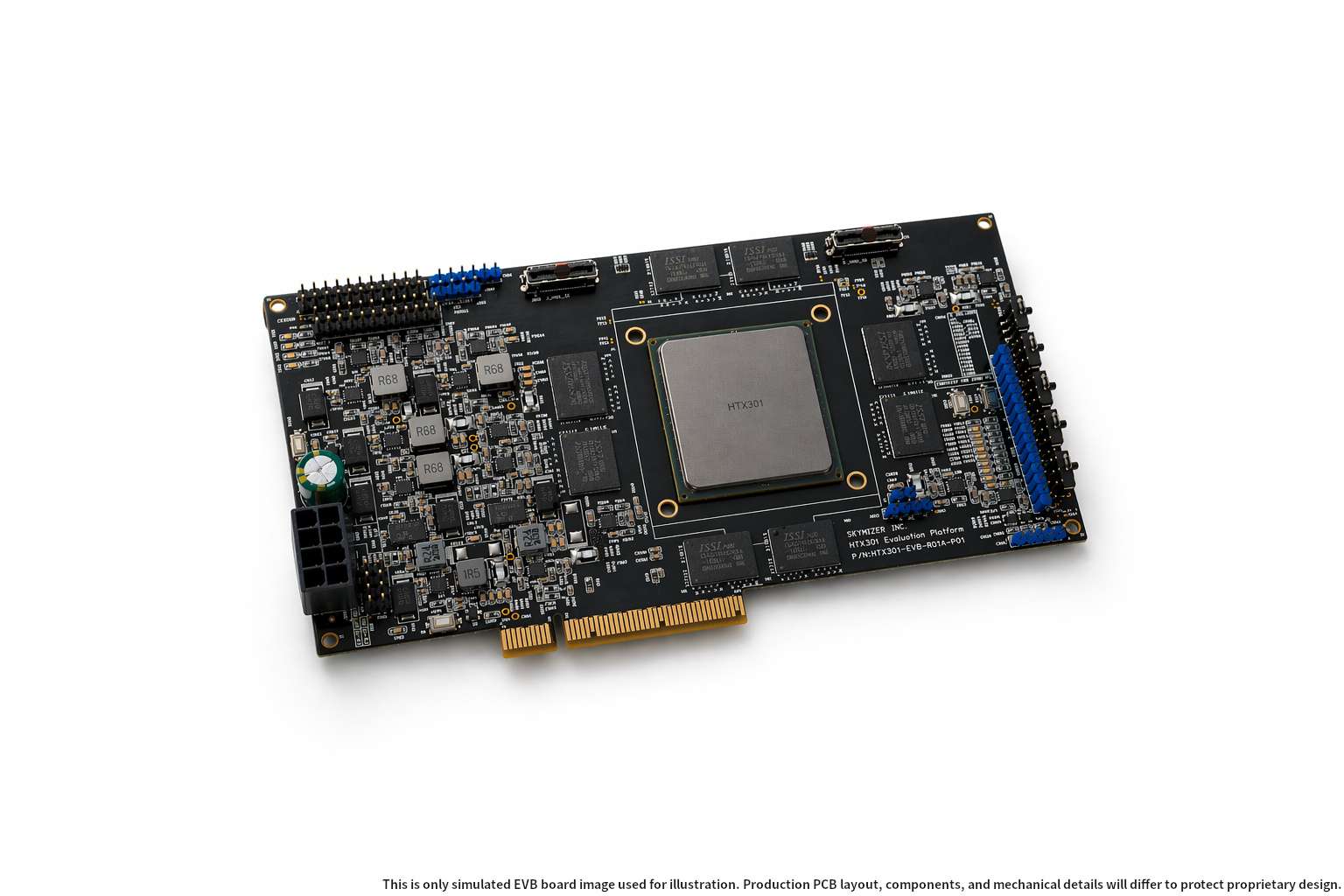

Skymizer has introduced HTX301, their first reference chip based on the HyperThought platform, offering an impressive promise to the AI inference market: running language models with up to 700 billion parameters locally on a single PCIe card equipped with six HTX301 chips, 384 GB of memory, and an estimated power consumption of around 240 W per card.

The proposal directly addresses one of the major challenges in enterprise AI. Many companies want to use large models without sending sensitive data to external services, but deploying advanced inference locally often requires GPU clusters, costly interconnects, complex cooling, and specialized hardware. Skymizer claims that its architecture can simplify such deployments by separating the inference phases more cleanly and shifting part of the workload to silicon designed specifically for token generation.

The announcement calls for cautious optimism. The company describes a significant advance and offers early access, but independent testing and public data on per-model performance, accuracy, quantization, real-world latency, commercial pricing, and volume availability have not yet been published. Nonetheless, the approach is intriguing because it aligns with a clear trend: inference is beginning to outweigh training in AI operational expenditure.

A chip designed for the generation phase

Skymizer positions HTX301 as a response to the shifting usage of large models. During the initial stages of generative AI development, much of the focus was on training ever larger models. Now, many companies face a different challenge: how to run these models consistently, securely, and predictably in real applications such as chatbots, internal copilots, search systems, customer service, document analysis, or programming tools.

The core technical innovation of HyperThought is in separating two inference phases of language models. The first is the “prefill” phase, where the system processes the input prompt; this is computation-intensive. The second is the “decode” phase, where the model generates tokens one-by-one; this stage is typically more limited by memory bandwidth and latency than raw processing power.
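A rough way to see why the two phases stress hardware differently is to compare how much arithmetic each performs per byte of weights read from memory. The sketch below is a back-of-the-envelope model, not Skymizer's analysis: it assumes a dense model costing about 2 FLOPs per parameter per token, ignores attention and KV-cache traffic, and uses hypothetical values (a 70B-parameter model, 2 bytes per parameter, 1 TB/s of memory bandwidth) that are not HTX301 figures.

```python
def arithmetic_intensity(tokens_per_weight_pass: int, bytes_per_param: float) -> float:
    """Approximate FLOPs performed per byte of weights read.

    Assumes a dense model costing ~2 FLOPs per parameter per token and
    ignores attention and KV-cache traffic.
    """
    return 2.0 * tokens_per_weight_pass / bytes_per_param


def decode_tokens_per_second_bound(num_params: float, bytes_per_param: float,
                                   bandwidth_bytes_per_s: float) -> float:
    """Upper bound on single-stream decode speed if every weight is read once per token."""
    return bandwidth_bytes_per_s / (num_params * bytes_per_param)


# Hypothetical example values, not HTX301 figures.
prefill_intensity = arithmetic_intensity(tokens_per_weight_pass=2048, bytes_per_param=2.0)
decode_intensity = arithmetic_intensity(tokens_per_weight_pass=1, bytes_per_param=2.0)
bound = decode_tokens_per_second_bound(num_params=70e9, bytes_per_param=2.0,
                                       bandwidth_bytes_per_s=1e12)

print(f"prefill: {prefill_intensity:.0f} FLOPs/byte, decode: {decode_intensity:.0f} FLOPs/byte")
print(f"decode bound: {bound:.1f} tokens/s")
```

Under these assumptions, a 2,048-token prompt reuses each weight thousands of times per read, while single-stream decoding reuses it once, so decode speed is capped by memory bandwidth rather than by compute.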

Current GPUs can perform both tasks, but they are not always the most efficient option for each. Skymizer argues that its “decode-first” architecture lets specialized chips handle token generation while existing GPUs handle the more compute-heavy workloads. The company notes that HTX301 complements GPU infrastructure rather than replacing it entirely.

This distinction matters. The most striking message in the announcement suggests that large GPU clusters are no longer needed to run huge models locally, but the actual technical strategy appears more measured: use specialized hardware to make inference more efficient and free up GPU resources in certain scenarios.

Data sovereignty and more predictable costs

The business argument is as crucial as the technical one. Skymizer targets companies that prefer not to rely on cloud inference billed per token. In sectors like banking, healthcare, legal, government, defense, industry, and chip design, sending sensitive data to external platforms can pose privacy, regulatory, intellectual property, or operational control issues.

If the promised hardware enables running large models locally, it could transform deployment costs and architecture. Instead of paying per-use fees for cloud services or deploying complex clusters, an organization could install dedicated units for specific workloads, keeping data, models, and responses within their own infrastructure.

The company also mentions use cases like private coding copilots, RTL design assistants, contract review, clinical analysis, fraud detection, or enterprise agents. These scenarios demand low latency, privacy, and predictable costs. However, the suitability will depend on the chosen model, query volume, security requirements, and the software ecosystem’s maturity.

HTX301 is built on LISA, Skymizer’s Language Instruction Set Architecture, a proprietary architecture focused on transformer inference. The company previously presented HyperThought as hardware IP for generative and multimodal AI acceleration, targeting deployments that range from edge devices to on-premises systems. Synopsys noted in 2025 that Skymizer used its HAPS platform to validate HyperThought hardware before final silicon production, indicating an approach rooted in prototyping and hardware-software co-design.

A bold promise, but still needing validation

The most ambitious claim is that a single PCIe card with six HTX301 chips and 384 GB of memory can run inference for models with 700 billion parameters using only about 240 W. This is very attractive compared to the typical deployment of large models across multiple high-end GPUs. Yet, key details are missing to assess the actual potential: the exact model format, quantization type, tokens per second, concurrent user capacity, context size, output quality, sustained performance, and how it compares to current GPUs in similar conditions.

In AI, memory capacity isn’t the whole story. A 700B-parameter model can occupy very different amounts of memory depending on its precision and quantization scheme. Additionally, memory for the KV cache, context, batching, runtime, and orchestration must be budgeted. Therefore, while the announcement opens an interesting possibility, independent benchmarks and tests with well-known models are necessary to evaluate its real-world impact.
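The dependence on precision is easy to check with simple arithmetic. The sketch below estimates the memory needed for the weights alone at a few common bit widths and compares it with the 384 GB quoted for the card. It assumes decimal gigabytes and ignores quantization metadata (scales, zero points), the KV cache, and runtime overhead; the announcement does not state which precision Skymizer actually uses.

```python
CARD_MEMORY_GB = 384   # card memory quoted in the announcement
NUM_PARAMS = 700e9     # largest model size quoted in the announcement


def weight_memory_gb(num_params: float, bits_per_param: float) -> float:
    """Memory for the weights alone, in decimal GB (no KV cache or runtime overhead)."""
    return num_params * bits_per_param / 8 / 1e9


# Bit widths chosen for illustration; the actual format is not disclosed.
for label, bits in [("16-bit", 16), ("8-bit", 8), ("4-bit", 4)]:
    needed = weight_memory_gb(NUM_PARAMS, bits)
    headroom = CARD_MEMORY_GB - needed
    verdict = "fits" if headroom >= 0 else "does not fit"
    print(f"{label}: {needed:.0f} GB of weights, {verdict} (headroom {headroom:.0f} GB)")

# Largest average bit width at which the weights alone would fit on the card.
max_bits = CARD_MEMORY_GB * 1e9 * 8 / NUM_PARAMS
print(f"max average bits per parameter: {max_bits:.2f}")
```

By this estimate, 700 billion parameters fit in 384 GB only at roughly 4 bits per parameter, which leaves a few tens of gigabytes for everything else. That is why the quantization scheme and supported context size are among the most important missing details.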

Market trends favor such proposals. As companies shift from experimenting with chatbots to deploying agents and automation, inference transitions from an occasional test to a continuous operational load. Every query, agent, chained task, and workflow consumes tokens, and rapid consumption can make cloud inference costly or prohibitive.
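To illustrate how quickly agent workloads multiply token consumption, the following sketch estimates a monthly per-token bill. Every number in it is hypothetical: the query volume, the number of model calls per query, the tokens per call, and the price per million tokens are placeholders, not figures from Skymizer or any cloud provider.

```python
def monthly_tokens(queries_per_day: int, calls_per_query: int,
                   tokens_per_call: int, days: int = 30) -> int:
    """Total tokens consumed in a month when each query triggers a chain of model calls."""
    return queries_per_day * calls_per_query * tokens_per_call * days


def monthly_cost(tokens: int, price_per_million_tokens: float) -> float:
    """Per-token billing: cost scales linearly with consumption."""
    return tokens / 1_000_000 * price_per_million_tokens


# Hypothetical workload: an agent that makes 8 model calls per user query.
tokens = monthly_tokens(queries_per_day=5_000, calls_per_query=8, tokens_per_call=3_000)
cost = monthly_cost(tokens, price_per_million_tokens=5.0)  # hypothetical price

print(f"{tokens:,} tokens per month, about ${cost:,.0f} at the assumed price")
```

The point of the exercise is the multiplication, not the totals: a chained workflow that calls the model several times per request scales the bill by the same factor, which is the pressure that makes fixed-cost local hardware attractive.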

This is where alternative architectures to general-purpose GPUs enter the picture. Skymizer is not alone: the industry as a whole is exploring specialized chips, NPUs, LPUs, edge accelerators, inference ASICs, near-memory architectures, and new workload separation techniques. The goal isn’t always maximum raw power but better cost per token, efficiency, latency, and deployment simplicity.

Skymizer seeks to distinguish itself with an end-to-end solution: chip, card, memory, ISA, and orchestration software to separate prefill and decode. If they can turn this vision into a stable product, it could appeal to companies seeking private, controlled AI without building massive hyperscale infrastructure.

Whether HTX301 will become a viable alternative or just another ambitious promise in a crowded market remains uncertain. AI hardware is complex, and a good chip alone isn’t enough. Software ecosystems, compilers, model support, framework integration, updates, monitoring tools, commercial availability, and customer trust are all critical. GPUs excel not just because of their performance but also because of the ecosystem built around them.

HTX301 deserves attention because it addresses a real need: making local inference for large models simpler, more efficient, and cheaper. But its actual impact will be determined by real-world tests. If Skymizer can demonstrate sustained performance and practical compatibility with enterprise models, it could open an interesting pathway for bringing advanced AI out of large data centers and into more localized deployments.

Frequently Asked Questions

What is Skymizer HTX301?

HTX301 is Skymizer’s first reference chip based on the HyperThought platform, designed for local inference of language models and AI workloads.

Can it run 700B parameter models on a single card?

Skymizer states that a PCIe card with six HTX301 chips and 384 GB of memory can perform local inference for models of up to 700 billion parameters at around 240 W. Broad independent validation is still needed to confirm actual performance, accuracy, and the specific conditions under which the claim holds.

Does HTX301 replace GPUs?

Not necessarily. Skymizer presents HTX301 as a complementary architecture that can offload the decode phase of inference, allowing GPUs to focus on more calculation-intensive tasks.

Which companies might find it useful?

Organizations that want to run AI locally for reasons of privacy, data sovereignty, low latency, or predictable costs may find it useful, especially in banking, healthcare, legal, government, industry, defense, or software development.

via: skymizer.ai