Nanya Technology is reportedly part of the memory supply chain for NVIDIA’s upcoming Vera Rubin AI platform, according to Taiwanese semiconductor industry sources. If confirmed, this would be significant. It would place the Taiwanese manufacturer inside one of the most coveted hardware ecosystems on the market: NVIDIA’s next-generation AI servers, where memory has become as strategically important as the GPU itself.

Neither NVIDIA nor Nanya has publicly confirmed the deal as reported by Asian media. According to those reports, it covers LPDDR5X products intended for the CPU side of Vera Rubin. The platform pairs the Vera CPU with Rubin GPUs, and each uses a different type of memory: LPDDR5X for the CPU and HBM4 for the GPUs. This split explains how a company like Nanya can take part in the system without competing directly with the major HBM suppliers, namely Samsung, SK hynix, and Micron.

LPDDR5X Reaches the Heart of AI Servers

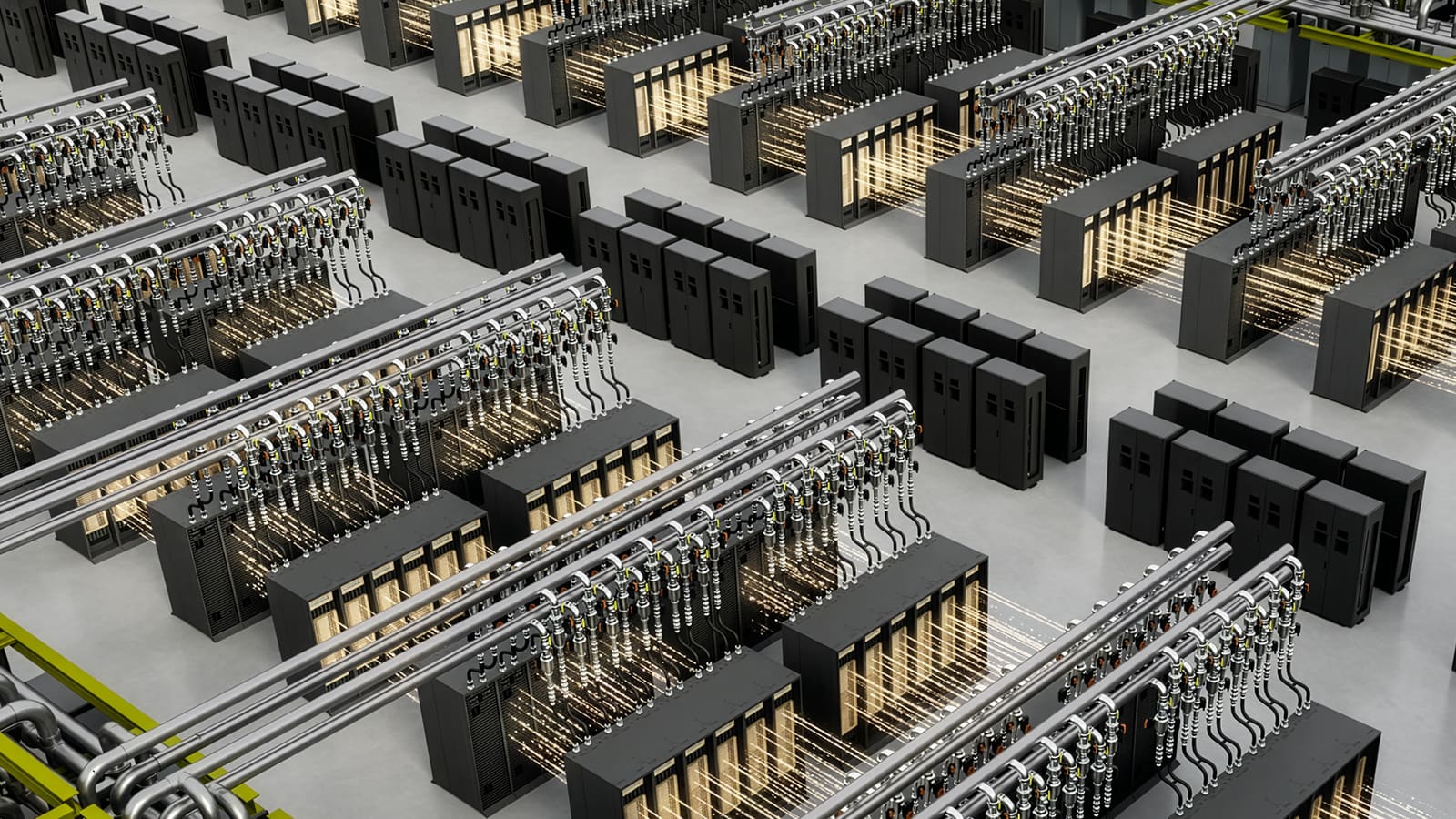

LPDDR memory is typically associated with mobile devices, lightweight laptops, and gadgets where power efficiency is critical. In Vera Rubin, NVIDIA is bringing this type of memory to the data center through SOCAMM, a modular format that aims to combine energy efficiency, capacity, and easier maintenance compared to soldered memory.

NVIDIA describes the Vera CPU as an 88-core custom Arm processor designed for AI workloads, reinforcement learning, orchestration, and memory-intensive tasks. According to the company, Vera supports up to 1.5 TB of LPDDR5X and up to 1.2 TB/s of memory bandwidth. In the Vera Rubin NVL72 configuration, the platform's CPUs reach a combined 54 TB of LPDDR5X per rack.
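The rack-level figure can be cross-checked against the per-CPU numbers. The sketch below is a back-of-envelope calculation, not an NVIDIA specification. It assumes every Vera CPU in the rack carries the full 1.5 TB and runs at the 1.2 TB/s peak. The article does not state the CPU count per rack, so the count is derived here. The bandwidth total is a simple theoretical sum, not a measured figure. The constant and function names are hypothetical and exist only for illustration.

```python
# Back-of-envelope check of the Vera Rubin NVL72 CPU memory figures.
# Assumption: every Vera CPU is populated with the maximum 1.5 TB of LPDDR5X
# and runs at its peak 1.2 TB/s. Names below are hypothetical, for illustration.

PER_CPU_CAPACITY_TB = 1.5      # max LPDDR5X per Vera CPU (as reported)
PER_CPU_BANDWIDTH_TBPS = 1.2   # peak memory bandwidth per Vera CPU (as reported)
RACK_CAPACITY_TB = 54.0        # combined CPU LPDDR5X per NVL72 rack (as reported)


def implied_cpu_count(rack_tb: float, per_cpu_tb: float) -> int:
    """CPUs needed to reach the rack total if each holds per_cpu_tb."""
    count = rack_tb / per_cpu_tb
    if not count.is_integer():
        raise ValueError("rack total is not a whole multiple of per-CPU capacity")
    return int(count)


cpus = implied_cpu_count(RACK_CAPACITY_TB, PER_CPU_CAPACITY_TB)
# Theoretical ceiling: per-CPU peak bandwidth summed across all CPUs.
aggregate_bw = cpus * PER_CPU_BANDWIDTH_TBPS

print(f"Implied Vera CPUs per rack: {cpus}")
print(f"Implied aggregate CPU memory bandwidth: {aggregate_bw:.1f} TB/s")
# prints "Implied Vera CPUs per rack: 36"
# prints "Implied aggregate CPU memory bandwidth: 43.2 TB/s"
```

Under these assumptions, 54 TB divided by 1.5 TB implies 36 Vera CPUs per rack. Their summed peak bandwidth would be roughly 43.2 TB/s. Real deployments may use smaller memory configurations per CPU.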

This is where Nanya’s potential role comes into play. According to Asian reports, the Taiwanese firm has been selected as an LPDDR provider for Vera Rubin, with technical support from TSMC on integration or packaging. If confirmed, this would be a symbolic and commercially important entry for Taiwan’s memory industry, which has so far played a more limited role in NVIDIA’s AI platforms than Korean and American suppliers.

This is not just secondary memory. In modern AI architectures, the CPU does more than support the GPU. It manages data, orchestrates data flows, maintains software environments, coordinates tasks, and stages workloads. It can also take part in critical stages of inference, agentic workloads, and simulation. Without enough memory bandwidth or capacity at the CPU level, the efficiency of the entire system can suffer.

Vera Rubin Shifts the Memory Pressure

Vera Rubin arrives amid global supply tensions in DRAM, NAND, HBM, and high-performance storage. AI demand has driven investment into advanced memory, especially HBM, but it is also affecting the prices and availability of other DRAM types. In this context, NVIDIA needs to diversify its suppliers and secure capacity before the next generation of servers enters volume production.

The choice of LPDDR5X for the Vera CPU reflects a technical trade-off. Compared with traditional DDR, LPDDR5X offers better energy efficiency and higher bandwidth in a more compact form factor. In AI racks, power and cooling are critical constraints. Lowering memory power per unit of capacity can therefore free up headroom for compute.

The SOCAMM format matters as well. NVIDIA argues that these modules make it easier to bring low-power memory into the data center, with simpler maintenance and fault isolation. Unlike soldered memory, a modular format can allow component replacement, more flexible configurations, and service cycles better suited to server environments.

| Element of Vera Rubin | Associated Memory | Main Role |
|---|---|---|
| NVIDIA Vera CPU | LPDDR5X via SOCAMM | Orchestration, control, system memory, and agent workloads |
| NVIDIA Rubin GPU | HBM4 | Training, inference, and accelerated computing |
| Vera Rubin NVL72 Rack | LPDDR5X + HBM4 | Integrated data center-scale AI platform |

For Nanya, entering this space would mean moving up a level. The company is known primarily for DRAM, including DDR and LPDDR products. The AI memory supply chain, by contrast, has been concentrated among a handful of vendors able to meet strict technical, volume, and certification requirements. Having its LPDDR5X included in a platform like Vera Rubin would signal a stronger position in higher-value segments.

Taiwan Gains Another Piece in the AI Supply Chain

Taiwan already holds a central position in AI through TSMC and through its makers of servers, boards, power supplies, and cooling systems, as well as its system assemblers. Nanya’s potential involvement adds another link: main memory for AI platforms. It falls short of a role in HBM, but it reinforces Taiwan’s presence in an area where South Korea and the US have traditionally been more influential.

The move also has geopolitical implications. NVIDIA relies on a highly integrated global supply chain: chips manufactured by TSMC, HBM from major international suppliers, advanced assembly, and servers built by Asian manufacturers. In a market facing shortages, every additional supplier able to meet the specifications reduces supply risk and strengthens NVIDIA’s bargaining position.

Market reports suggest the news may already have boosted investor interest in Taiwanese memory companies. The enthusiasm is understandable, but caution is warranted. Entering NVIDIA’s supply chain does not guarantee large orders right away. AI platforms go through validation, ramp-up, and technical reviews, and they depend on the final availability of the entire architecture.

The broader reality, however, is clear: the next generation of AI will not be decided by GPUs alone. Memory has become a critical technical, economic, and strategic bottleneck. HBM dominates the headlines because it feeds the accelerators directly, but LPDDR5X, SOCAMM, high-performance SSDs, and low-latency networks belong to the same ecosystem. Without this foundation, models cannot be trained or run with the promised efficiency.

Nanya could use this moment to reposition itself. Suppose it can scale with reliability, quality, and competitive costs. Entry into Vera Rubin could then open doors to other server designs, AI-oriented CPUs, compact modules, and platforms where efficiency per watt counts for more than traditional memory criteria. AI is redrawing the supplier map, and not everyone who dominated memory markets over the past decade will benefit equally.

This news also underscores a broader point: NVIDIA is not just selling GPUs. It is building complete platforms that span CPUs, GPUs, memory, networking, interconnects, software, and entire racks. Joining this supply chain means engaging with an architecture that could set the pace for AI data centers in the coming years.

Frequently Asked Questions

What has Nanya Technology reportedly achieved?

Nanya has reportedly been selected as an LPDDR memory supplier for NVIDIA’s Vera Rubin platform, according to supply chain sources cited by Asian media.

What type of memory does NVIDIA Vera Rubin use?

The Vera CPU uses LPDDR5X in SOCAMM modules, while the Rubin GPUs use HBM4 for high-performance AI workloads.

Why is LPDDR5X important in AI servers?

It offers high capacity and bandwidth at lower power than traditional DDR-based configurations. That matters in AI racks, where energy, space, and cooling are critical constraints.

Has NVIDIA officially confirmed Nanya as a supplier?

No. Neither NVIDIA nor Nanya has publicly confirmed it. The information comes from supply chain sources and should be treated as unconfirmed.