Google Cloud and NVIDIA have expanded their collaboration to bring NVIDIA’s next-generation Vera Rubin GPUs to Google’s AI Hypercomputer infrastructure. The announcement centers on A5X, a new family of bare metal instances designed for agentic and physical AI workloads, and its headline scale is hard to ignore: up to 80,000 NVIDIA Rubin GPUs within a single data center and up to 960,000 GPUs in clusters spanning multiple locations.

This figure doesn’t mean any customer will simply press a button and reserve nearly a million GPUs at once. Instead, it highlights the direction large cloud providers’ AI infrastructure is heading: systems capable of integrating chips, networking, storage, and orchestration software so that increasingly complex models can be trained, fine-tuned, and deployed with fewer bottlenecks. Google presents this as an extension of its AI Hypercomputer, the same underlying technology behind Gemini and its enterprise and consumer AI services.

A5X: Google’s bet on Rubin within its AI Hypercomputer

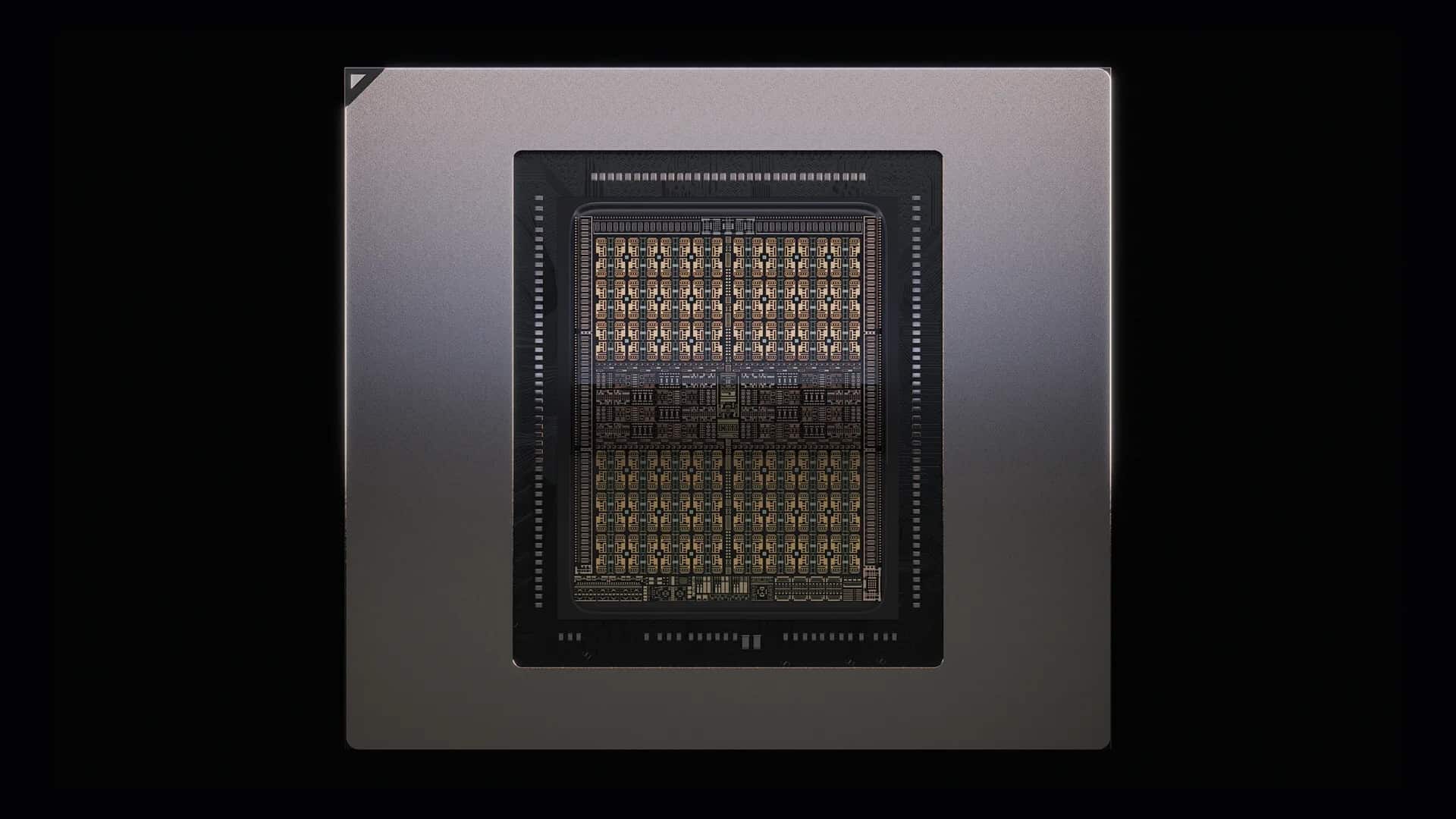

A5X will be based on NVIDIA Vera Rubin NVL72, NVIDIA’s next-generation architecture for rack-scale AI systems. Google states that it will be among the first providers to offer instances based on Vera Rubin when the platform becomes available later in 2026. The distinction matters: A5X is not an instance type deployed today but announced infrastructure for the next phase of Google Cloud’s expansion.

The A5X approach aligns with a growing market reality. AI workloads are no longer limited to training large models. Large-scale inference, task-executing agents, reasoning flows, model tuning, and physical AI for robotics or digital twins demand more flexible architectures. It’s not enough to have many GPUs; they must be connected with low latency, kept supplied with data, able to resume tasks when nodes fail, and held at high utilization to justify the investment.

NVIDIA claims that A5X, through the combination of Vera Rubin NVL72, ConnectX-9 SuperNICs, and Google’s Virgo network, can deliver up to 10 times lower inference cost per token and up to 10 times higher token throughput per megawatt compared to the previous generation. These numbers are vendor estimates and should be read as projections for specific scenarios, but they underline a real shift: AI now competes not just on accuracy but on operational cost and energy efficiency.
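To make the "up to 10 times" claims concrete, here is a minimal Python sketch of what they would imply if the best case held. The baseline cost and throughput values are made-up placeholders, not figures from Google or NVIDIA, and the function names are hypothetical.

```python
# Illustrative arithmetic only: the baselines are hypothetical, and the
# 10x factors are the vendor's "up to" best case, not measured results.

def cost_per_million_tokens(baseline_usd: float, cost_reduction: float = 10.0) -> float:
    """Cost per million tokens after an N-times reduction in cost per token."""
    return baseline_usd / cost_reduction


def tokens_per_second(baseline_tps_per_mw: float, power_mw: float,
                      throughput_gain: float = 10.0) -> float:
    """Aggregate token throughput for a fixed power budget in megawatts."""
    return baseline_tps_per_mw * throughput_gain * power_mw


# Hypothetical baseline: 2.00 USD per million tokens on the previous generation.
new_cost = cost_per_million_tokens(2.00)

# Hypothetical baseline: 100,000 tokens/s per MW, with a 5 MW power budget.
new_throughput = tokens_per_second(100_000, power_mw=5)

print(f"Cost per million tokens: {new_cost:.2f} USD")
print(f"Throughput at 5 MW: {new_throughput:,.0f} tokens/s")
```

The point of the second function is that the power budget, not the GPU count, is the fixed input: for a data center capped at a given number of megawatts, a gain in tokens per megawatt translates directly into more tokens served.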

Google is also integrating concepts from the Falcon network protocol, developed alongside NVIDIA via the Open Compute Project. The goal is to improve data transport reliability in networks designed for AI clusters, where even small efficiency losses can multiply when tens of thousands of accelerators are involved.

Virgo and ConnectX-9: networking as a core component

The figure of 960,000 GPUs draws attention, but the real technical key is the network. Google’s Virgo Network fabric is the interconnect backbone Google is using to scale AI workloads within data centers and across multiple locations. With its eighth-generation TPUs, Google claims Virgo can connect 134,000 TPUs in a single data center and over one million TPUs across multiple sites. For A5X, the same technology extends to NVIDIA Vera Rubin NVL72, with announced limits of 80,000 GPUs in a single site and 960,000 in multi-site deployments.
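A quick back-of-the-envelope calculation helps put these limits in physical terms. The sketch below assumes 72 GPUs per rack, as the "NVL72" name implies, and assumes every site is filled to the single-site maximum; the announcement itself does not state rack or site counts.

```python
import math

# Figures from the announcement.
SINGLE_SITE_GPUS = 80_000
MULTI_SITE_GPUS = 960_000

# Assumption: one NVL72 rack-scale system holds 72 GPUs, per its name.
GPUS_PER_RACK = 72

# Racks needed to reach each limit (rounded up to whole racks).
racks_single_site = math.ceil(SINGLE_SITE_GPUS / GPUS_PER_RACK)
racks_multi_site = math.ceil(MULTI_SITE_GPUS / GPUS_PER_RACK)

# Number of fully populated sites implied by the multi-site limit.
# Assumption: every site runs at the single-site maximum.
implied_sites = MULTI_SITE_GPUS // SINGLE_SITE_GPUS

print(f"Racks for one site:    {racks_single_site:,}")
print(f"Racks across all sites: {racks_multi_site:,}")
print(f"Implied full sites:     {implied_sites}")
```

Under these assumptions, the single-site limit corresponds to a little over 1,100 racks, and the multi-site limit to the equivalent of 12 fully populated sites.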

This scale changes the nature of the challenge. In small clusters, the focus is often on per-GPU performance. In giant clusters, bottlenecks may come from node-to-node communication, training synchronization, storage access, fault recovery, or workload distribution. Avoiding them requires the GPU, NIC, network, software, and services to be tuned as a single system.

ConnectX-9 SuperNIC is NVIDIA’s hardware for accelerating network communication in cloud infrastructures over Ethernet. Its role is to reduce penalties when AI workloads are distributed across many servers. Virgo provides the layer for Google to connect this capacity within its AI Hypercomputer. The overall aim is to make each data center not an isolated island but part of a broader super-structure.

This strategy also indicates that Google does not want to choose between its own TPUs and NVIDIA GPUs. The company is strengthening its internal chips, like TPU 8t for training and TPU 8i for inference and reinforcement learning, while expanding its NVIDIA-based offerings. For customers, this hybrid approach can be advantageous: some models and frameworks will perform better on TPUs, others on GPUs, and many companies will prefer to maintain compatibility with CUDA, NVIDIA libraries, and established ecosystems.

Agentic AI needs more than just accelerators

Google Cloud introduced these updates within a broader package for what it calls agentic enterprise infrastructure. Alongside A5X and Virgo, the company announced new machines with Axion CPUs based on Arm, native PyTorch support for TPUs, improvements to Google Kubernetes Engine, new high-performance storage services, and capabilities to accelerate node and pod startup times.

The technical implication is clear: AI agents do not live only inside the model. They need to invoke tools, access databases, coordinate tasks, save context, evaluate responses, run code, retrieve documents, and respond with low latency. GPUs or TPUs are essential for the core model work, but around them is a layer of CPU, networking, storage, and orchestration that can distinguish a flashy demo from a production-ready service.

Google addresses this with its Axion N4A instances, optimized for agent runtimes, reward calculations, orchestration, and support tasks. It has also improved GKE to speed up node and pod launches, and introduced an Inference Gateway with predictive routing to reduce time to first token. In conversational AI applications, this latency directly affects user experience.
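The idea behind latency-aware routing can be illustrated with a toy Python sketch. This is not how Google's Inference Gateway works internally, which the announcement does not describe; the `Replica` fields and the simple queue-based prediction are assumptions chosen only to show the principle of sending each request to the replica expected to produce its first token soonest.

```python
from dataclasses import dataclass


@dataclass
class Replica:
    """Hypothetical view of one model-serving replica."""
    name: str
    queued_prompt_tokens: int    # prompt tokens already waiting for prefill
    prefill_tokens_per_s: float  # observed prefill rate of this replica


def predicted_ttft(replica: Replica, prompt_tokens: int) -> float:
    """Estimate seconds until the first token: queued work plus this prompt."""
    total_tokens = replica.queued_prompt_tokens + prompt_tokens
    return total_tokens / replica.prefill_tokens_per_s


def pick_replica(replicas: list[Replica], prompt_tokens: int) -> Replica:
    """Route to the replica with the lowest predicted time to first token."""
    return min(replicas, key=lambda r: predicted_ttft(r, prompt_tokens))


replicas = [
    Replica("fast-but-busy", queued_prompt_tokens=8_000, prefill_tokens_per_s=4_000),
    Replica("slow-but-idle", queued_prompt_tokens=2_000, prefill_tokens_per_s=2_000),
]
chosen = pick_replica(replicas, prompt_tokens=1_000)
print(chosen.name, predicted_ttft(chosen, 1_000))
```

In this example the slower replica wins because its queue is short, which is the kind of decision a round-robin balancer would not make.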

Storage is another vital yet less flashy aspect. Google Cloud Managed Lustre increases bandwidth up to 10 TB/s and capacity up to 80 petabytes, according to Google. Additionally, Rapid Buckets in Google Cloud Storage aim for low-latency checkpoints and training recoveries. If data doesn’t arrive on time, expensive accelerators are left waiting.
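The cost of slow storage is easy to estimate. The sketch below uses the 10 TB/s and 80 PB figures quoted above, with a hypothetical 20 TB checkpoint and a hypothetical efficiency factor to model the gap between peak and sustained bandwidth; decimal units are assumed (1 PB = 1,000 TB).

```python
def checkpoint_seconds(checkpoint_tb: float,
                       bandwidth_tb_per_s: float = 10.0,
                       efficiency: float = 1.0) -> float:
    """Time to write one checkpoint at a given fraction of peak bandwidth."""
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")
    return checkpoint_tb / (bandwidth_tb_per_s * efficiency)


def checkpoints_that_fit(checkpoint_tb: float, capacity_pb: float = 80.0) -> int:
    """How many checkpoints of this size fit in the file system (1 PB = 1,000 TB)."""
    return int(capacity_pb * 1000 // checkpoint_tb)


# Hypothetical 20 TB checkpoint (model weights plus optimizer state).
at_peak = checkpoint_seconds(20)
at_half = checkpoint_seconds(20, efficiency=0.5)
retained = checkpoints_that_fit(20)

print(f"Write time at peak bandwidth: {at_peak:.1f} s")
print(f"Write time at 50% of peak:    {at_half:.1f} s")
print(f"Checkpoints that fit in 80 PB: {retained:,}")
```

If accelerators pause while a checkpoint is written, every second here is multiplied by the number of idle GPUs, which is why storage bandwidth matters at this scale.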

Google and NVIDIA need each other more than they compete

The announcement comes at a time when Google is heavily investing in its TPUs while NVIDIA continues to dominate much of the AI accelerator market. On the surface, the relationship might seem contradictory: Google competes with NVIDIA on specialized chips but also needs to offer NVIDIA GPUs because its clients request them and because much enterprise AI software is built around CUDA ecosystems.

NVIDIA, for its part, benefits from being present within one of the world’s largest clouds. Google Cloud provides customers, data centers, networks, managed services, security, Kubernetes, storage, and an enterprise layer that simplifies deploying models into production. For NVIDIA, selling hardware alone is no longer enough; it increasingly matters how its platforms integrate into complete AI architectures.

The collaboration also extends to Gemini running in Google Distributed Cloud on Blackwell and Blackwell Ultra GPUs, confidential machines with NVIDIA Blackwell, and the use of Nemotron models and the NeMo framework within agent platforms. This positions NVIDIA not just as a chip supplier but as part of the software stack that companies use to build agents, simulations, robotics, and industrial applications.

The prospect of nearly a million GPUs is ambitious, but the real focus is practical: the next phase of AI will depend less on who has the most powerful chip and more on how efficiently accelerators, networking, storage, software, and energy are combined. Google and NVIDIA aim to demonstrate that this scale can be packaged as a cloud service.

For customers, A5X could become a key option if they need to train very large models, serve large-scale inference, or deploy complex agents with demanding performance requirements. For the market overall, it signals that AI is entering an era of extreme infrastructure, at a scale approaching a million accelerators, with each generated token supported by an increasingly sophisticated industrial architecture.

Frequently Asked Questions

What is Google Cloud A5X?

A5X is a new family of Google Cloud bare-metal instances based on NVIDIA Vera Rubin NVL72, designed for agentic AI workloads, massive inference, training, and physical AI applications.

How many GPUs can A5X scale to?

Google and NVIDIA mention up to 80,000 NVIDIA Rubin GPUs in a single data center and up to 960,000 GPUs in clusters across multiple locations.

Is A5X already available?

Not yet. Google states that A5X will be based on NVIDIA Vera Rubin NVL72 and will arrive when that platform becomes available later in 2026. For now, it is an announced capability of Google’s next-generation infrastructure rather than a deployed instance.

Why does Google use NVIDIA if it also has its own TPUs?

Because many customers require NVIDIA GPUs for compatibility, performance, and software ecosystems such as CUDA. Google combines its TPUs with NVIDIA GPUs to provide more options depending on the workload.