The race for artificial intelligence is no longer just about training large models. Inference, the phase where these models respond, reason, perform tasks, and power AI agents, has become the new strategic battleground for chip manufacturers, foundries, and memory providers. On this front, Samsung is attempting to capitalize on an unusual opportunity: regaining influence in Nvidia’s supply chain.

The catalyst is Groq’s LPU technology, which is specialized for low-latency inference. Although some industry analyses allude to a hidden or strategic acquisition, the agreement Groq officially announced was presented as a non-exclusive license for Nvidia to use its inference technology, with Groq founder Jonathan Ross, Sunny Madra, and other team members joining Nvidia. According to Groq’s own statement, it continues to operate as an independent company with Simon Edwards serving as CEO.

Samsung gains visibility with Groq 3 and AI inference

Samsung’s interest isn’t just in manufacturing a specific chip. The South Korean company aims to demonstrate that it can once again be relevant to Nvidia beyond HBM memory. At GTC 2026, Samsung emphasized its role as a partner to Nvidia in memory, advanced foundry, and packaging, and highlighted Jensen Huang’s mention of Samsung as a key collaborator in manufacturing Groq’s new LPU.

This move is significant because Nvidia has for years relied on TSMC to produce its most advanced GPUs. Routing a component of Nvidia’s future inference platform through Samsung Foundry doesn’t instantly shift industry dynamics, but it does open a crack in a long-standing relationship dominated by the Taiwanese foundry.

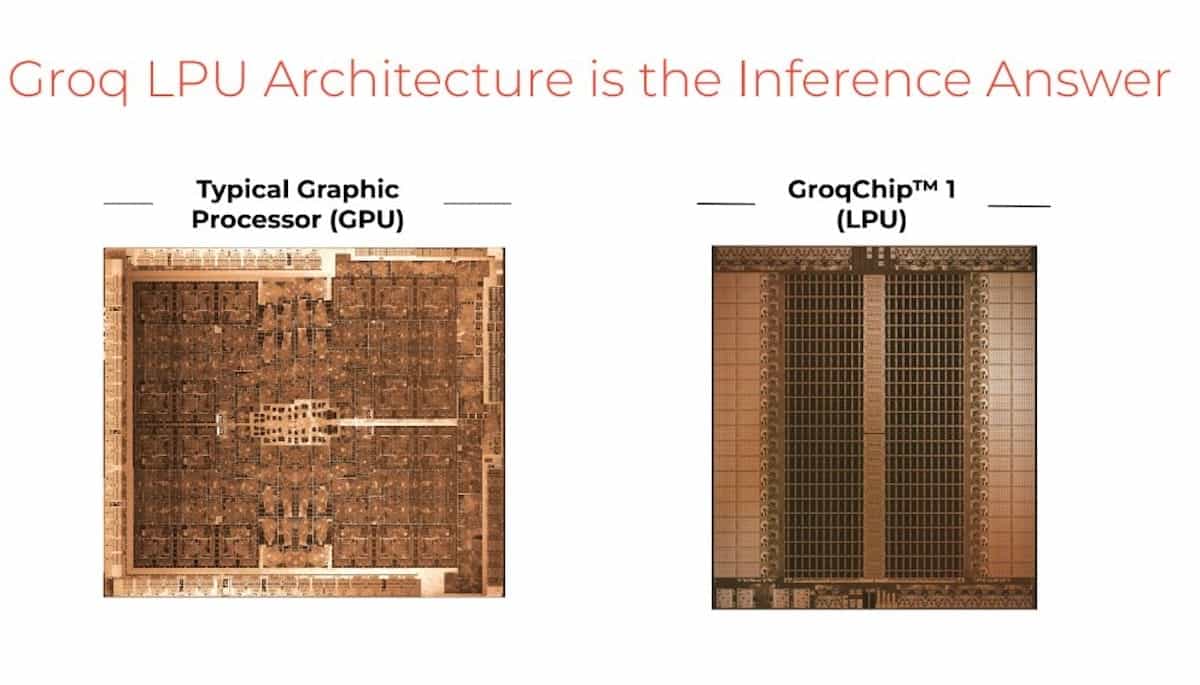

Industry sources indicate that Groq 3 will be manufactured by Samsung Foundry using a 4nm process. Unlike many AI GPUs, this chip is tailored for inference workloads and is based on an architecture leveraging SRAM instead of large stacks of HBM memory. This technical difference partly explains Nvidia’s interest: GPUs remain essential for training and many general tasks, but generative AI models and agents increasingly require accelerators capable of low-latency, high-performance execution.

The paradox is that Samsung seeks to strengthen its position with Nvidia by leveraging both its role in HBM and a new LPU that doesn’t depend on HBM in the same way training GPUs do. Its broader pitch to the industry is advanced memory, logic manufacturing, and packaging offered together as an integrated AI infrastructure solution.

Samsung’s advantage: HBM, foundry, and packaging

Samsung recognizes that high-bandwidth memory has become a critical component for AI. At GTC 2026, the company showcased HBM4E and spoke about its upcoming HBM5 architecture. It also highlighted packaging technologies like Hybrid Copper Bonding aimed at improving thermal management in high-performance environments.

This combination is key. Building large AI systems is no longer only a matter of designing the best chip; it depends on integrating GPUs, specialized accelerators, memory, interconnects, storage, networking, and cooling into increasingly dense platforms. In this context, Samsung aims to offer a more comprehensive proposal: not just wafers or memory, but a more integrated technology chain.

For Nvidia, having a secondary manufacturing partner in certain lines also makes strategic sense. While TSMC remains the dominant supplier for Nvidia’s most advanced chips, AI demand has strained production capacity, advanced packaging, and memory availability. Diversifying suppliers can improve negotiation leverage, reduce risks, and accelerate the availability of specific products.

However, Samsung still needs to demonstrate consistency. Securing an order tied to Groq 3 doesn’t mean replacing TSMC in the high-volume, high-value GPU market. Confidence in advanced processes, wafer yields, energy efficiency, packaging, and delivery capacity remains critical, and TSMC holds a very strong position in all of these areas.

TSMC isn’t standing still

The main narrative here is simple: Samsung aims to turn Groq’s LPU into a gateway for more business with Nvidia, while TSMC seeks to protect its central role in Nvidia’s supply chain. DigiTimes reports that TSMC is pushing to compete for future generations of LPUs, fueling speculation that Samsung’s initial advantage may not be guaranteed long-term.

TSMC’s interest makes sense. If inference and AI agents grow as major cloud providers expect, LPUs and other specialized accelerators could become a vital new category within data centers. They wouldn’t replace GPUs but would complement them, especially where latency, per-token cost, and energy efficiency are critical.

For Nvidia, integrating Groq’s technology serves both a defensive and an offensive purpose. Defensively, it prevents a specialized inference competitor from gaining too much ground in a diversifying market. Offensively, it allows Nvidia to expand its platform beyond traditional GPUs and adapt to scenarios where customers not only train models but also run millions of queries, agents, and automated workflows in real time.

The result could be a more heterogeneous architecture. Instead of a data center infrastructure focused almost solely on GPUs, future AI data centers might combine GPUs, CPUs, DPUs, inference accelerators, HBM memory, integrated SRAM, and ultra-low-latency networks. The competitive advantage won’t just be in the most powerful chip, but in the platform that best integrates all these elements.

An industry battle beyond Nvidia

The competition between Samsung and TSMC for Nvidia’s LPUs reflects a broader industry trend. AI is restructuring the semiconductor industry around three bottlenecks: advanced manufacturing capacity, high-performance memory, and packaging. Whoever controls these pieces will hold a strategic advantage in the next decade of digital infrastructure.

Samsung needs to rebuild credibility in advanced foundry and capitalize on its historical strength in memory. TSMC wants to keep its strategic customers from shifting too many orders to competitors. Meanwhile, Nvidia wields enormous power: it can allocate workloads among suppliers, incorporate external technologies, and use its volume as bargaining leverage.

Nonetheless, caution is advised. Current information suggests a significant collaboration between Nvidia, Groq, and Samsung, but it doesn’t confirm that Samsung will displace TSMC’s core role in Nvidia’s high-volume business. For now, the prudent view is that this is an open opportunity rather than a definitive leadership shift.

What’s clear is that inference has become the new battleground. While recent years focused on training ever larger models, the market is now shifting toward large-scale AI deployment in real-world applications. Response speed, operational cost, energy efficiency, and the ability to scale to millions of requests now matter most.

Samsung sees an opportunity to re-enter the spotlight. TSMC has no intention of ceding ground. Nvidia, as the key arbiter in this new AI phase, could be the main beneficiary of a competitive landscape that drives down costs, encourages diversity, and accelerates its own roadmap.

Frequently Asked Questions

What is an LPU and why is it important to Nvidia?

An LPU, or Language Processing Unit, is a specialized accelerator for inference tasks in language models. Its importance lies in delivering low-latency responses on sequential workloads, which are increasingly critical for chatbots, virtual assistants, and AI agents.

Has Nvidia acquired Groq?

Officially, Groq announced a non-exclusive inference technology licensing agreement with Nvidia. It also stated that founder Jonathan Ross, president Sunny Madra, and other team members would join Nvidia. Groq continues to operate independently.

Why is Samsung significant in Nvidia’s AI chips?

Samsung aims to strengthen its role as a provider of HBM memory, foundry services, and advanced packaging. Its involvement in manufacturing Groq’s LPU could help establish it as an alternative or complement to TSMC in certain supply chain areas.

Can Samsung replace TSMC as Nvidia’s main foundry?

No concrete evidence points to a complete replacement of TSMC. Samsung’s current opportunity appears focused on specific chips and on its capabilities in memory, foundry, and packaging. TSMC remains the primary partner for Nvidia’s most advanced products.