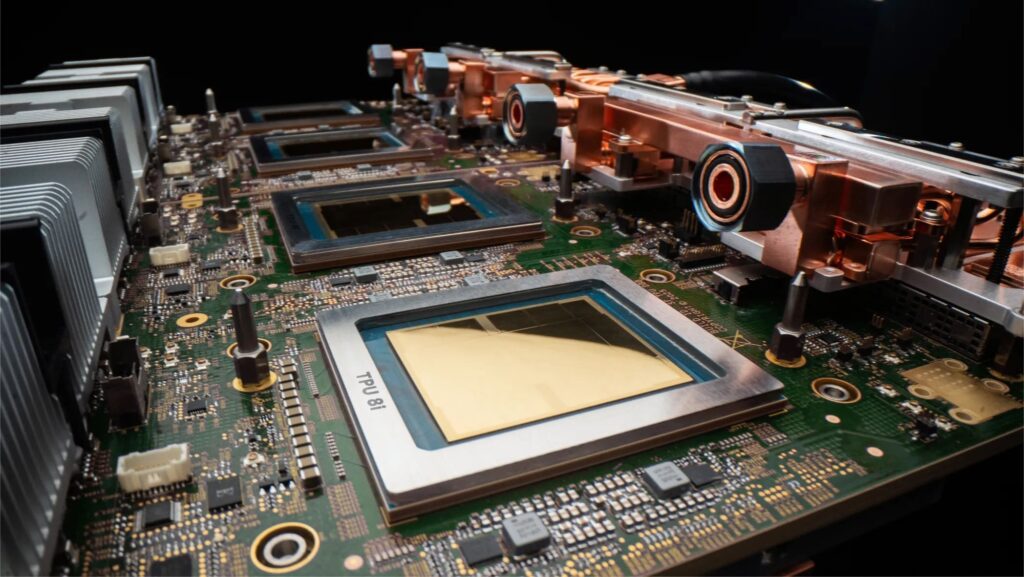

Google has announced the eighth generation of its Tensor Processing Units (TPUs) and, for the first time, has split the line into two distinct chips: TPU 8t, focused on model training, and TPU 8i, designed for low-latency inference. The company positions them as its response to a new phase of artificial intelligence, marked by agents that no longer just answer questions but also reason, chain steps, learn from their actions, and execute complex workflows in real time.

Google’s message is clear: agentic AI no longer fits well within a generic infrastructure. That’s why it has opted for two “purpose-built” architectures, tailored from the ground up for very different workloads. TPU 8t aims to reduce the development cycle of large models, while TPU 8i seeks to serve large-scale inference more efficiently with lower latency. Both chips will be generally available later this year within Google Cloud and will be part of its AI Hypercomputer platform.

This is not just a product catalog update. Google is trying to reinforce a long-standing idea: that the future of AI depends not only on the chip but on the integration of silicon, network, storage, software, cooling, and data centers. In this vein, TPU 8t and TPU 8i will for the first time use Axion processors, Google’s own Arm-based CPUs, as their hosts, and Google continues to emphasize liquid cooling and energy optimization at the system level.

Two TPUs for two distinct problems

The decision to separate training and inference reflects a profound market shift. A few years ago, much of the effort was focused on training ever larger foundation models. Now, the focus has expanded to serving those models in production, handling millions of queries, maintaining long contexts, and coordinating specialized agents working together. Google argues that these workloads have such different needs that using the same hardware for both is no longer justified.

TPU 8t is geared primarily toward large-scale training. Google claims that a single superpod can scale up to 9,600 chips, with two petabytes of shared HBM and double the chip-to-chip bandwidth of the previous generation. The company puts the superpod’s performance at 121 exaflops and states that the system offers nearly three times the performance per pod of earlier versions. It also promises storage access that is 10 times faster and targets more than 97% goodput, meaning the share of time spent on useful compute rather than lost to errors, waits, or restarts.
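The superpod figures above can be cross-checked with some quick arithmetic. The sketch below is illustrative only: it uses the 216 GB per-chip HBM figure from the comparison table, assumes decimal units (1 PB = 1,000,000 GB), and the 30-day run length is a hypothetical chosen for the example. The article does not state the numeric precision behind the 121-exaflop figure, so the per-chip number is nothing more than a simple division.

```python
# Figures quoted in the article for a TPU 8t superpod.
CHIPS_PER_SUPERPOD = 9_600
HBM_PER_CHIP_GB = 216        # per-chip HBM, from the comparison table
SUPERPOD_EXAFLOPS = 121
GOODPUT = 0.97               # Google's stated target is "more than 97%"


def superpod_hbm_pb(chips: int = CHIPS_PER_SUPERPOD,
                    hbm_gb: int = HBM_PER_CHIP_GB) -> float:
    """Total HBM in petabytes, assuming decimal units (1 PB = 1,000,000 GB)."""
    return chips * hbm_gb / 1_000_000


def per_chip_petaflops(exaflops: float = SUPERPOD_EXAFLOPS,
                       chips: int = CHIPS_PER_SUPERPOD) -> float:
    """Superpod throughput divided evenly across chips (1 exaflop = 1,000 petaflops)."""
    return exaflops * 1_000 / chips


def lost_hours(run_days: float, goodput: float = GOODPUT) -> float:
    """Hours of a training run NOT spent on useful compute at a given goodput."""
    return run_days * 24 * (1 - goodput)


if __name__ == "__main__":
    print(f"Shared HBM per superpod: {superpod_hbm_pb():.2f} PB")
    print(f"Implied throughput per chip: {per_chip_petaflops():.1f} petaflops")
    # Hypothetical 30-day training run, chosen only for illustration.
    print(f"Time lost over 30 days at 97% goodput: {lost_hours(30):.1f} hours")
```

Under these assumptions, 9,600 chips at 216 GB each come to roughly 2.07 PB, which is consistent with the "two petabytes" claim, and a 97% goodput still leaves about 21.6 hours of lost time in a 30-day run.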

TPU 8i, on the other hand, is engineered for inference, post-training, and reasoning. Here, Google has prioritized memory and latency. Each chip includes 288 GB of HBM and 384 MB of on-chip SRAM, three times more than the previous generation, supported by a new interconnect topology called Boardfly. This architecture connects up to 1,152 chips within a pod and cuts the maximum network diameter from 16 hops to 7, which matters for Mixture of Experts (MoE) models and other workloads where coordination among agents and specialized experts can become a bottleneck. Google states that TPU 8i delivers up to an 80% improvement in performance per dollar over Ironwood.
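To make "network diameter" concrete: it is the worst-case number of hops between any two chips. The sketch below measures it for a 3D torus using breadth-first search. The 8 x 12 x 12 shape is purely a hypothetical chosen because it contains 1,152 nodes and happens to have a 16-hop diameter; the article does not say which configuration the 16-hop baseline refers to, and it does not describe how Boardfly is wired, so only the torus side is modeled here.

```python
from collections import deque


def torus_diameter(dims):
    """Worst-case hop count in a wraparound torus, found by BFS from one node.

    Every node in a torus is equivalent by symmetry, so the farthest node
    from the origin gives the diameter of the whole network.
    """
    origin = tuple(0 for _ in dims)
    dist = {origin: 0}
    queue = deque([origin])
    while queue:
        node = queue.popleft()
        for axis, size in enumerate(dims):
            for step in (-1, 1):
                neighbor = list(node)
                # Wraparound link: moving past the edge re-enters on the other side.
                neighbor[axis] = (neighbor[axis] + step) % size
                neighbor = tuple(neighbor)
                if neighbor not in dist:
                    dist[neighbor] = dist[node] + 1
                    queue.append(neighbor)
    return max(dist.values())


# Hypothetical torus shape, NOT a published TPU configuration.
HYPOTHETICAL_DIMS = (8, 12, 12)

if __name__ == "__main__":
    nodes = HYPOTHETICAL_DIMS[0] * HYPOTHETICAL_DIMS[1] * HYPOTHETICAL_DIMS[2]
    print(f"{nodes} nodes, diameter {torus_diameter(HYPOTHETICAL_DIMS)} hops")
```

In a torus the diameter grows with the size of each dimension (half of each dimension, summed), which is why scaling up a pod lengthens the worst-case path. A topology that reaches 7 hops at the same scale would shorten that worst case by more than half.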

A race no longer won solely on FLOPS

Beyond the numbers, the announcement suggests a clear trend: the AI hardware battle is shifting from isolated chips to the entire system. Google emphasizes that it designed TPU 8t and TPU 8i in collaboration with Google DeepMind, with specifications driven by the real needs of current models. For example, the Boardfly topology was conceived for the communication demands of reasoning models; TPU 8i’s SRAM is optimized to better host the KV cache in production; and the Virgo network supports the parallelism requirements of training trillion-parameter models.
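For a rough sense of scale on the KV cache point, the standard sizing formula for a transformer is two vectors (key and value) per KV head, per layer, per token. The sketch below applies it to a made-up model: the layer count, head count, head dimension, and 2-byte values are all hypothetical and not taken from any Google or Gemini specification, and the 384 MB figure is read as MiB for convenience.

```python
def kv_cache_bytes_per_token(layers: int, kv_heads: int, head_dim: int,
                             bytes_per_value: int = 2) -> int:
    """Bytes of KV cache one token occupies.

    The leading factor of 2 accounts for storing both a key and a value
    vector for every KV head in every layer.
    """
    return 2 * layers * kv_heads * head_dim * bytes_per_value


def tokens_that_fit(capacity_bytes: int, bytes_per_token: int) -> int:
    """Whole tokens of KV cache that fit in a given memory capacity."""
    return capacity_bytes // bytes_per_token


# 384 MB of on-chip SRAM per TPU 8i, treated here as MiB (an assumption).
SRAM_BYTES = 384 * 1024 * 1024

# Hypothetical model shape, for illustration only.
HYPOTHETICAL_MODEL = {"layers": 32, "kv_heads": 8, "head_dim": 128}

if __name__ == "__main__":
    per_token = kv_cache_bytes_per_token(**HYPOTHETICAL_MODEL)
    print(f"KV cache per token: {per_token // 1024} KiB")
    print(f"Tokens fitting in SRAM: {tokens_that_fit(SRAM_BYTES, per_token)}")
```

For this hypothetical configuration each token takes 128 KiB, so about 3,072 tokens of context would fit in one chip’s SRAM. That is small next to the long contexts the article mentions, which suggests why tripling on-chip SRAM is presented alongside the 288 GB of HBM rather than as a replacement for it.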

Google also aims to make energy efficiency a key part of its commercial argument. The company claims TPU 8t and TPU 8i deliver up to twice the performance per watt of Ironwood, supported by a fourth-generation liquid cooling system. The chips also integrate connectivity and compute on the same chip, part of a system-wide optimization effort that, according to Google, has enabled its data centers to deliver six times more computing power per unit of electricity than five years ago.

This approach is intentional. AI now confronts very physical limits: energy, cooling, density, and operational costs. In this context, simply selling more compute power is no longer sufficient. Google aims to communicate that its advantage lies in controlling the entire stack, from host CPU to network, software, and data center design.

Comparison table: how TPU 8t and TPU 8i differ

| Feature | TPU 8t | TPU 8i |
|---|---|---|
| Main focus | Massive training | Inference, serving, reasoning |
| Maximum scale per system | 9,600 chips per superpod | 1,152 chips per pod |
| HBM memory per chip | 216 GB | 288 GB |
| On-chip SRAM | 128 MB | 384 MB |
| Network topology | 3D torus | Boardfly |
| Highlighted performance | 121 exaflops per superpod | +80% performance per dollar over Ironwood |
| Key improvement | ~3x more performance per pod | Lower latency and better inference efficiency |

Sources: Google Cloud and Google Blog.

A message also for Nvidia

Although Google does not frame this announcement as a direct attack on Nvidia, the competitive landscape is clear. TechCrunch and other US media highlight that this eighth-generation TPU reinforces Google Cloud’s position in a race where Nvidia continues to dominate much of the AI accelerator market. The difference is that Google has spent years deploying TPUs in its own services, including models like Gemini, and now aims to turn that internal expertise into a more aggressive offering for cloud customers.

However, Google is not selling a closed ecosystem. The company emphasizes that both systems natively support JAX, PyTorch, SGLang, and vLLM, along with bare-metal access, to make it easier to deploy existing models and workloads. At the same time, it maintains that TPU 8t and TPU 8i are not just chips but parts of a broader offering that includes storage, networking, software, and flexible consumption models within AI Hypercomputer.

Frequently Asked Questions

What exactly has Google announced?

Google unveiled its eighth-generation TPU with two differentiated chips: TPU 8t for model training and TPU 8i for inference, serving, and reasoning workloads.

What is the main difference between TPU 8t and TPU 8i?

TPU 8t is optimized for massive training, scaling up to 9,600 chips per superpod, while TPU 8i prioritizes memory, latency, and efficiency for inference with up to 1,152 chips per pod.

When will they be available?

Google has indicated that both chips will be generally available later this year on Google Cloud.

Why does Google talk about the “age of agents”?

Because, according to the company, new models no longer just respond but also execute multi-step workflows, reason, collaborate with each other, and handle long contexts, demanding a different infrastructure for training and inference.

via: blog.google