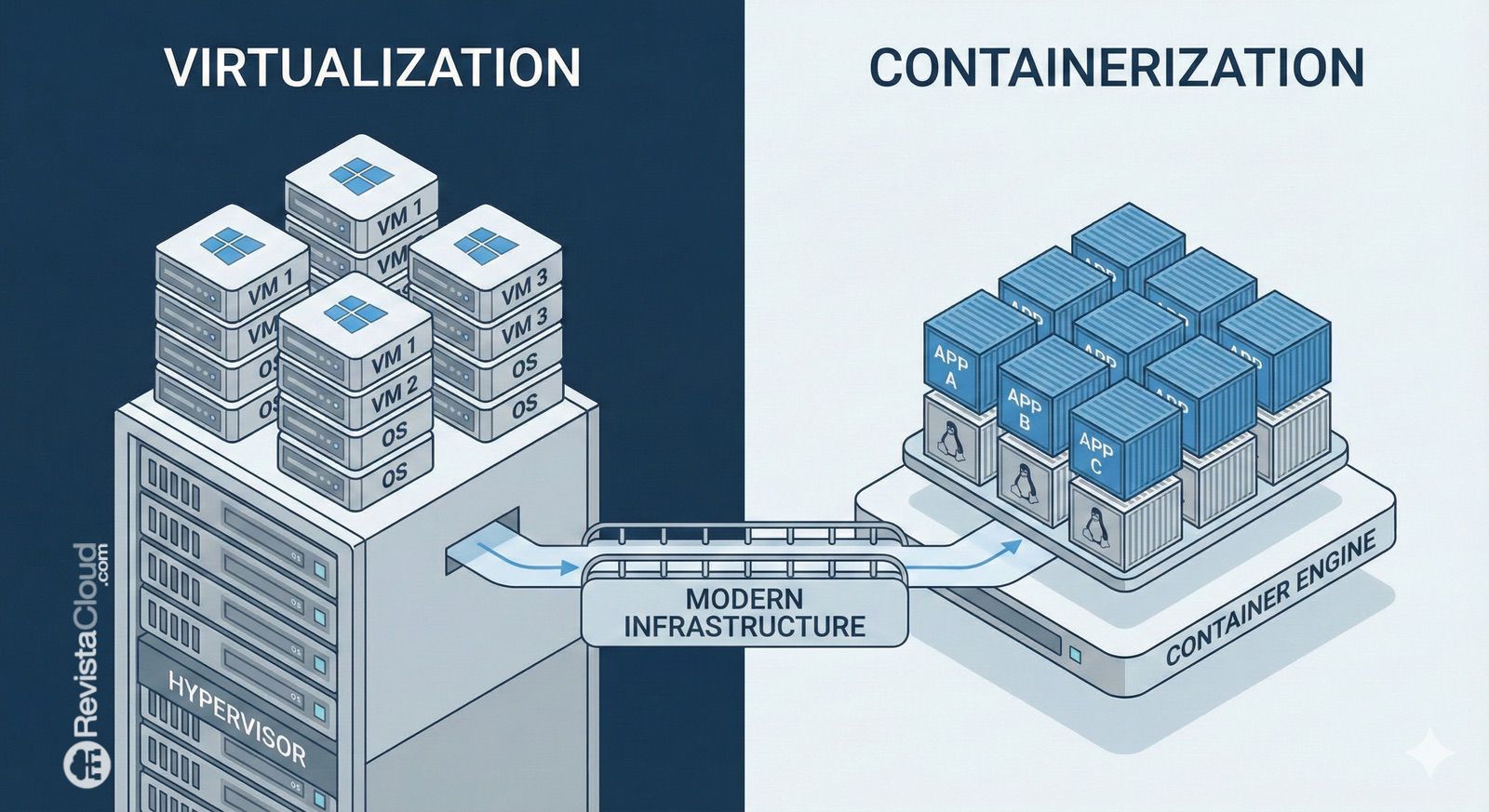

The comparison between virtualization and containerization often presents these technologies as if one were the natural replacement for the other. In practice, that’s not how it works. Each solves different problems, has clear advantages, and faces technical, operational, and economic limitations that are important to understand before choosing one for a project, an internal platform, or a development environment.

Traditional virtualization remains the foundation of many enterprise infrastructures because it allows running isolated workloads with their own operating system, kernel, and strong separation between environments. Containerization, in contrast, has become dominant in cloud-native development because containers are lightweight, deploy quickly, and make it easy to package applications with their dependencies. Today, the two coexist rather than compete: many modern architectures run containers on virtual machines or combine both approaches depending on the workload type.

What a Virtual Machine Really Offers

A virtual machine creates an isolated computing environment that emulates a complete system. It has its own virtual CPU, memory, network, storage, and operating system, all managed by a hypervisor running on physical hardware. This makes it possible to run different operating systems on the same server, maintain strong boundaries between workloads, and support legacy applications or environments that require a specific kernel.

This capability remains essential for organizations needing robust isolation, compatibility with legacy software, fine-grained OS control, or strict segmentation between clients, teams, or environments. It also explains why virtualization was the backbone of early cloud computing: it facilitated multitenancy, resource abstraction, and more efficient hardware utilization.

However, this model comes with costs. Each workload involves booting and maintaining a full OS. That consumes more resources, and it also adds tasks around provisioning, updates, networking, service exposure, persistent storage, backups, credentials, and observability. For many organizations, the hard part isn’t having a VM but managing all the surrounding infrastructure that makes it truly useful.
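The resource side of this cost can be illustrated with simple arithmetic. The Python sketch below compares total memory when every workload carries its own guest OS against a single shared OS. The function name `total_memory_mib` and every figure in it are made-up placeholders rather than measurements, and hypervisor overhead is ignored for simplicity.

```python
def total_memory_mib(workloads: int, app_mib: int,
                     per_workload_os_mib: int = 0,
                     shared_os_mib: int = 0) -> int:
    """Estimate total memory for a set of identical workloads.

    per_workload_os_mib: OS overhead paid once per workload (guest OS in a VM).
    shared_os_mib: OS overhead paid once for the whole host (shared kernel).
    All values are illustrative assumptions, not benchmarks.
    """
    return shared_os_mib + workloads * (app_mib + per_workload_os_mib)


if __name__ == "__main__":
    # Hypothetical numbers: 10 workloads, 256 MiB per app, 512 MiB per OS.
    vm_style = total_memory_mib(10, app_mib=256, per_workload_os_mib=512)
    shared_style = total_memory_mib(10, app_mib=256, shared_os_mib=512)
    print(f"one OS per workload: {vm_style} MiB")
    print(f"one shared OS:       {shared_style} MiB")
```

The gap grows with the number of workloads, but the calculation covers only memory. It says nothing about the provisioning, patching, and backup work listed above.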

Why Containers Changed Modern Development

Containers follow a different logic. Instead of virtualizing an entire machine, they package the application with libraries, frameworks, and dependencies, running it as an isolated process on a shared host OS. Because they do not require a guest OS per workload, containers are lighter, faster to start, and easier to scale.
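To make the shared-kernel point concrete, the minimal Python sketch below prints the kernel release visible to the current process. Run on a Linux host and then inside a container on that same host, it should report the same kernel release, because the container is a process on the host kernel. Inside a VM it reports the guest’s own kernel. The function name `kernel_identity` is illustrative and does not come from any tool discussed here.

```python
import os
import platform


def kernel_identity() -> dict:
    """Return the kernel details visible to this process."""
    return {
        "system": platform.system(),            # e.g. "Linux"
        "kernel_release": platform.release(),   # kernel version string
        "pid": os.getpid(),                     # process ID in this namespace
    }


if __name__ == "__main__":
    for key, value in kernel_identity().items():
        print(f"{key}: {value}")
```

One caveat: Docker Desktop on macOS or Windows runs containers inside a Linux VM, so the kernel reported there is that VM’s kernel, not the kernel of the laptop’s own operating system.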

This lightweight nature aligns well with practices like microservices, continuous integration, continuous delivery, and frequent deployments. It also enhances portability across environments: a well-crafted container can run on a developer’s laptop, in a data center, or in the cloud with consistent behavior. That’s why containerization has become central to cloud-native development and platforms like Kubernetes.

However, containers are not a universal solution. Sharing the host kernel imposes compatibility constraints and provides a different isolation model than VMs. Operational complexity also grows, because teams must manage images, registries, orchestration, overlay networks, secrets, persistence, and distributed observability. The savings in weight shift the operational work to another layer rather than eliminating it.

The Real Difference Is Not Just Performance

The technical debate often stops at the obvious: VMs are heavier and containers are faster. That’s true but incomplete. The real choice depends on more specific questions.

If you need to run multiple operating systems, enforce stronger isolation, support legacy software, or give each workload a complete environment, virtualization still makes a lot of sense. If your goal is rapid iteration, deploying small services, packaging modern apps, or working with automated pipelines, containers are generally more suitable. In many cases, the most reasonable architecture combines both models. Red Hat explains that VMs and containers are mature technologies that can coexist and meet different needs within the same platform.

This nuance is important because part of the market promotes the idea that everything should become a container or, conversely, that VMs still solve every need. Neither claim is entirely accurate on its own.

Where exe.dev Positions Itself

Against this backdrop, exe.dev emerges as a service that aims to simplify the use of virtual machines for developers. The company describes itself as a subscription service offering virtual machines with persistent disks “quickly and hassle-free.” It also states that these machines are accessible immediately and can be exposed via HTTPS with secure default settings. Its documentation adds that the service’s API uses SSH and that web access works through HTTPS/TLS termination at a proxy that forwards traffic to the VM, instead of assigning a public IP to each instance.

The company seeks to solve a common challenge for developers: when opting for a VM for better isolation or flexibility, the hard part often isn’t starting the VM but managing all the extra layers around it. According to exe.dev, its approach offers persistent VMs accessible via SSH or browser, suitable for running code agents with minimal oversight and limited access to user data. The company also emphasizes that persistence is a deliberate choice, more akin to using a remote laptop than to ephemeral or “serverless” infrastructure.

This approach resonates at a moment when the discussion extends beyond VMs versus containers to how AI agents, remote development environments, sandboxes, and temporary workloads that need some level of isolation are managed. In these scenarios, VMs regain appeal over lighter containers, which offer less robust separation between environments.

What a Tech Team Should Really Ask Itself

Before choosing virtualization, containers, or services like exe.dev, it’s wise to ground the debate in operational questions.

If strong isolation, OS compatibility, and environment control are priorities, a VM may still be the best option. If fast delivery, density, scaling, and standardized deployments matter more, containers usually fit better. If the real need is for VMs that are useful without the complexity of a traditional cloud account, products like exe.dev aim to fill that gap. Their offerings should still be evaluated like any managed service: what they simplify, what they abstract, and what dependencies they introduce.
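These questions can be written down as a small checklist. The Python sketch below is one hypothetical way to encode them. The `Workload` fields, the `recommend` function, and the rule that mixed signals suggest combining both models are illustrative assumptions, not a method taken from Red Hat, exe.dev, or any other vendor.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    """Hypothetical checklist of the operational questions above."""
    needs_own_kernel: bool = False        # different OS or a specific kernel
    needs_strong_isolation: bool = False  # strict separation between tenants
    legacy_software: bool = False         # software tied to a full OS
    frequent_deploys: bool = False        # rapid iteration, CI/CD pipelines
    needs_high_density: bool = False      # many small services per host


def recommend(w: Workload) -> str:
    """Return a rough starting point, not a final architecture decision."""
    vm_signals = any([w.needs_own_kernel, w.needs_strong_isolation,
                      w.legacy_software])
    container_signals = any([w.frequent_deploys, w.needs_high_density])
    if vm_signals and container_signals:
        return "containers on VMs"
    if vm_signals:
        return "vm"
    if container_signals:
        return "container"
    return "either"


if __name__ == "__main__":
    print(recommend(Workload(legacy_software=True)))
    print(recommend(Workload(frequent_deploys=True)))
    print(recommend(Workload(needs_strong_isolation=True,
                             frequent_deploys=True)))
```

A real evaluation would also weigh persistence, security requirements, and the time the team can spend on infrastructure, none of which reduce cleanly to booleans.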

Ultimately, the best decision doesn’t come from asserting that “containers are the future” or that “VMs isolate better.” It depends on understanding what workload you want to run, the level of control you need, how you’ll handle persistence and security, and how much time the team plans to dedicate to managing the infrastructure.

Frequently Asked Questions

What is the main difference between a virtual machine and a container?

A virtual machine runs a complete operating system with its own kernel, while a container shares the host kernel and runs the application as an isolated process.

Do containers replace virtual machines?

Not entirely. They are often used together. VMs remain valuable for strong isolation, OS compatibility, and legacy workloads, whereas containers excel in rapid deployments and cloud-native applications.

What does exe.dev offer according to its documentation?

It describes a subscription service offering virtual machines with persistent disks, quick access, HTTPS exposure, and an SSH-based API.

When does it make sense to use VMs instead of containers for development?

When the work requires a more complete, isolated environment, simple persistence, or fewer OS and kernel compatibility restrictions.