NVIDIA’s next major transition in AI accelerators may not progress as quickly as the market expected. Industry reports indicate that the ramp of Vera Rubin, the company’s new platform for AI data centers, is facing more difficulties than anticipated. That could ripple through the supply chain, especially in memory.

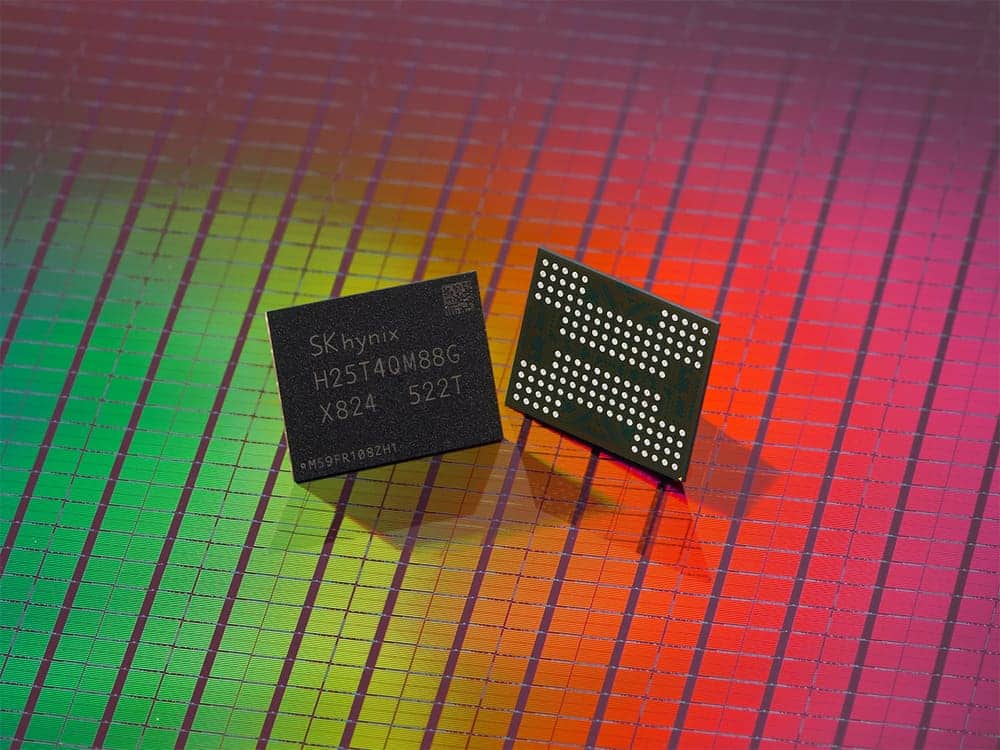

The name most associated with this situation is SK hynix, currently one of the leading players in the HBM market. According to industry sources published in Asia, the South Korean manufacturer is considering reducing its planned shipments of HBM4 to NVIDIA by between 20% and 30% in 2026. Neither NVIDIA nor SK hynix has publicly confirmed this adjustment, so for now it should be treated as an industry rumor rather than a finalized decision. Nonetheless, the fact that the possibility is gaining traction says a lot about the current state of the AI memory market.

Rubin is still on track, but with more friction than expected

What is confirmed is that NVIDIA introduced Rubin as its flagship platform for the next phase of AI infrastructure, and that the company stated in early 2026 that Rubin was in production, with partner systems scheduled for deployment in the second half of this year. The roadmap, on paper, is ambitious: higher performance, new architecture, increased memory capacity, and a clear evolution over Blackwell.

However, there is often a significant gap between official announcements and industrial reality. In Rubin’s case, that gap appears to be widening. TrendForce warned last week that Rubin’s share of NVIDIA’s high-end GPU shipments in 2026 might drop from the previously forecasted 29% to 22%. The firm attributes this adjustment to several potential hurdles: the time required to validate HBM4, the switch from ConnectX-8 to ConnectX-9 interconnects, higher power consumption, and the need to optimize advanced liquid cooling systems.

This diagnosis is relevant because it aligns with the SK hynix rumor. If Rubin ramps more slowly, demand for the HBM4 tied to that platform will also grow more slowly than expected. This does not imply a cooling of the entire AI memory market but rather a change in pace and product mix within the ongoing industry expansion cycle.

SK hynix had a very strong 2026 planned for HBM4

The situation is especially noteworthy because SK hynix had been preparing for an aggressive HBM4 expansion for months. In its third-quarter 2025 results, the company confirmed that HBM4 had been finalized in September and had entered mass production, with shipments to begin in the fourth quarter of 2025 and a large-scale commercial rollout expected in 2026. The company also indicated that it had already secured agreements with key customers for HBM supply in the upcoming fiscal year.

More recently, at its annual meeting in March 2026, SK hynix reiterated expectations of increasing demand not only for HBM but also for DRAM for AI and NAND linked to infrastructure deployment. The message was clear: 2026 was slated to be another landmark year for AI-related memory.

Therefore, if part of the HBM4 volume destined for NVIDIA is now moderating, this is not just a tactical correction. It signals that the transition between generations of accelerators and memory may not be as linear as it seemed three months ago.

Blackwell gains time and HBM3E remains very relevant

The other side of this story is Blackwell. If Rubin slows down in 2026, Blackwell will have more room in the market than initially expected. TrendForce estimates that Blackwell’s share of NVIDIA’s high-end GPU shipments will rise from the previously forecasted 61% to 71% this year. In effect, the current generation will carry an even larger portion of NVIDIA’s growth in AI.
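To put the two TrendForce revisions cited in this article side by side, the short Python sketch below computes each change in percentage points and in relative terms. The function name `share_shift` is purely illustrative, and the inputs are only the figures quoted here (29% to 22% for Rubin, 61% to 71% for Blackwell). These are shares of shipments, not unit volumes, so the result is a rough yardstick rather than a forecast.

```python
def share_shift(old_pct: float, new_pct: float) -> tuple[float, float]:
    """Return (percentage-point change, relative change in %) between two shares."""
    point_change = new_pct - old_pct
    relative_change = point_change / old_pct * 100
    return point_change, relative_change


# Shares of NVIDIA's 2026 high-end GPU shipments as quoted in this article
# (previous forecast, revised forecast). No other data is assumed.
forecasts = {"Rubin": (29.0, 22.0), "Blackwell": (61.0, 71.0)}

for platform, (old, new) in forecasts.items():
    points, relative = share_shift(old, new)
    print(f"{platform}: {points:+.0f} points, {relative:+.1f}% relative")

# Rubin: -7 points, -24.1% relative
# Blackwell: +10 points, +16.4% relative
```

Rubin's relative cut of roughly 24% happens to fall inside the rumored 20% to 30% reduction in HBM4 shipments, although the two figures measure different things and the comparison should be read loosely.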

This directly impacts memory manufacturers because HBM3E will remain critically important for longer. In other words, a potential slowdown in HBM4 shipments to Rubin does not necessarily mean a proportional business decline for SK hynix, but rather a shift toward higher volumes of HBM3E and increased pressure on the broader server memory market.

This nuance is crucial to avoid alarmist interpretations. The issue does not seem to be a collapse in AI demand but rather a temporary mismatch between the pace of NVIDIA’s roadmap and the supply chain’s readiness for the next generation. In a market where each technological leap requires new electrical, thermal, interconnect, and packaging validations, such frictions can delay revenue significantly without fundamentally altering the cycle’s trajectory.

Samsung and Micron await their opportunity

The potential slowdown of Rubin also introduces a competitive angle. Samsung and Micron have been working for months to secure their position in HBM4. Reuters reported earlier this year that Samsung was preparing HBM4 production and that both Samsung and Micron aimed to leverage the new memory generation to reduce SK hynix’s advantage with NVIDIA. Subsequently, Reuters noted that Samsung had begun moving HBM4 toward customers, with SK hynix and Micron also discussing HBM4 production plans.

This does not mean SK hynix will lose its leadership overnight. It remains the strongest name in HBM and has capitalized heavily on NVIDIA’s recent boom. However, any delays in Rubin’s rollout could temporarily rearrange the competitive landscape. A more gradual transition to HBM4 gives rivals more time to complete validation, improve performance, and secure design wins in the next wave of deployment.

What’s really at stake

The most interesting aspect of this situation isn’t merely whether SK hynix reduces shipments by a certain percentage. It’s what this reveals about the new AI economy. Throughout 2024 and 2025, the industry grew accustomed to thinking that the entire AI value chain would expand almost automatically: more GPUs, more HBM, more racks, more data centers. But in 2026, a more realistic view is emerging: even in a demand-driven market, technological transitions still face significant bottlenecks.

Rubin requires not only better chips but also validated HBM4, new interconnects, increased energy capacity, improved cooling, and a much more complex level of integration. When one of these elements faces delays, it can trigger a domino effect affecting memory, packaging, and revenue forecasts. This explains why the SK hynix rumor matters so much, even though it has not been officially confirmed.

In the short term, the most prudent interpretation might be this: NVIDIA continues with Rubin, but with increased schedule risk; Blackwell will remain dominant in 2026; and the transition to HBM4 could be more gradual than initially expected. For SK hynix, this does not necessarily point to a fundamental problem, but it could slow a transition that had looked set to proceed with minimal resistance.

Frequently Asked Questions

Has NVIDIA confirmed delays in the Rubin platform?

No. There is no official confirmation of schedule delays, but research firms such as TrendForce have warned about ramp-up risks and a potentially smaller Rubin share of high-end GPU shipments in 2026.

Has SK hynix officially announced a reduction in HBM4 shipments to NVIDIA?

No. The potential 20%-30% reduction is based on industry sources in Asia and has not been publicly confirmed by SK hynix or NVIDIA at this time.

What challenges is Rubin facing in 2026?

According to TrendForce, the main challenges include validating HBM4, transitioning from ConnectX-8 to ConnectX-9 interconnects, higher power consumption, and the need to optimize advanced liquid cooling for the new platform.

Who benefits if Rubin is delayed?

In the short term, Blackwell gains more market share, and HBM3E remains significant for longer. It also gives Samsung and Micron more time to compete for HBM4 validation and deployment.