For months, NVIDIA has been presenting Vera Rubin as its next major platform for the era of AI agents, and officially the schedule remains on track: the company says Vera Rubin NVL72 is now in full production and that systems based on this generation will start reaching customers through its partners in the second half of 2026. NVIDIA has also said the new architecture is backed by a global ecosystem of more than 80 partners and a diversified supply chain to bring its rack-scale systems to market.

However, beneath this public roadmap, a messier and far more revealing picture is emerging of how AI infrastructure is actually built today. A recent DIGITIMES report, citing sources in the passive component supply chain, suggests that the design of the Vera Rubin compute tray may not be fully finalized, even though the platform is targeting production in the third quarter. According to the report, the revisions are driven by a more aggressive supplier-diversification strategy and a push to eliminate single-source dependencies for critical parts. In other words, NVIDIA is not only refining a technical design; it is also reshaping how it intends to manufacture and supply its next-generation systems.

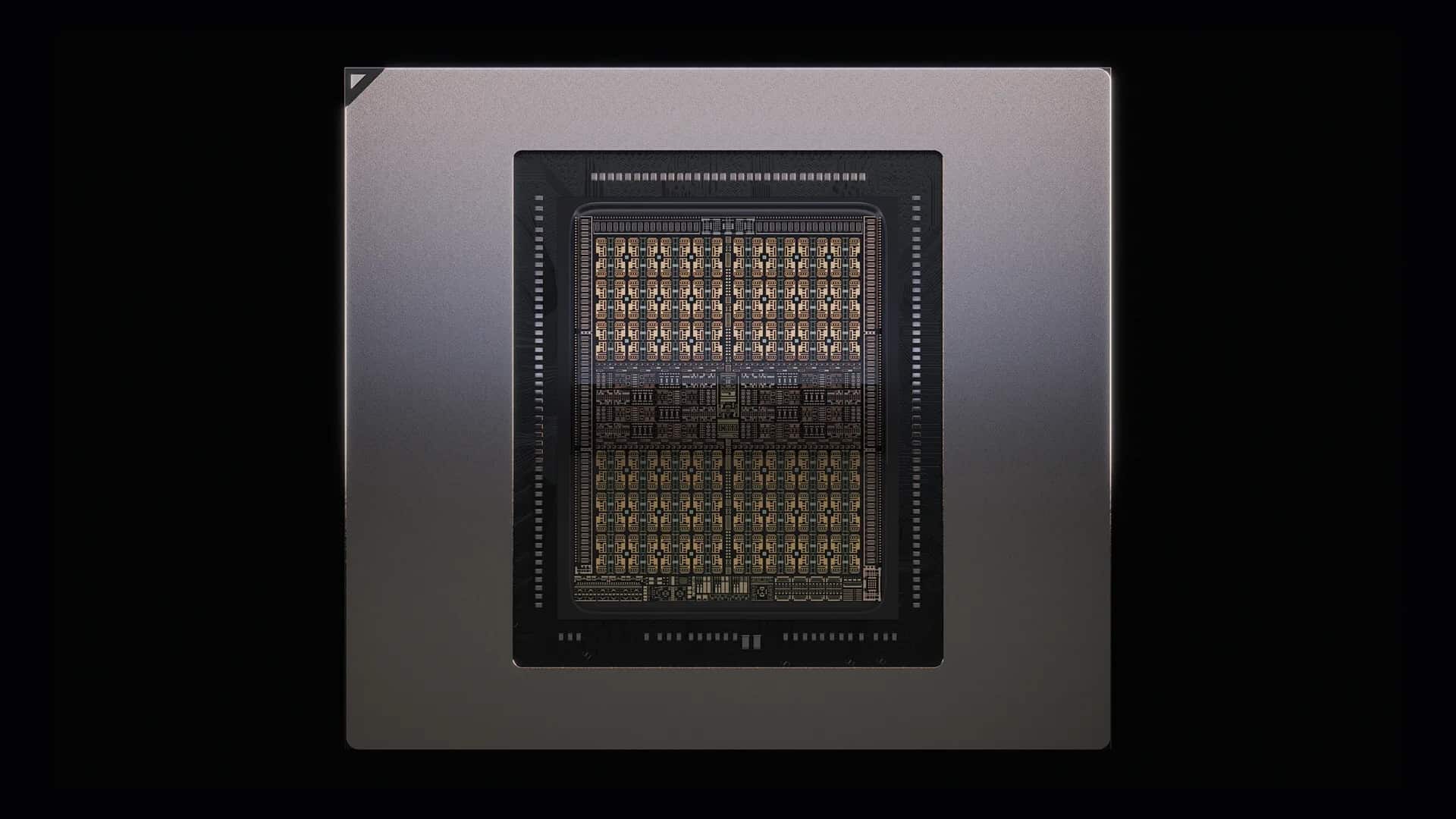

This nuance matters more than it might seem. Vera Rubin NVL72 is not an ordinary server but a highly complex platform. According to NVIDIA, each rack integrates 72 Rubin GPUs, 36 Vera CPUs, 18 compute trays, and 9 NVLink switching modules, totaling around 1.3 million components. The company also says the Vera Rubin compute trays have been completely redesigned compared to Blackwell, with an internal architecture intended to speed up assembly and simplify maintenance and servicing. When a system of this size is still changing parts of its bill of materials (BOM) before deployment, the adjustment is not cosmetic: it affects timelines, validation, electrical performance, and the ability to ramp production smoothly.

The problem is no longer just designing the best rack

The most interesting reading of this potential revision is not the risk of a delay but the shift in priorities it reveals. For years, discussions about large AI systems have centered on GPUs, HBM memory, and interconnects. As the market matures, however, supply chain resilience is becoming a requirement almost as critical as raw performance. NVIDIA implicitly acknowledges this by emphasizing its global partner network and its integration across compute, networking, storage, power, and cooling to maximize availability and shorten time-to-market.

In this context, another detail from the same report stands out: Panasonic’s SP-CAP, a family of aluminum-polymer capacitors widely used in demanding applications, was apparently not adopted in the Vera Rubin compute tray after all. Panasonic publicly promotes SP-CAP as suitable for sustained high-load conditions in AI servers, switches, routers, and base stations, and even recommends its long-life series for such environments. But that specialization does not address the concern NVIDIA appears most focused on: supply concentration. If a specific component ties a design to just one or a few suppliers, its technical advantages may be outweighed by the industrial risk.

The alternative gaining ground, according to these reports, is high-capacitance multilayer ceramic capacitors (MLCCs): an unglamorous component to most people, but an increasingly strategic one in AI power electronics. The market is showing clear signs of strain. Samsung Electro-Mechanics says AI servers use 10 to 15 times more MLCCs than general-purpose servers, with demand shifting toward ultra-high-capacitance and high-voltage parts. Murata published a technical guide this year on optimizing power delivery in AI servers and stresses that stable power supply is now a primary challenge for next-generation data centers.

Capacitors also enter the AI war

What used to be treated as a secondary engineering concern is becoming an economic and strategic variable. At its fiscal third-quarter earnings call in 2026, Murata said demand from AI servers is “very robust,” that in this segment the ability to support customers now outweighs price considerations, and that capacity utilization is running between 90% and 95%. The company acknowledged that in 2026 the key question will be how much it can produce and whether supply can keep up with demand, and it confirmed that capacity shortages or delays are a real possibility.

This backdrop explains why a component change in Vera Rubin should not be dismissed as a manufacturing anecdote. As AI platforms grow denser, power electronics become critical, and MLCCs shift from invisible parts to sensitive cost and supply items. DIGITIMES warned as early as December 2025 that MLCCs were becoming one of the largest contributors to AI server BOM costs, second only to GPUs and memory. In 2026, TrendForce has reported a tightening market, with potential price increases and mounting pressure on manufacturers such as Murata and Samsung Electro-Mechanics.

None of this necessarily means Vera Rubin will face a significant or visible delay. NVIDIA continues to guide for availability in the second half of the year, and nothing in its public roadmap currently points to an official schedule change. It does, however, paint a less comfortable picture: the next big AI platform depends not only on advanced chips and headline announcements but also on thousands of discrete decisions about who supplies what, with what margin, and how quickly they can respond if a part fails or becomes a bottleneck.

In other words, Vera Rubin is testing not only NVIDIA’s ability to keep scaling performance but also its capacity to industrialize increasingly complex systems without depending too heavily on narrow supply chains. If the price of that resilience is redesigning the system down to the last detail, the market may well accept it. In the gigawatt-scale AI era, supply chain diversification is no longer just a procurement issue; it is becoming part of product design.

Frequently Asked Questions

Has NVIDIA confirmed a delay in Vera Rubin due to changes in the compute tray?

No. NVIDIA officially states that Vera Rubin NVL72 is in production, with products based on this platform expected in the second half of 2026. What exists so far are supply chain reports pointing to design revisions and possible adjustments to some shipments.

Why are MLCCs so important in an AI server?

Because they help stabilize power delivery in systems with very high consumption and extreme compute density. Samsung Electro-Mechanics reports that AI servers use 10 to 15 times more MLCCs than conventional servers, and Murata is focusing part of its technical strategy specifically on this power delivery challenge.

What makes Vera Rubin different from previous generations?

NVIDIA describes it as a fully redesigned rack-scale system, with 72 Rubin GPUs, 36 Vera CPUs, 18 compute trays, and 9 NVLink switches per rack, plus an internal architecture aimed at simplifying assembly and servicing compared to Blackwell.

Can supplier diversification affect an AI platform’s schedule?

Yes. Swapping components or opening a design to multiple suppliers can improve resilience over the medium term, but it also requires validation, adjustments, and BOM revisions that can strain timelines before mass deployment. That is precisely the scenario the supply chain reports on Vera Rubin describe.

via: Jukan