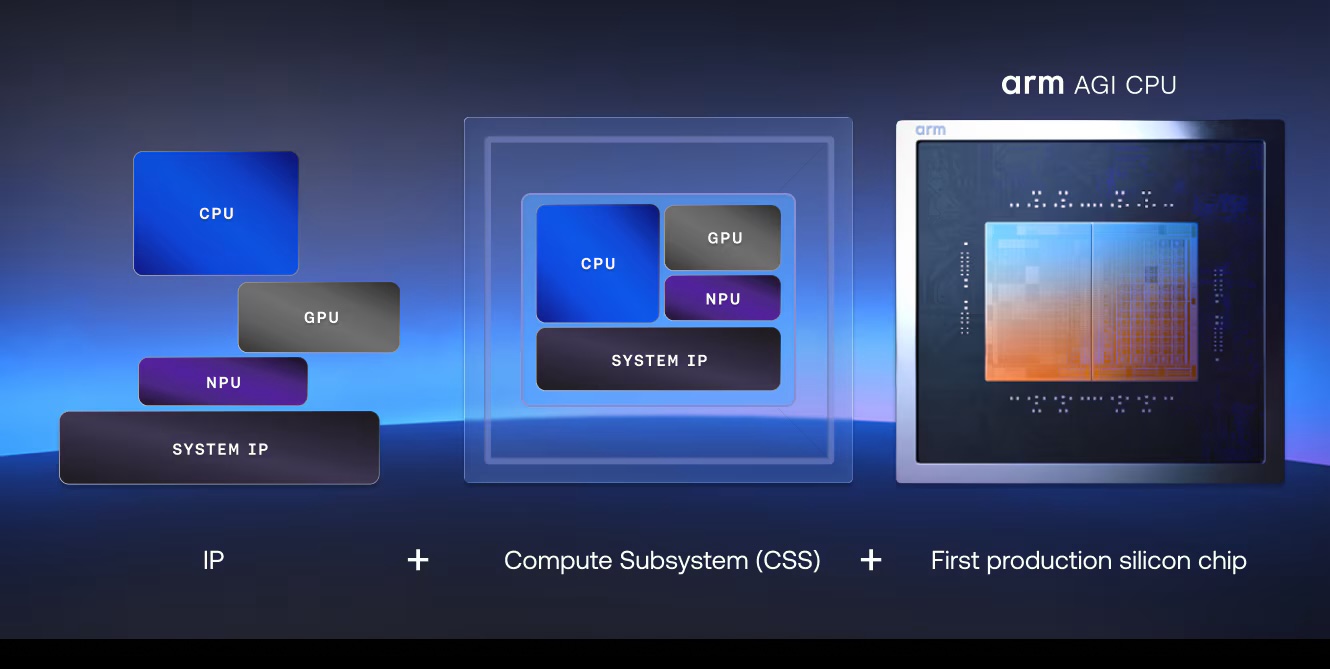

Arm is no longer just the company that designs architectures for others. For the first time, it is competing with a product of its own in one of the industry’s hottest areas: AI infrastructure. The British company has announced the Arm AGI CPU, its first in-house production processor for data centers, aimed at agentic AI workloads. The move changes its traditional role in the semiconductor market and reshapes the competitive landscape. According to Arm, the chip offers up to 136 Neoverse V3 cores per CPU, a TDP of 300 watts, and a density of up to 8,160 cores per rack in 1U air-cooled servers and more than 45,000 with liquid cooling. The company also claims more than twice the per-rack performance of x86 platforms, though that comparison rests on the manufacturer’s own estimates.
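
To put those density figures in perspective, here is a rough back-of-the-envelope sketch in Python. It assumes every socket is fully populated with 136 cores and treats "over 45,000" as a lower bound. The power figure sums CPU TDP only and ignores memory, networking, storage, and cooling overhead, so it is an illustration rather than a rack power budget published by Arm. The function names are hypothetical.

```python
# Figures quoted by Arm in the announcement.
CORES_PER_CPU = 136
TDP_WATTS = 300
AIR_COOLED_CORES_PER_RACK = 8_160
LIQUID_COOLED_CORES_PER_RACK = 45_000  # "over 45,000": treated as a lower bound


def cpus_per_rack(cores_per_rack: int, cores_per_cpu: int = CORES_PER_CPU) -> float:
    """Implied CPU count per rack, assuming every CPU has the full core count."""
    return cores_per_rack / cores_per_cpu


def cpu_tdp_kw(cpu_count: float, tdp_watts: int = TDP_WATTS) -> float:
    """Summed CPU TDP in kilowatts (CPUs only, no other rack components)."""
    return cpu_count * tdp_watts / 1000


air_cpus = cpus_per_rack(AIR_COOLED_CORES_PER_RACK)
liquid_cpus = cpus_per_rack(LIQUID_COOLED_CORES_PER_RACK)

print(f"Air-cooled:    {air_cpus:.0f} CPUs, {cpu_tdp_kw(air_cpus):.1f} kW of CPU TDP")
print(f"Liquid-cooled: {liquid_cpus:.0f}+ CPUs, {cpu_tdp_kw(liquid_cpus):.1f}+ kW of CPU TDP")
```

Under these assumptions, the air-cooled figure works out to exactly 60 CPUs per rack and 18 kW of CPU TDP, while the liquid-cooled figure implies more than 330 CPUs and roughly 99 kW of CPU TDP.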

The significance of this announcement lies not just in the technical specifications but in the timing. The AI race is no longer fought solely on GPUs. As models move from training to running as agents that reason, chain tasks, move data, and coordinate multiple services, the CPU regains strategic importance within the data center. Arm aims to occupy precisely this space: the CPU that orchestrates, coordinates, and sustains the infrastructure layer enabling large-scale AI. Meta is named as the main partner and co-developer, and Arm also cites OpenAI, Cloudflare, SAP, Cerebras, F5, and SK Telecom among its primary commercial supporters.

Arm’s move isn’t just against Intel

The immediate interpretation might be that Arm seeks to attack Intel and AMD in their natural territory. To some extent, that’s true. But the scope of the announcement is broader. Arm isn’t just entering the competition against traditional x86. It is also competing within its own ecosystem, where AWS, Google, and Microsoft are already designing their own cloud processors, and where NVIDIA has long been pushing its Grace architecture as an ideal complement to its accelerated AI platforms. The difference is that Arm is presenting neither a captive chip for its own cloud nor an internal design for proprietary use: it is trying to turn what has mainly been an advantage reserved for hyperscalers into a commercial product for third parties.

This puts the Arm AGI CPU in an unusual position. Apple proved years ago with Apple Silicon that a well-integrated Arm architecture can compete with, and even outperform, many x86 designs on performance per watt in personal computers and workstations. The M4, built on second-generation 3-nanometer technology, continues this efficiency trend and highlights the increasing importance of local AI on devices. However, Apple doesn’t position itself as a direct rival: its strategy remains vertical, closed, and focused on its own products such as the Mac and iPad, not on selling CPUs for third-party data centers. In this sense, Apple serves more as proof of Arm’s maturity than as a direct competitor to the AGI CPU.

NVIDIA, Intel, and AMD: Three very different rivals

Looking more closely at the AI market, the most uncomfortable rival for Arm is likely not Apple but NVIDIA. The reason is straightforward: NVIDIA isn’t competing with just a CPU but with a complete platform. Grace, its Arm CPU for data centers, is already positioned as the backbone of the next generation of AI infrastructure and, according to the company, offers up to twice the energy efficiency of traditional CPUs, with 72 Arm v9 cores, LPDDR5X memory, and close integration with GPUs via NVLink-C2C. Additionally, NVIDIA is already shaping Vera as the next-generation architecture designed for agentic AI systems. Against this backdrop, Arm offers a different approach: although it doesn’t sell a full stack of GPU, networking, and software, it aims to deliver a CPU optimized for density, efficiency, and orchestration tasks, supported by a broader and more open ecosystem.

Meanwhile, Intel continues to champion the traditional x86 position in data centers. Its Xeon 6 family relies on a dual strategy with P-cores and E-cores, emphasizing flexibility to handle a broad range of workloads—from cloud and analytics to AI, edge, and networking. The company highlights configurations with up to 144 cores per socket, focusing on performance-per-watt and compatibility with existing enterprise ecosystems. That remains its greatest strength: less about winning the headline war and more about being the most convenient choice for thousands of clients already operating on x86-based software, tools, and workflows. Arm aims to target exactly where Intel is most vulnerable: density, power consumption, and scalability for AI infrastructure. But Intel retains a significant advantage—an enormous installed base and substantial enterprise inertia that’s hard to disrupt.

AMD has been the most adept of the x86 vendors at navigating this transition. Its 5th Gen EPYC processors reach up to 192 cores with 12 DDR5 channels and a strongly cloud- and AI-focused pitch. AMD markets them as CPUs that can accelerate inference, host GPU-based systems, and deliver high density, all while maintaining x86 compatibility. The company claims that its EPYC 9005 series has the highest core count among x86 server processors today. This makes AMD arguably the most formidable rival to Arm in general-purpose servers and in much of the AI-relevant infrastructure. The key difference is that Arm’s message is more surgical: not a CPU for everything, but specifically a CPU for agentic data centers.

The real competition: Arm vs. its own progeny

There’s another less visible but equally critical battleground: the Arm-based processors that cloud providers already deploy. AWS Graviton4, Google Axion, and Azure Cobalt 100 are evidence that the Arm architecture has secured a structural foothold in cloud computing. AWS claims Graviton4 is its most powerful and efficient processor yet, and it continues expanding its instance families based on this design. Google markets Axion as its custom Arm CPU for general cloud compute, while Microsoft runs Cobalt 100 in dozens of regions and presents it as a proprietary processor for cloud services. In short: Arm arrived late to the “data center Arm” game, but it arrived just in time to package that logic for the broader market, serving those who can’t design a Graviton, Axion, or Cobalt but want something similar.

Alongside these developments, another emerging technology warrants attention: RISC-V. Proponents of its open ISA argue that an open architecture can become a compelling foundation for AI systems, offering benefits in flexibility, control, and cost. According to RISC-V International’s annual report, the first data-center-oriented RISC-V developments are expected to materialize by 2026. For now, RISC-V remains far from the commercial maturity and software ecosystem already established by Arm, x86, or NVIDIA’s full-stack AI platform. Rather than an immediate competitor to the Arm AGI CPU, it is better seen as background pressure: a reminder that the next big battle will be not only over selling chips but over controlling the architecture that will underpin computing in the coming decade.

The key takeaway: Arm has not just launched another processor; it has crossed a historic threshold. In a market where Intel retains its compatibility advantage, AMD emphasizes density and performance in x86, NVIDIA leads with its accelerated stack, and Apple demonstrates how well Arm can perform when one company controls both hardware and software, the British company has decided to move from ecosystem referee to direct player. In the era of agentic AI, this could prove far more consequential than it appears.

Frequently Asked Questions

What is the Arm AGI CPU, and why is it important for AI?

It is the first production processor designed directly by Arm for data centers, aimed at agentic AI workloads where the CPU orchestrates tasks, data movement, and services around accelerators and models.

Does the Arm AGI CPU directly compete with Apple Silicon?

Not directly. Apple Silicon demonstrates Arm’s efficiency in personal devices, but Apple doesn’t sell CPUs for open data centers. In this space, the AGI CPU competes more with Intel, AMD, NVIDIA, and cloud chips from AWS, Google, or Microsoft.

How does it differ from NVIDIA Grace?

Grace is part of NVIDIA’s complete platform strategy, closely tied to GPUs, interconnects, and AI software. The Arm AGI CPU aims to be a dense, efficient CPU for orchestration and services in data centers, supporting a more open ecosystem.

Can Arm take market share from Intel and AMD in AI servers?

It has a chance in specific workloads with scale, efficiency, and density requirements, especially where rack power and service orchestration matter. However, Intel maintains a strong lead in enterprise compatibility, and AMD offers x86 CPUs with up to 192 cores and a solid cloud and AI narrative.

via: ARM AGI CPU