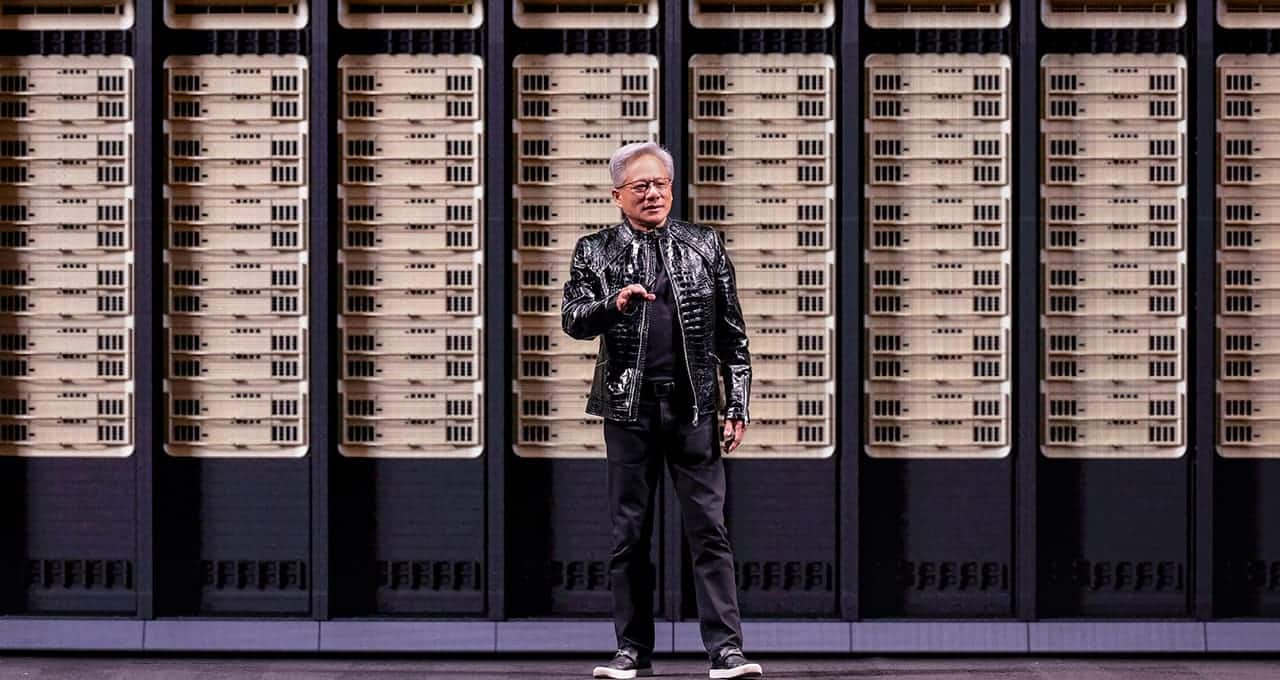

NVIDIA kicks off GTC 2026 today in San Jose under a different kind of pressure than in previous years. The conference runs from March 16 to 19, Jensen Huang’s keynote is scheduled for this Monday, and the company itself has promised announcements on artificial intelligence, accelerated computing, and robotics. This time, however, the focus is not only on delivering more raw performance but on showing that the company can adapt to a new market phase: inference, AI agents, and factory-scale infrastructure.

The big question hanging over GTC 2026 is whether NVIDIA will finally begin to soften its long-held idea that a GPU can handle practically any significant AI workload. Not because GPUs will stop being central, but because the market is shifting. Reuters reports that analysts expect a wave of announcements aimed at strengthening NVIDIA’s position in inference and “agentic AI,” especially as rivals, customers, and new specialized chips put more pressure on that part of the business than on pure training.

This shift in focus is not minor. In recent years, the industry narrative centered on training large models, a domain where Hopper and later Blackwell established NVIDIA as the undisputed reference. Now the conversation is moving toward a different need: running those models quickly, cheaply, and at scale for assistants, agents, and enterprise applications. Reuters stresses that the AI market itself is pivoting from massive training clusters toward a new layer of services in which inference and agent orchestration are gaining importance.

That is why much of the anticipation around GTC 2026 revolves around Groq. Reuters reported on March 13 that NVIDIA is likely to showcase products stemming from its $17 billion acquisition of Groq in December, aimed at strengthening its position in fast, affordable inference. The news agency adds that analysts expect new server lines combining Groq chips with NVIDIA’s networking technology, an approach that would complement GPUs with more specialized hardware for specific phases of inference.

This is the core of the story. Before the keynote, at least, there is no official confirmation that NVIDIA is abandoning a strict “GPU-only” model. However, clear signals suggest the company wants to expand its platform with more specialized components matched to workload needs. This fits Reuters’ thesis: NVIDIA faces growing competition from custom chips, ASICs, and in-house solutions developed by customers such as OpenAI and Meta, especially in inference. In that context, broadening the platform makes more sense than defending an architectural purity the market has already begun to question.

Moreover, the transition is not limited to Groq. Reuters also suggests that GTC 2026 may place more emphasis on CPUs within AI infrastructure, especially in what it calls the “agent orchestration layer.” The idea is that if the near future involves fleets of agents moving between applications, services, and data, the bottlenecks will not always lie in matrix calculations on the GPU; they will also lie in how tasks are coordinated, workflows are distributed, and dependencies are managed. That opens room for CPU-focused servers and for a more heterogeneous architecture than has traditionally been the case.

The infrastructure roadmap offers firmer, official ground. NVIDIA has spent months laying the groundwork for Rubin, Rubin Ultra, and its next-generation racks. In October 2025, the company explained on its official blog that Vera Rubin NVL72 will be a key building block for “AI factories” and that the Kyber architecture is designed to host 576 Rubin Ultra GPUs by 2027. The same post confirms that Intel and Samsung Foundry are participating in the NVLink Fusion ecosystem, bringing x86 CPUs and custom chips into NVIDIA’s infrastructure. Even in recent corporate documentation, the company speaks in terms of open platforms and the integration of diverse silicon rather than isolated GPUs.

Optical interconnects are another topic likely to gain prominence. Reuters indicates that further details can be expected on NVIDIA’s $2 billion investments in Lumentum and Coherent, two companies focused on lasers for optical interconnects. Meanwhile, NVIDIA’s official blog ties Kyber and the Rubin Ultra era to data centers operating at 800 VDC and to new rack densities designed to scale AI to the gigawatt level. Everything points to GTC 2026 covering not just chips but also networking, power, cooling, and connectivity as integral parts of the same platform.

When it comes to Feynman, caution remains essential. Reuters notes that analysts anticipate a roadmap update “from Rubin to Feynman,” but that does not imply a detailed product launch, nor does it validate much of the speculation circulating about process node, stacking, or specific integration with new inference units. For now, it is reasonable to see Feynman as the next major milestone on NVIDIA’s roadmap, not as an imminent release with confirmed specifications.

Ultimately, GTC 2026 may represent something deeper than a new hardware generation. It could be the moment NVIDIA admits, albeit implicitly, that the era of “one GPU for everything” is becoming outdated for the new AI economy. Not because GPUs will stop dominating, but because they now need to coexist with more prominent CPUs, optical interconnects, racks designed as unified systems, and specialized inference chips. If Jensen Huang confirms that direction today, GTC 2026 will not be just another product showcase; it will be a statement of how NVIDIA intends to maintain its leadership as AI shifts from training to delivering fast, cost-effective, large-scale responses.

Frequently Asked Questions

When does GTC 2026 start, and when is Jensen Huang’s keynote?

GTC 2026 runs from March 16 to 19, 2026, in San Jose. Jensen Huang’s keynote is scheduled for March 16.

Has NVIDIA officially confirmed a complete shift away from GPUs?

No. Before the keynote, there is no official confirmation that NVIDIA is abandoning its GPU-based strategy. However, there is a strong expectation of announcements related to inference, AI agents, CPUs, and complementary hardware, driven by market trends and the Groq acquisition.

What role might Groq play at GTC 2026?

Reuters suggests NVIDIA might present products tied to its Groq acquisition, focusing on fast and efficient inference, potentially integrated with NVIDIA’s network and platform technology.

What is officially known about Rubin Ultra and Kyber?

NVIDIA explained in October 2025 that Kyber is designed to host 576 Rubin Ultra GPUs by 2027 and is part of its vision for high-density AI factories, built around 800 VDC power and new rack architectures.