Meta is speeding up development of its own accelerator family, MTIA, reinforcing a trend that is already clear among major hyperscalers: relying less on a single GPU architecture for AI inference. In a technical blog post, Meta details four new generations of its Meta Training and Inference Accelerator line (MTIA 300, 400, 450, and 500), with deployments either already underway or scheduled for 2026 and 2027. The central message is clear: AI inference calls for chips optimized differently from those designed primarily for training.

Meta’s thesis aligns with a broader movement in the sector. Google has already introduced Ironwood as its first TPU designed specifically for the “inference era,” AWS continues to push its Trainium and Inferentia families, and Microsoft has positioned Maia 200 as its new in-house accelerator for inference workloads. None of this amounts to a complete replacement for Nvidia yet, but it signals growing diversification at the silicon level, where cost per token and energy efficiency are starting to matter as much as raw performance.

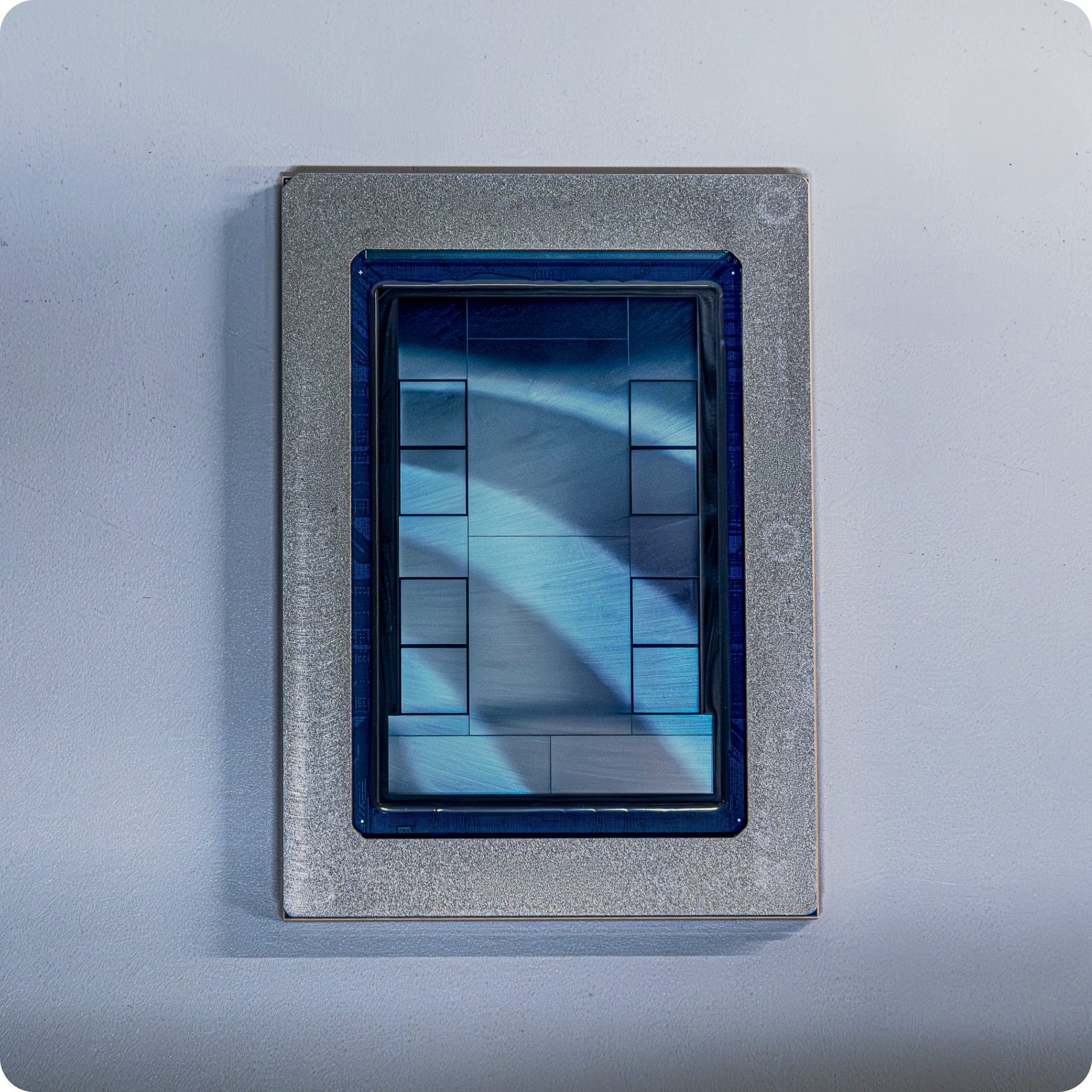

Meta explains that the MTIA family, developed “in close collaboration with Broadcom,” will remain an important part of its AI infrastructure strategy, alongside other internal and external solutions. The company reports that it has already deployed hundreds of thousands of MTIA chips in production and has validated them with internal models and LLMs such as Llama. That foundation now lets it pursue a more aggressive roadmap, with a much faster iteration cycle than is typical in the industry.

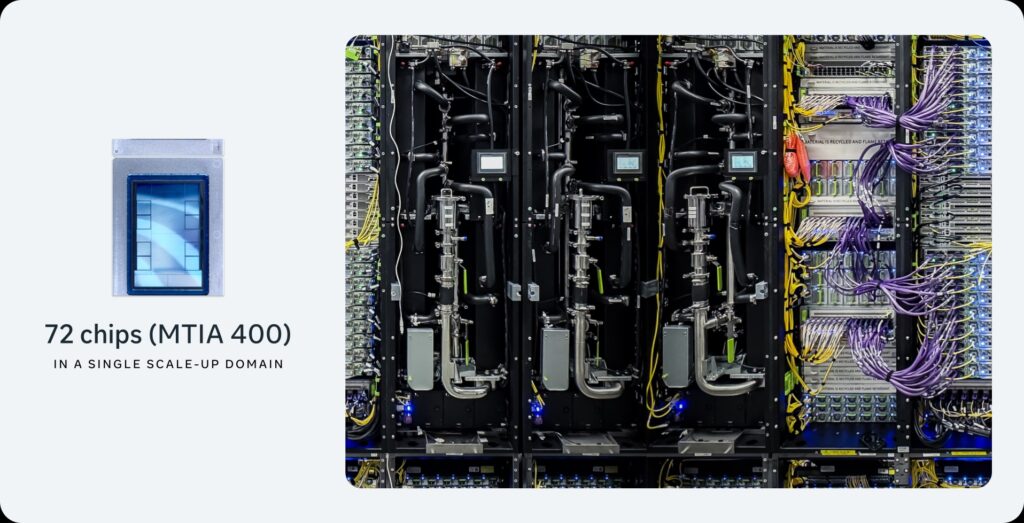

The most striking aspect of the announcement isn’t the number of generations but their focus. Meta claims that from MTIA 300 to MTIA 500, HBM bandwidth increases 4.5-fold and compute capacity 25-fold, all in less than two years. According to the roadmap, MTIA 300 is already in production for training ranking and recommendation models; MTIA 400 has completed lab testing and is headed for data center deployment; MTIA 450 will begin mass deployment in early 2027; and MTIA 500 will follow later that same year.

Inference as a New Battleground

Meta’s technical argument rests on a specific idea: generative inference is bottlenecked in different parts of the system than training is. The company stresses that HBM bandwidth is one of the most decisive factors for inference performance, especially during decoding, when each generation step requires reading the model’s weights from memory and, at small batch sizes, the compute units spend much of their time waiting on that data. That is why Meta says MTIA 450 doubles HBM bandwidth relative to MTIA 400, and MTIA 500 raises it by another 50%. The later generations also increase memory capacity and improve performance in the lower-precision formats favored for inference.
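As a quick consistency check of these bandwidth claims, the sketch below normalizes MTIA 400 to 1.0 and chains the ratios Meta states. It shows that MTIA 500 should offer about 3x the HBM bandwidth of MTIA 400, and that, combined with the 4.5x figure from MTIA 300 to MTIA 500, it implies roughly a 1.5x step from MTIA 300 to MTIA 400. That last ratio is derived here from Meta’s numbers, not stated by Meta, and no absolute TB/s figures are assumed.

```python
# Relative HBM bandwidth across the MTIA roadmap, normalized to MTIA 400 = 1.0.
# Only ratios stated in Meta's announcement are used; no absolute TB/s values are assumed.
MTIA_400 = 1.0
MTIA_450 = 2.0 * MTIA_400  # "doubles the HBM bandwidth compared to MTIA 400"
MTIA_500 = 1.5 * MTIA_450  # "increases it by another 50%"
MTIA_300 = MTIA_500 / 4.5  # 4.5x overall increase from MTIA 300 to MTIA 500

relative_bw = {
    "MTIA 300": MTIA_300,
    "MTIA 400": MTIA_400,
    "MTIA 450": MTIA_450,
    "MTIA 500": MTIA_500,
}

# Derived (not stated directly): the bandwidth step from MTIA 300 to MTIA 400.
implied_300_to_400 = MTIA_400 / MTIA_300

# If decoding were purely memory-bound, the tokens/s ceiling would scale linearly
# with bandwidth, so these relative values double as relative decode ceilings.
for name, bw in relative_bw.items():
    print(f"{name}: {bw:.2f}x MTIA 400 bandwidth")
print(f"Implied MTIA 300 -> 400 step: {implied_300_to_400:.2f}x")
```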

This has significant strategic implications. For years, GPUs have been the nearly universal standard for training and serving models. But hyperscalers are beginning to accept that using the same hardware for everything isn’t necessarily the most efficient approach, especially now that inference accounts for the majority of total compute consumption. This is where Meta, Google, AWS, and Microsoft seem to converge: proprietary chips for predictable, high-volume workloads, while GPUs continue to dominate frontier training and more general applications.

Google has already articulated this quite explicitly with Ironwood, describing it as its seventh-generation TPU, designed specifically for inference, with 192 GB of HBM3E, 7.37 TB/s of bandwidth, and scalability up to 9,216 accelerators. AWS, for its part, presents Trainium3 with 144 GB of HBM3E and 4.9 TB/s per chip, along with UltraServer systems of up to 144 chips. In January 2026, Microsoft introduced Maia 200, an inference accelerator built on a 3 nm process, with 216 GB of HBM3E, 7 TB/s of bandwidth, and a 30% improvement in performance per dollar over the latest hardware in its own fleet.
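To make the bandwidth argument concrete, here is a rough, memory-bound ceiling on decode speed using the per-chip bandwidth figures cited above. It is a back-of-the-envelope sketch under assumptions that do not come from the vendors: a hypothetical dense 70-billion-parameter model with 8-bit weights (about 70 GB, which fits in each chip’s HBM), a single chip, batch size 1, and KV-cache traffic and compute time ignored.

```python
# Rough upper bound on single-sequence decode speed when decoding is memory-bound.
# Assumptions (illustrative only): hypothetical dense 70B-parameter model, 8-bit weights,
# batch size 1, one chip, KV-cache reads and compute time ignored.
PARAMS = 70e9
BYTES_PER_PARAM = 1  # 8-bit weights
weight_bytes = PARAMS * BYTES_PER_PARAM  # ~70 GB streamed from HBM per decode step

# Per-chip HBM bandwidth as cited in the article, in bytes per second.
hbm_bandwidth = {
    "Google Ironwood": 7.37e12,
    "AWS Trainium3": 4.9e12,
    "Microsoft Maia 200": 7.0e12,
}


def decode_ceiling_tokens_per_s(bandwidth_bytes_per_s: float, bytes_per_token: float) -> float:
    """Each decoded token requires reading all weights from HBM at least once,
    so tokens/s cannot exceed bandwidth divided by bytes moved per token."""
    return bandwidth_bytes_per_s / bytes_per_token


ceilings = {
    chip: decode_ceiling_tokens_per_s(bw, weight_bytes)
    for chip, bw in hbm_bandwidth.items()
}

for chip, tps in ceilings.items():
    print(f"{chip}: <= {tps:.0f} tokens/s per sequence")
```

Under these assumptions the ceilings come out to roughly 105, 70, and 100 tokens/s. Batching amortizes each weight read across many sequences, so real servers reach much higher aggregate throughput, but the per-step ceiling still scales with HBM bandwidth, which is the point Meta makes about decoding.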

Less Dependence on Nvidia, But No Breakup

This doesn’t mean Nvidia will lose its central position overnight, and Meta itself does not suggest as much. The company emphasizes that it will keep using a diverse portfolio of internal and external silicon, and the market still recognizes that frontier model training and much of large-scale infrastructure remain closely tied to Nvidia’s GPU platforms. What is changing is the balance within each hyperscaler: training remains largely GPU-dominated, while inference is beginning to shift toward specialized accelerators whose operating costs can be optimized more effectively.

Speed is another priority for Meta. The company says it has built the capacity to launch a new chip generation roughly every six months, thanks to a modular chiplet architecture and the reuse of the same chassis, rack, and network infrastructure across multiple generations. That physical compatibility would let it drop in new chips without redesigning its deployment environment, a key advantage if it is to shorten cycles from the industry’s typical one to two years.

Meta is also betting heavily on open-source software and frictionless adoption, positioning PyTorch, vLLM, Triton, and the OCP ecosystem as the native foundation of MTIA. This matters because the silicon race isn’t decided at the chip level alone: it also depends on how easily models, kernels, and inference pipelines can be ported across platforms. The more interoperable the stack, the more realistic it becomes for hyperscalers to move part of their workloads off the CUDA ecosystem.

Broadcom’s Growing Role Behind the Scenes

In this race, one company is playing an increasingly prominent role behind the scenes: Broadcom. Meta names Broadcom as a close partner in MTIA development, and in October 2025 OpenAI announced a collaboration with Broadcom to deploy 10 GW of custom AI accelerators. Although the two programs differ, such alliances show that custom silicon has become a strategic priority, and an investment within reach only of companies with vast capital and deployment capacity.

The conclusion, then, is not that Nvidia has lost control of the market, but that the industry is actively working to reduce its dependence on Nvidia in specific use cases. With the new MTIA family, Meta aligns with a logic already shared by other giants: train on GPUs where that makes sense, but serve massive inference workloads on proprietary silicon when the system economics justify it. If inference becomes the primary driver of AI compute consumption in the coming years, this decision could carry far more weight than it appears to today.

Frequently Asked Questions

What exactly has Meta announced with MTIA?

Meta has detailed four generations of in-house accelerators (MTIA 300, 400, 450, and 500), with deployments already underway or planned for 2026–2027 and an increasing focus on AI inference workloads.

Why does Meta emphasize HBM bandwidth so much?

Because, according to the company, HBM bandwidth is one of the most critical factors for generative inference performance, especially during decoding. That is why bandwidth doubles from MTIA 400 to MTIA 450 and rises another 50% with MTIA 500.

Does this mean Nvidia is no longer important for hyperscalers?

Not at all. The shift reflects workload segmentation rather than replacement: proprietary chips for large-scale inference, while GPUs remain dominant in training and other general-purpose uses.

What other hyperscalers are following this strategy?

Google with Ironwood TPU, AWS with Trainium3 and Inferentia, and Microsoft with Maia 200 are also boosting their in-house AI and inference silicon programs.

via: ai.meta.com