NVIDIA unveiled a new open reference blueprint at GTC 2026 aimed at one of the major bottlenecks in physical artificial intelligence: data. The company announced the Physical AI Data Factory Blueprint, an architecture designed to automate the generation, augmentation, evaluation, and preparation of data for training robots, computer vision agents, and large-scale autonomous driving systems. The core idea is straightforward: without abundant, diverse, and well-validated data, there can be no reliable robot or truly mature autonomous vehicle.

What makes the announcement significant is NVIDIA’s approach: the company is presenting the blueprint not as an isolated tool but as a complete workflow that runs from raw data to the final training-ready dataset. That workflow covers the curation of real and synthetic data, the generation of scenarios that are rare or hard to capture in the real world, automatic validation of results, and orchestration of the entire process on cloud or hybrid infrastructure. The full blueprint isn’t yet published on GitHub; NVIDIA expects to release it in April, while some key components are already public.

From Simulation to Ready-to-Train Data

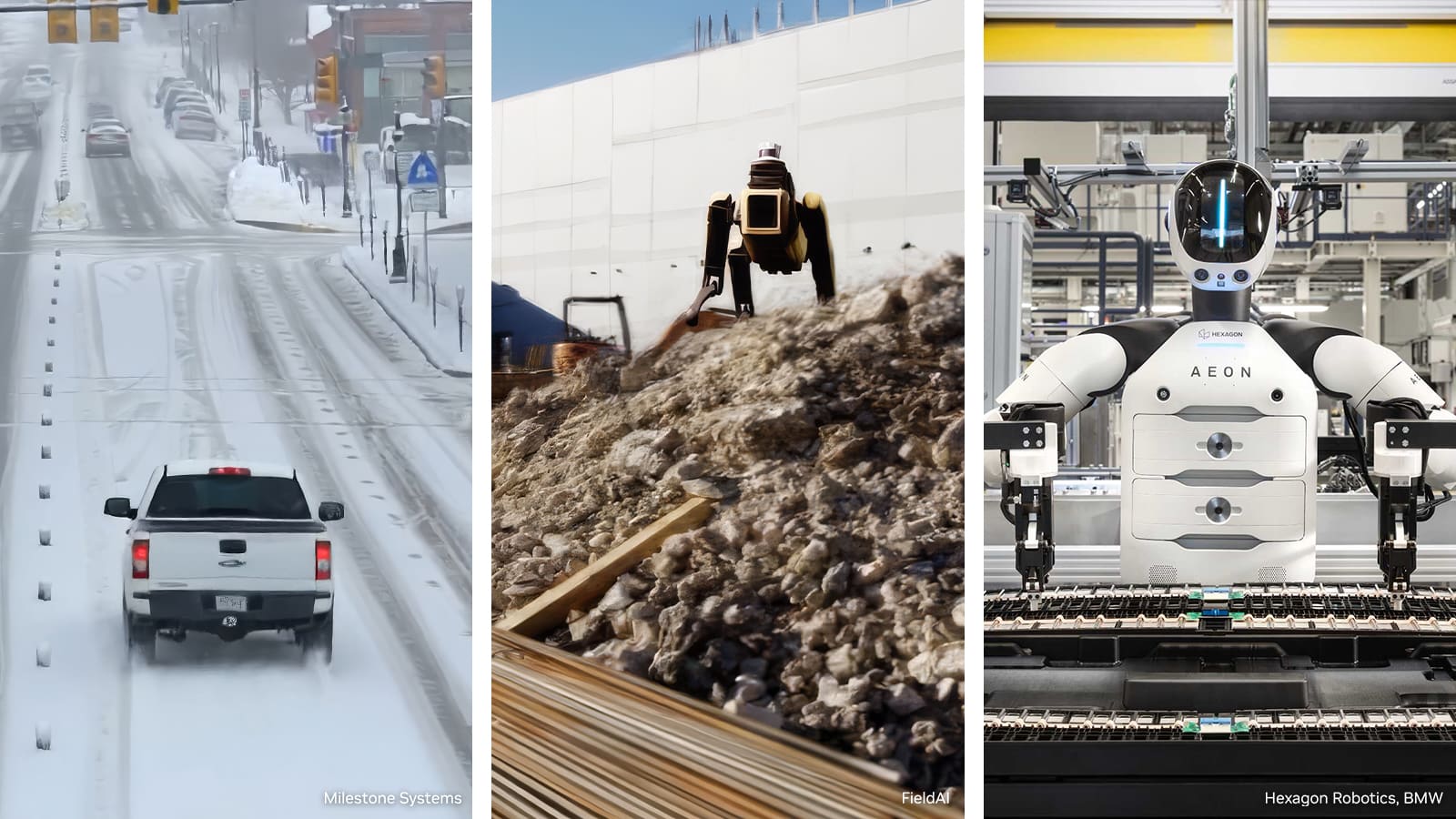

The problem NVIDIA is targeting is clear. In robotics and autonomous driving, powerful models aren’t enough: they must be trained on massive amounts of data that include not only normal situations but also rare cases, failures, adverse weather conditions, lighting changes, and uncommon interactions. These so-called “long tail” scenarios are expensive, slow, and sometimes dangerous to capture in the real world, so synthetic generation and simulation have become central to modern development.

According to NVIDIA, its new blueprint builds upon several established components within the Cosmos ecosystem. Cosmos Curator handles processing, refining, and annotating large datasets of real and synthetic data; Cosmos Transfer expands and diversifies these datasets to cover more scenarios; and Cosmos Evaluator, already available on GitHub, automatically scores, verifies, and filters the physical quality of generated synthetic videos. In its public repository, NVIDIA describes Cosmos Evaluator as an automated evaluation system for synthetic video outputs, checking for hallucinations, obstacle correspondence, and environment attribute verification.
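The curate, augment, and evaluate stages described above can be pictured with a small sketch. This is a conceptual illustration only, not NVIDIA’s API: the `Clip` record, the `curate`, `augment`, and `evaluate` functions, the toy scorer, and the 0.5 threshold are all hypothetical stand-ins for what Cosmos Curator, Cosmos Transfer, and Cosmos Evaluator do at far larger scale.

```python
from dataclasses import dataclass, replace
from typing import Callable, Iterable, List


@dataclass(frozen=True)
class Clip:
    """Hypothetical record for one video clip (not an NVIDIA data type)."""
    clip_id: str
    source: str         # "real" or "synthetic"
    condition: str      # e.g. "clear", "rain", "fog"
    score: float = 1.0  # hypothetical quality score in [0, 1]


def curate(clips: Iterable[Clip]) -> List[Clip]:
    """Curation stand-in: drop clips whose ID was already seen."""
    seen, kept = set(), []
    for clip in clips:
        if clip.clip_id not in seen:
            seen.add(clip.clip_id)
            kept.append(clip)
    return kept


def augment(clips: List[Clip], conditions: List[str]) -> List[Clip]:
    """Augmentation stand-in: one synthetic variant per real clip and new condition."""
    variants = [
        replace(clip, clip_id=f"{clip.clip_id}-{cond}", source="synthetic", condition=cond)
        for clip in clips
        if clip.source == "real"
        for cond in conditions
        if cond != clip.condition
    ]
    return clips + variants


def evaluate(clips: List[Clip], scorer: Callable[[Clip], float], threshold: float) -> List[Clip]:
    """Evaluation stand-in: score synthetic clips and drop those below the threshold."""
    kept = []
    for clip in clips:
        if clip.source == "synthetic":
            clip = replace(clip, score=scorer(clip))
            if clip.score < threshold:
                continue
        kept.append(clip)
    return kept


def toy_scorer(clip: Clip) -> float:
    """Made-up scorer: pretends that fog variants come out physically implausible."""
    return 0.4 if clip.condition == "fog" else 0.9


raw = [
    Clip("a", "real", "clear"),
    Clip("a", "real", "clear"),  # duplicate, removed during curation
    Clip("b", "real", "rain"),
]
ready = evaluate(augment(curate(raw), ["rain", "fog"]), toy_scorer, threshold=0.5)
print([c.clip_id for c in ready])
```

The point of the sketch is the ordering: evaluation sits after augmentation, so low-quality synthetic variants are filtered out before they reach a training set, while real clips pass through unchanged.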

The component that ties it all together is OSMO, NVIDIA’s open orchestrator for physical AI workloads. The company describes it as a cloud-native orchestration platform that coordinates training clusters, simulation environments, and edge deployments through workflows defined in YAML. OSMO doesn’t replace simulators or training frameworks; it organizes and manages them. It now also integrates with coding agents such as Claude Code, OpenAI Codex, and Cursor to automate parts of the operation. In short, NVIDIA wants developers to spend less time fighting infrastructure and more time developing models.
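To make the orchestration idea concrete, here is a minimal sketch of what any workflow orchestrator has to do at its core: take declared stages and their dependencies and run them in a valid order. It does not use OSMO or its YAML schema. The `WORKFLOW` dictionary, the stage names, and the `run_workflow` helper are assumptions made for illustration, built on Python’s standard `graphlib` module.

```python
from graphlib import CycleError, TopologicalSorter
from typing import Callable, Dict, List

# Hypothetical workflow: each stage maps to the stages it depends on.
# A real orchestrator would load this from a workflow file instead.
WORKFLOW: Dict[str, List[str]] = {
    "curate": [],
    "generate": ["curate"],
    "evaluate": ["generate"],
    "train": ["evaluate"],
}


def execution_order(workflow: Dict[str, List[str]]) -> List[str]:
    """Return the stages so that every stage comes after its dependencies."""
    return list(TopologicalSorter(workflow).static_order())


def run_workflow(workflow: Dict[str, List[str]], tasks: Dict[str, Callable[[], None]]) -> List[str]:
    """Run each stage's task in dependency order and return the completed stages."""
    completed = []
    for stage in execution_order(workflow):
        tasks[stage]()
        completed.append(stage)
    return completed


# Placeholder tasks; in practice these would launch simulation or training jobs.
tasks = {name: (lambda: None) for name in WORKFLOW}
print(run_workflow(WORKFLOW, tasks))

# A circular dependency is rejected instead of being silently run.
try:
    execution_order({"a": ["b"], "b": ["a"]})
except CycleError:
    print("cycle detected")
```

A production orchestrator adds scheduling across clusters, retries, and resource management on top of this, but the dependency ordering shown here is the common foundation.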

Azure and Nebius Make the Announcement More Tangible

One of the most interesting aspects is that Microsoft Azure and Nebius are more than just showcase partners. Microsoft has already introduced an Azure Physical AI Toolchain on GitHub, described in its own repository as an open-source, “production-ready” framework that combines Azure cloud services with NVIDIA’s physical AI stack. The project integrates Azure Machine Learning, AKS, Azure Arc, storage, security with Entra ID, simulation with Isaac Sim and Isaac Lab, and orchestration with OSMO, with a clear focus on enterprise environments and serious deployments rather than mere demos.

This distinction matters because it anchors the GTC announcement in something concrete. Microsoft isn’t just talking about simulation but about a full pipeline that includes data capture on Jetson devices, automatic data conversion, validation, training, evaluation, and model deployment at the edge. It even claims its quick-start guide can train a pick-and-place policy in Isaac Lab on Azure GPUs, track metrics with MLflow, and deploy the model to a Jetson device using GitOps in under two hours. The promise is ambitious, but it is backed by available repositories, architecture, and public documentation.

Meanwhile, Nebius has presented its approach as a managed service within its AI Cloud. The company states it has integrated NVIDIA’s blueprint into its infrastructure and now offers an environment for generating physics-based synthetic data, orchestrating flows with OSMO, and combining this layer with storage, tagging, serverless execution, and managed inference. Nebius also claims that some early users are reducing iteration cycles from weeks to days, though this should be viewed as a commercial assertion rather than an independent comparison.

Why This News Matters More Than It Seems

This announcement fits a strategy NVIDIA has been building around “physical AI” for months. In January, it introduced Alpamayo, an open family of models and tools for autonomous driving focused on complex scenarios and long-tail traffic reasoning. The company now says it is using the new blueprint to train and evaluate Alpamayo, while companies like Skild AI apply it to generalist robots and Uber uses it to accelerate autonomous vehicle development. This elevates the reference blueprint from a technical component to foundational infrastructure for the next cycle of physical models.

There’s also a broader industrial perspective. In robotics and automotive, many companies fail not due to lack of ideas but because of fragmentation among simulation, training, evaluation, and deployment stages. NVIDIA seeks to bridge this gap with a comprehensive proposal that blends real-world models, simulation, synthetic generation, automatic evaluation, and multi-infrastructure orchestration. It’s not a magic product or a turnkey solution for everyone, but it clearly signals where the market is heading: fewer isolated tools and more complete pipelines of data and validation ready to scale.

It remains to be seen how quickly this vision translates into widespread adoption beyond announced partners and how well the open blueprint attracts independent developers, startups, and manufacturers beyond NVIDIA’s ecosystem. The core message, however, is clear: in physical AI, advantage will depend less on the model or chip and more on who can produce, debug, and validate better data faster. This is what NVIDIA aims to achieve by turning accelerated computing into a true data factory.

Frequently Asked Questions

What exactly is the NVIDIA Physical AI Data Factory Blueprint?

An open reference architecture announced by NVIDIA to automate the curation, synthetic generation, augmentation, evaluation, and preparation of data for training robots, vision agents, and autonomous vehicles.

Is it already available for download?

NVIDIA expects the full blueprint to be on GitHub in April 2026. However, some components like Cosmos Evaluator are already public, and some parts of the ecosystem can already be tested through related repositories and services.

What roles do Microsoft Azure and Nebius play in this project?

Azure is integrating the blueprint into an open toolchain for physical AI published on GitHub, while Nebius has incorporated it into its AI Cloud as a foundation for managed workflows covering data generation, training, and deployment.

Why is synthetic data so important in robotics and autonomous vehicles?

Because it makes it possible to cover rare or risky scenarios that are very difficult, expensive, or slow to capture in the real world. This is key for training more robust models and validating their behavior before deployment.