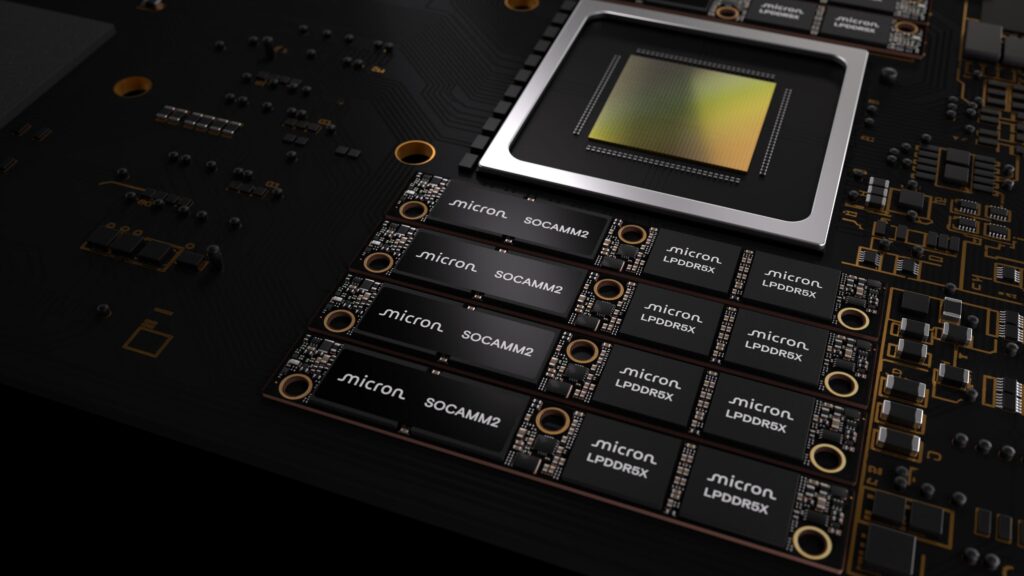

The AI data center market is usually discussed in terms of GPUs, networks, and watts per rack. In practice, however, many bottlenecks are shifting to a less “glamorous” but critical component: memory. Micron has put concrete figures and products behind that idea with an announcement aimed squarely at the problem: the company is now shipping customer samples of its 256 GB SOCAMM2, an LPDRAM module (LPDDR5X adapted for servers) designed to accelerate inference architectures and general compute workloads with significantly lower power consumption than traditional RDIMMs.

The context is crucial: the combination of training, inference, agents, and “classic” workloads is pushing servers to require more capacity, efficiency, and density. Workloads grow in scope, KV caches become persistent, and energy costs are no longer secondary factors. Micron asserts that, at this level, memory is no longer just a “companion” to compute but a system limit affecting performance, scalability, and total cost of ownership.

What is SOCAMM2 and why now?

SOCAMM2 stands for Small Outline Compression Attached Memory Module 2: a modular format derived from the CAMM2 family, designed to deliver high capacity in a compact, “serviceable” (replaceable) module based on LPDDR5X. The idea is to take the strengths of low-power memory, typical of mobile devices, and adapt them for server platforms where density, heat, and power limits are critical.

The industry has been developing SOCAMM and SOCAMM2 as an alternative for certain high-performance designs and AI-optimized systems. Sector analysts point out that the 14 × 90 mm form factor is intended to take up less space than RDIMMs, and that the LPDDR approach can offer clear power-efficiency advantages.

Micron’s leap: 256 GB, monolithic 32 Gb die, focused on efficiency

Micron’s move includes two key technical announcements. First: the SOCAMM2 256 GB module is supported by what the company describes as the industry’s first monolithic 32 Gb LPDDR5X die. This is notable because integration and packaging often determine whether a prototype can become a scalable product.

Second: Micron claims SOCAMM2 consumes about one-third of the energy and occupies one-third of the space of equivalent RDIMMs, based on its internal calculations (comparing a 128 GB SOCAMM2 with two 64 GB DDR5 RDIMMs for the same bus and capacity). This nuance is worth emphasizing: the figure is a useful benchmark, but it depends on specific conditions and configurations, and it was calculated for a 128 GB module rather than the new 256 GB one.
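To make the comparison concrete, the Python sketch below normalizes the two-RDIMM baseline to 1.0 and applies Micron's claimed ratios at equal capacity. The class and function names are hypothetical, and the relative units stand in for real watts and board area, which the announcement does not give; substitute measured values if you have them.

```python
from dataclasses import dataclass

# Ratios as stated by Micron (internal calculations, approximate):
# 128 GB SOCAMM2 vs. two 64 GB DDR5 RDIMMs at the same capacity.
CLAIMED_POWER_RATIO = 1 / 3
CLAIMED_SIZE_RATIO = 1 / 3


@dataclass(frozen=True)
class MemoryConfig:
    name: str
    capacity_gb: int
    power: float      # relative units, not watts
    footprint: float  # relative units, not mm^2

    def power_per_gb(self) -> float:
        return self.power / self.capacity_gb


def apply_claimed_ratios(baseline: MemoryConfig, name: str) -> MemoryConfig:
    """Scale a baseline by the claimed ratios, keeping capacity unchanged."""
    return MemoryConfig(
        name=name,
        capacity_gb=baseline.capacity_gb,
        power=baseline.power * CLAIMED_POWER_RATIO,
        footprint=baseline.footprint * CLAIMED_SIZE_RATIO,
    )


# Normalize the RDIMM pair to 1.0 so results read directly as fractions.
rdimm_pair = MemoryConfig("2 x 64 GB DDR5 RDIMM", capacity_gb=128, power=1.0, footprint=1.0)
socamm2 = apply_claimed_ratios(rdimm_pair, "128 GB SOCAMM2")

for cfg in (rdimm_pair, socamm2):
    ratio = cfg.power_per_gb() / rdimm_pair.power_per_gb()
    print(f"{cfg.name}: power={cfg.power:.2f}, footprint={cfg.footprint:.2f}, "
          f"power per GB vs. RDIMM={ratio:.2f}")
```

Because capacity is held constant, the power-per-GB ratio equals the raw power ratio; the comparison only stays this simple if both configurations really deliver the same capacity and bus.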

The more disruptive aspect of the announcement is architectural: Micron states that with 256 GB per module, a CPU in an 8-channel server configuration can reach 2 TB of LPDRAM, targeting very large contexts and complex inference workloads.
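The 2 TB figure follows from simple arithmetic, sketched below in Python under the assumption (not stated in the announcement) of one 256 GB module per channel. The same arithmetic shows what the 32 Gb die implies: 32 Gb is 4 GB, so a 256 GB module holds 64 dies' worth of raw capacity, ignoring any additional dies a real module might carry. The function names are illustrative only.

```python
BITS_PER_BYTE = 8


def capacity_per_cpu_gb(module_gb: int, channels: int, modules_per_channel: int = 1) -> int:
    """Total memory per CPU; one module per channel is an assumption."""
    return module_gb * channels * modules_per_channel


def dies_per_module(module_gb: int, die_gbit: int = 32) -> int:
    """Raw capacity expressed as a count of monolithic dies (no ECC/spares)."""
    die_gb = die_gbit // BITS_PER_BYTE  # 32 Gb -> 4 GB
    return module_gb // die_gb


total_gb = capacity_per_cpu_gb(256, channels=8)
# 2048 GB, i.e. 2 TB in the binary sense used for memory capacities.
print(total_gb, "GB =", total_gb / 1024, "TB")
print(dies_per_module(256), "x 32 Gb dies per 256 GB module")
```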

Expected impact on inference: “time to first token” and KV cache

Micron highlights time to first token (TTFT) as a key metric for LLM applications, since it reflects the sense of “immediate response” in interactive inference. According to Micron's internal tests, using 2 TB of LPDRAM per CPU in unified memory architectures to offload KV caches can improve TTFT by more than 2.3× in long-context inference. The company's stated test conditions were Llama 3 70B (FP16), a 500K-token context window, and 16 concurrent users.
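A back-of-envelope KV cache estimate shows why a multi-terabyte memory tier matters at these settings. The Python sketch below assumes the published Llama 3 70B shape (80 layers, 8 key/value heads under grouped-query attention, head dimension 128) and FP16 storage; these parameters come from the model's public configuration rather than from Micron and are worth verifying against the exact checkpoint used.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelShape:
    num_layers: int
    num_kv_heads: int
    head_dim: int
    bytes_per_value: int  # 2 for FP16


def kv_bytes_per_token(m: ModelShape) -> int:
    # Each layer stores one K and one V vector per KV head for every token.
    return 2 * m.num_layers * m.num_kv_heads * m.head_dim * m.bytes_per_value


def kv_cache_bytes(m: ModelShape, context_tokens: int, users: int) -> int:
    # Naive upper bound: every user holds a full-length cache at once.
    return kv_bytes_per_token(m) * context_tokens * users


# Assumed Llama 3 70B shape (public model config), FP16 cache.
LLAMA3_70B_FP16 = ModelShape(num_layers=80, num_kv_heads=8, head_dim=128, bytes_per_value=2)

per_token = kv_bytes_per_token(LLAMA3_70B_FP16)
total = kv_cache_bytes(LLAMA3_70B_FP16, context_tokens=500_000, users=16)
print(f"{per_token / 1024:.0f} KiB per token")
print(f"{total / 1e12:.2f} TB for 16 users x 500K tokens")
```

Under these assumptions each user needs roughly 164 GB of KV cache, and 16 users at full context would need about 2.6 TB. Micron does not describe how its test splits the cache between GPU memory and LPDRAM, so the estimate only conveys the order of magnitude involved.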

Furthermore, in standalone CPU applications, Micron claims performance per watt can improve by >3× compared to conventional memory modules for specific HPC workloads (according to its internal testing).
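Performance per watt is work divided by power, so its reciprocal is energy per unit of work. The Python sketch below uses placeholder numbers (not Micron data) to show that a 3× gain in performance per watt cuts the energy for a fixed job to one-third. The article does not say whether Micron's figure covers memory power alone or the whole system, so the ratio should only be applied to whichever power boundary the measurement actually used.

```python
JOULES_PER_KWH = 3.6e6


def perf_per_watt(ops_per_second: float, watts: float) -> float:
    # (ops/s) / W = ops per joule
    return ops_per_second / watts


def job_energy_kwh(total_ops: float, ops_per_joule: float) -> float:
    # Energy for a fixed amount of work: ops / (ops/J) = J, then J -> kWh.
    return total_ops / ops_per_joule / JOULES_PER_KWH


# Hypothetical baseline; the numbers are placeholders, not measurements.
baseline = perf_per_watt(ops_per_second=1e12, watts=500.0)
improved = baseline * 3.0  # ">3x" treated as exactly 3x for illustration

job_ops = 1e18  # hypothetical job size
e_base = job_energy_kwh(job_ops, baseline)
e_new = job_energy_kwh(job_ops, improved)
print(f"baseline: {e_base:.1f} kWh, improved: {e_new:.1f} kWh")
```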

For data center teams, the key point isn’t just “faster,” but “more efficient”: if inference tasks can be maintained with fewer watts per GB and higher rack density, data center designs change. This is especially relevant now, as electrical supply and cooling constraints are significant barriers to scaling.

NVIDIA involved: co-design and platform validation

Micron frames SOCAMM2 within a collaboration with NVIDIA to co-design memory for advanced AI infrastructure. The company emphasizes that the goal is to optimize “every layer” of the system, with NVIDIA data center CPU teams valuing the combination of large capacity and reduced power consumption compared to traditional memory solutions.

Industry observers add, however, that existing as a module is not enough for SOCAMM2 to become a de facto standard. It must fit into real platforms and be supported by the ecosystem, and standardization must reduce friction among vendors.

JEDEC and the standardization race: potential for accelerated adoption

Micron also highlights its involvement in defining the SOCAMM2 specification within JEDEC, a key factor in transitioning from “interesting design” to “industry standard.” A shared framework would allow more manufacturers and integrators to work based on the same specifications, reducing reliance on proprietary implementations.

Alongside Micron, other major industry players are proposing their own versions of SOCAMM2 as an LPDDR module for data centers, which points to a broader industry push toward higher capacity and efficiency, even if that means challenging the established DDR5 RDIMM standard.

Summary table: what Micron’s 256 GB SOCAMM2 promises

| Key point | What Micron announces | Why it matters for data centers |
|---|---|---|
| Module capacity | 256 GB SOCAMM2 | More memory “closer” to the CPU for inference and HPC |
| Base die | Monolithic 32 Gb LPDDR5X | Density and packaging suited to scaling module capacity |
| Capacity per CPU (8 channels) | Up to 2 TB of LPDRAM | Room for large context windows and more headroom for KV caches |
| Power and footprint (comparison) | ~1/3 the energy and ~1/3 the size of equivalent RDIMMs (internal estimate, 128 GB SOCAMM2 vs. two 64 GB DDR5 RDIMMs) | Better rack density and thermal management |
| Inference (TTFT) | >2.3× TTFT improvement in long-context inference (internal tests) | Faster responses in interactive LLMs |
| Performance per watt (CPU/HPC) | >3× performance/W in specific HPC workloads (internal tests) | Lower energy cost per unit of useful work |

Upcoming: from samples to real deployment

For now, Micron is only shipping samples to customers. That phase typically marks the start of the most demanding stage: platform validation, thermal profiles, liquid cooling compatibility, field serviceability, and cost per GB compared with alternatives. The company argues that its modular design improves “serviceability” and supports more energy-efficient server architectures.

If the 256 GB SOCAMM2 transitions into commercial designs at scale, it won’t just be a “bigger module”: it could reinforce a trend where data centers are redesigned around low-power memory to support large-context inference under tighter power constraints.

Frequently Asked Questions

What is a SOCAMM2 module, and how is it used in AI servers?

SOCAMM2 is a modular LPDDR5X memory format aimed at data centers. It offers high capacity and lower power consumption than RDIMMs, which makes it suitable for inference workloads with large context windows and KV caches.

Why does Micron emphasize “2 TB per CPU,” and what does that mean practically?

Micron states that with 256 GB modules, it’s possible to reach 2 TB of LPDRAM per CPU with 8 channels, enabling more context data and reducing memory bottlenecks during complex inference.

Will SOCAMM2 replace DDR5 RDIMMs in all data centers?

Not necessarily. SOCAMM2 targets specific architectures where power and density are critical. RDIMMs will remain dominant for many general-purpose deployments, but SOCAMM2 could gain ground in efficiency-optimized platforms.

What should system teams watch before adopting SOCAMM2?

Platform compatibility (CPU and motherboard), actual volume availability, thermal profiles, manufacturer support, and total cost per GB, including the impact on rack space, power, and cooling.
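One way to put the last item into numbers is a simple lifetime cost per GB that adds purchase price, energy (scaled by PUE to account for cooling overhead), and allocated rack space cost. The Python sketch below is a hypothetical model rather than any vendor's methodology; every input, including the two example options, is a placeholder to be replaced with real quotes and measured power.

```python
from dataclasses import dataclass

HOURS_PER_YEAR = 24 * 365  # 8760, ignoring leap years


@dataclass(frozen=True)
class MemoryOption:
    name: str
    capacity_gb: float
    purchase_cost: float        # acquisition cost, any currency
    avg_power_w: float          # average memory power draw in watts
    space_cost_per_year: float  # rack space cost allocated to this memory


def tco_per_gb(opt: MemoryOption, years: float, price_per_kwh: float, pue: float) -> float:
    """Lifetime cost per GB: purchase + energy (scaled by PUE) + space."""
    it_energy_kwh = opt.avg_power_w / 1000.0 * HOURS_PER_YEAR * years
    facility_energy_kwh = it_energy_kwh * pue  # PUE folds in cooling/overhead
    energy_cost = facility_energy_kwh * price_per_kwh
    space_cost = opt.space_cost_per_year * years
    return (opt.purchase_cost + energy_cost + space_cost) / opt.capacity_gb


# Placeholder inputs only; substitute vendor quotes and measured power.
option_a = MemoryOption("option A", capacity_gb=2048, purchase_cost=10_000,
                        avg_power_w=120, space_cost_per_year=300)
option_b = MemoryOption("option B", capacity_gb=2048, purchase_cost=12_000,
                        avg_power_w=40, space_cost_per_year=100)

for opt in (option_a, option_b):
    cost = tco_per_gb(opt, years=5, price_per_kwh=0.15, pue=1.4)
    print(f"{opt.name}: {cost:.2f} per GB over 5 years")
```

Keeping energy and space as separate terms makes it easy to see when a higher purchase price per GB is offset by lower power and a smaller footprint over the deployment lifetime.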

via: investors.micron